On November 24, 2025, Anthropic and Snowflake announced that Claude Opus 4.5 is now available to organizations via Snowflake Cortex AI.

In practice, this means data teams can deploy “premium frontier” intelligence without data ever leaving their security perimeter.

Why This Release Matters Now

Anthropic’s latest Opus generation arrives at a moment when enterprises are accelerating AI adoption but remain wary of data movement, security exposure, and fragmented infrastructure.

By situating Opus 4.5 directly inside Snowflake Cortex AI, organizations gain access to a sophisticated reasoning model with minimal architectural overhead: no separate API provisioning, no egress costs, and no duplicated governance layers.

The rollout also aligns with a broader industry pivot toward “AI-inside-the-data-platform” architectures. Here, LLMs sit closer to the systems that store operational and analytical data.

For enterprises with complex compliance requirements, that proximity is not simply a convenience; it’s increasingly a prerequisite.

What Claude Opus 4.5 Brings to Snowflake Cortex AI

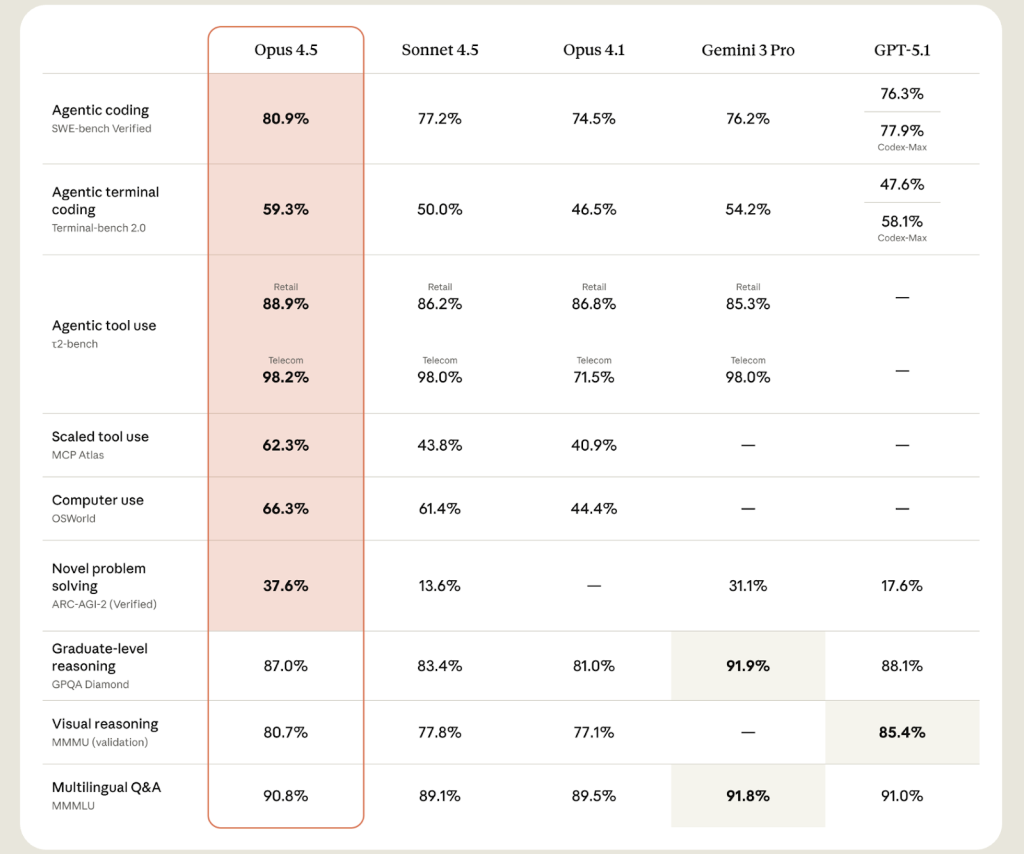

Claude Opus 4.5 is Anthropic’s new “premium frontier” model and its most capable to date, targeting hard problems in coding, tool use, and long-horizon reasoning.

Key traits relevant in a Snowflake context include:

Frontier Performance (And a Context Window to Match)

Opus 4.5 is positioned as the “premium frontier model” of the Claude family. Compared to predecessors (Opus 4.1, Sonnet 4), Opus 4.5 reportedly brings stronger reasoning, coding, and agent-level performance.

Notably, like other recent Claude 4 variants, Opus 4.5 supports long context windows. This makes it better suited for analyzing long documents, orchestrating multi-step processes, and sustaining agentic workflows.

Enterprise-Friendly Deployment and Tooling

Through Cortex AI Functions (e.g., AI_COMPLETE, AI_SUMMARIZE), Opus 4.5 becomes accessible via simple SQL calls or API endpoints. That is a significant win for teams that don’t want to build bespoke LLM infrastructure from scratch.

And because it runs within Snowflake’s managed, governed environment, enterprises retain familiar security, compliance, and data-governance controls. This mitigates one of the biggest frictions when deploying LLMs on sensitive or regulated data.

Price Drop

Snowflake customers now gain access to a premium-tier model at an unexpectedly aggressive price.

Opus 4.5 outperforms both Sonnet 4.5 and Opus 4.1 across coding, agents, computer use, and office tasks.

Despite the performance jump, it debuts at roughly one-third the price of Opus 4.1.

The pricing shift has major implications:

- Broader accessibility: Advanced reasoning becomes financially viable for mid-market organizations and larger workloads.

- Lower experimentation risk: Data teams can prototype multi-step or agentic workflows without prohibitive costs.

- Competitive pressure: Other AI vendors may be forced to re-examine pricing models across top-end tiers.

Opus 4.5 effectively redefines what “premium” means in enterprise AI pricing.
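To make the one-third figure concrete, here is a minimal Python sketch of the arithmetic. It assumes Anthropic's published API list prices per million tokens ($15 input / $75 output for Opus 4.1, $5 / $25 for Opus 4.5); Snowflake bills Cortex usage in credits, so actual charges there may differ. The nightly log-analysis workload and its token counts are hypothetical.

```python
# Assumed list prices in USD per million tokens (Anthropic API, not Snowflake credits).
PRICES_PER_MTOK = {
    "opus-4.1": {"input": 15.00, "output": 75.00},
    "opus-4.5": {"input": 5.00, "output": 25.00},
}


def workload_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the USD cost of a workload at the assumed list prices."""
    price = PRICES_PER_MTOK[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical nightly job: 10,000 log entries, about 800 input
# and 150 output tokens per entry.
input_tokens = 10_000 * 800
output_tokens = 10_000 * 150

old = workload_cost("opus-4.1", input_tokens, output_tokens)
new = workload_cost("opus-4.5", input_tokens, output_tokens)
print(f"Opus 4.1: ${old:.2f}  Opus 4.5: ${new:.2f}  ratio: {old / new:.1f}x")
```

Because both the input and output rates drop by the same factor, the ratio stays at 3x regardless of how a workload splits between input and output tokens.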

How Opus 4.5 Lives Inside Snowflake Cortex AI

Snowflake is exposing Claude Opus 4.5 along three main surfaces, all within Snowflake’s security perimeter:

- Cortex AI Functions

- Cortex REST API

- Snowflake Intelligence (coming soon)

Let’s look at each in more detail:

Cortex AI Functions

The immediate value lies in accessing Opus 4.5 through Cortex AI Functions. You can now embed complex reasoning directly into data pipelines using the AI_COMPLETE function (formerly COMPLETE).

Example

Scenario: A DevOps team wants to categorize thousands of server error logs and suggest fixes without writing Python scripts.

```sql
SELECT
    server_id,
    error_log,
    -- Call Claude Opus 4.5 to analyze each log directly in the query.
    -- The model identifier is as written in this article; confirm the exact
    -- string against Snowflake's list of supported models.
    AI_COMPLETE(
        'claude-opus-4.5',
        CONCAT(
            'Analyze this error log. Classify the severity (High/Medium/Low) and suggest a 1-sentence fix: ',
            error_log
        )
    ) AS remediation_advice
FROM system_logs
WHERE event_date = CURRENT_DATE();
```
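The query returns remediation_advice as free text. If a downstream step needs the severity as its own field, one option is a small parser like the Python sketch below. The helper name `extract_severity` is hypothetical, and the sketch assumes the model echoes one of the three labels from the prompt somewhere in its reply.

```python
import re
from typing import Optional

# Matches the three labels requested in the prompt, in any letter case.
SEVERITY_PATTERN = re.compile(r"\b(high|medium|low)\b", re.IGNORECASE)


def extract_severity(remediation_advice: str) -> Optional[str]:
    """Return the first severity label found in the model's reply, or None."""
    match = SEVERITY_PATTERN.search(remediation_advice)
    return match.group(1).capitalize() if match else None


# Hypothetical replies of the kind the prompt asks for.
print(extract_severity("Severity: HIGH. Restart the connection pool."))
print(extract_severity("The disk is nearly full; rotate logs."))
```

Taking the first match is fragile: a reply such as "Low disk space, severity High" would be labeled Low. Asking the model for a fixed reply format, or for structured output, is the more robust route when the label feeds automated decisions.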

Cortex REST API

Application teams can tap Opus 4.5 with low latency, add prompt caching to reduce costs on repetitive workloads, and combine it with enhanced tool-calling to drive more complex, multi-step workflows.

Example

Scenario: A legal tech app sends a contract snippet to Snowflake for analysis to ensure the sensitive text never leaves the governed environment.

POST /api/v2/cortex/inference:complete

```json
{
  "model": "claude-opus-4.5",
  "messages": [
    {
      "role": "user",
      "content": "Identify any indemnity clauses in this attached contract section that violate GDPR compliance."
    }
  ],
  "temperature": 0.0
}
```
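From application code, the same call could be assembled roughly as in the Python sketch below. It only builds the request and does not send it. The account URL, the token placeholder, and the helper name `build_cortex_request` are hypothetical; the `:complete` suffix on the path and the header set follow my reading of Snowflake's Cortex REST API documentation and should be checked there, as should the model identifier, which is copied from this article.

```python
import json
import urllib.request


def build_cortex_request(
    account_url: str, token: str, contract_text: str
) -> urllib.request.Request:
    """Build (but do not send) a Cortex inference request for one contract section."""
    payload = {
        "model": "claude-opus-4.5",  # as written in this article; verify before use
        "messages": [
            {
                "role": "user",
                # The contract text travels inside the prompt itself.
                "content": (
                    "Identify any indemnity clauses in this contract section "
                    "that violate GDPR compliance.\n\n" + contract_text
                ),
            }
        ],
        "temperature": 0.0,
    }
    return urllib.request.Request(
        url=account_url.rstrip("/") + "/api/v2/cortex/inference:complete",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
            "Accept": "application/json, text/event-stream",
        },
        method="POST",
    )


request = build_cortex_request(
    "https://example-account.snowflakecomputing.com",  # hypothetical account URL
    "REPLACE_WITH_TOKEN",  # placeholder, not a real credential
    "The Supplier shall indemnify the Customer against all claims arising from data processing.",
)
print(request.get_method(), request.full_url)
```

Passing the result to `urllib.request.urlopen` would send it. Keeping construction separate from sending makes the payload easy to inspect and unit test before any sensitive text is transmitted.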

Snowflake Intelligence

Snowflake Intelligence is the new “agentic” layer where Opus 4.5 will soon power conversational interfaces.

Instead of writing code, users ask questions, and Opus 4.5 orchestrates the necessary SQL queries and tool calls behind the scenes.


Use Cases: What It Means for Key Stakeholders

Beyond the launch headlines, the real story is how this changes day-to-day work for teams already invested in Snowflake.

For Data Engineers/Analytics Teams

You now have access to a high-capability LLM without building extra ETL pipelines or exporting data to external “AI sandboxes.” This reduces complexity, accelerates development on Snowflake, and keeps governance fully within Snowflake.

For CTOs/Platform Architects

The integration strengthens the “data cloud + AI cloud” convergence trend. Snowflake + Anthropic becomes a foundation for building AI-driven data products, with fewer moving parts and lower risk exposure.

For Line-of-Business Leaders

The barrier to entry for AI-powered insights and automation has just been lowered. Business users can potentially leverage advanced LLM capabilities (summaries, reports, agentic tasks) via SQL or prebuilt pipelines, without requiring deep ML or data science expertise.

For Governance/Compliance Teams

Because data doesn’t leave the Snowflake perimeter, security, access control, audit trails, and compliance policies remain intact. That is a non-trivial win in regulated sectors such as finance, healthcare, and manufacturing.

From Aegis Softtech’s Lens: A Realistic Leap

Opus 4.5 is launching across multiple major platforms, including Google’s Vertex AI and Amazon Bedrock. That breadth underscores Anthropic’s strategy to be available wherever enterprises already build.

Snowflake’s same-day availability as a launch partner signals the depth of the collaboration between the two companies, which had already highlighted Claude’s role in powering Cortex Analyst and related capabilities.

From an enterprise buyer’s perspective, the differentiator is not just “does this platform have Opus 4.5?” but “how tightly is it wired into my data, security, and workflow fabric?”

Snowflake bets that keeping both data and AI execution inside the AI Data Cloud reduces integration friction, avoids data sprawl, and simplifies compliance.

For teams that already trust Snowflake for data sharing, storage, analytics, and governance, and that have the appetite to embed AI deeply in their workflows, this is a compelling offering.

Whether the rest of the market follows remains to be seen.