Looking to build robust, production-ready Node.js apps? Google Cloud provides the ideal infrastructure for taking your Node architectures to the next level.

Node.js is popular for fast, efficient web apps thanks to its async event model. However, realizing its full potential requires an equally high-performing cloud backend. This is where Google Cloud shines as the perfect complement to Node's strengths.

With managed services, autoscaling, and edge caching, Google Cloud helps Node.js apps reach new levels of speed and scalability. Many organizations combine Google Cloud’s capabilities with Node.js Application Development Services to deliver high-performance, scalable applications. This guide provides 10 architectural tips and patterns for building killer Node.js apps on Google's infrastructure.

We'll cover optimizing deployment configurations, improving throughput, ensuring resilience, integrating managed services, and more. These proven methods will elevate your Node stack to enterprise-grade quality.

Let's dive into the 10 killer strategies!

1. Right-size Node VMs

Optimizing the sizing of Node.js virtual machines on Compute Engine is crucial for balancing cost and performance. The main tips are:

Use preemptible VMs (or Spot VMs, their newer version) for fault-tolerant workloads that can survive interruption.

These instances cost much less, but Compute Engine can stop them with about 30 seconds' notice when it needs the capacity elsewhere, so they are a poor fit for serving steady user traffic. That makes them ideal for budget-focused batch jobs or dev/test environments.

Leverage custom machine types to fine-tune the exact number of vCPUs and amount of memory allocated.

This lets you size instances between the predefined machine types to closely match your actual resource needs. Monitor utilization metrics and scale up or down as demand evolves.

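Scale CPU and memory independently, based on monitoring which resource is under the highest load. Usually one of them is the primary bottleneck, and boosting only that resource improves performance at lower cost than moving to a larger machine overall. Keep in mind that a single Node.js process executes JavaScript on one thread, so extra vCPUs do little for JavaScript-heavy workloads unless you run several Node.js processes per VM (for example, with the built-in cluster module).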

Monitor key instance metrics like CPU utilization percentage, network traffic, and memory usage regularly.

A common target is average CPU usage of around 60% to 70% at peak, which leaves headroom for spikes. Set alerts for high thresholds, and analyze trends to right-size over time.
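To make the 60% to 70% guideline concrete, here is a minimal sketch that turns observed peak utilization into a suggested custom machine size. The `suggestSize` helper, its input fields, and the 65% default target are illustrative assumptions rather than a Compute Engine API; the inputs would come from your own monitoring data.

```javascript
// Hypothetical sizing helper (not a Google Cloud API). It suggests a vCPU count
// and memory size that would put the observed peak usage near a target
// utilization, defaulting to 65%, the midpoint of the 60-70% guideline.
function suggestSize({ vcpus, peakCpuUtil, memoryGb, peakMemUtil }, target = 0.65) {
  if (target <= 0 || target > 1) throw new RangeError("target must be in (0, 1]");

  // Work actually done at peak, e.g. 4 vCPUs at 90% busy = 3.6 vCPUs of work.
  const busyVcpus = vcpus * peakCpuUtil;
  const usedMemoryGb = memoryGb * peakMemUtil;

  return {
    // Round up so the suggested size keeps peak utilization at or below target.
    vcpus: Math.max(1, Math.ceil(busyVcpus / target)),
    // Whole gigabytes for simplicity; check your machine series' memory limits.
    memoryGb: Math.max(1, Math.ceil(usedMemoryGb / target)),
  };
}

// A CPU-bound VM: grow vCPUs, shrink memory.
console.log(suggestSize({ vcpus: 4, peakCpuUtil: 0.9, memoryGb: 16, peakMemUtil: 0.3 }));
// → { vcpus: 6, memoryGb: 8 }
```

The example VM is CPU-bound but mostly idle on memory, so the sketch suggests more vCPUs and less memory, the kind of independent adjustment custom machine types allow. Treat the output as a starting point and confirm it against the limits of the machine series you use.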

Taking the time to right-size VMs avoids significant overspending on over-provisioned instances while still providing the necessary performance for smooth end-user experiences.

Right-sizing is an ongoing process because traffic patterns change, but it pays dividends over the long term.

2. Enable Autoscaling

The Compute Engine autoscaler automatically adds or removes VMs in a managed instance group (MIG) based on demand. Key tips:

Define autoscaling policies tailored to how your traffic volume fluctuates. Base them on metrics like requests per second, network usage, CPU utilization, or queue size.

Keep the minimum number of VMs in the group high enough to handle typical daily traffic peaks, so performance stays steady while new instances boot during unusual spikes. Avoid scaling in too aggressively.

Set a reasonable maximum number of VMs to prevent runaway autoscaling costs during traffic spikes, and raise that ceiling conservatively as your observed peaks grow.

Put a load balancer in front of your instance groups to distribute traffic, and let each group autoscale independently based on the traffic it receives.
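Utilization-based autoscaling generally follows a target-tracking idea: size the group so the observed load would sit near your target, then clamp the result to the configured minimum and maximum. The sketch below is a simplified model of that idea, assuming a single utilization metric; it is not the Compute Engine autoscaler's actual algorithm, and `desiredInstances` and `maxScaleInStep` are hypothetical names.

```javascript
// Simplified target-tracking model (not the Compute Engine autoscaler itself).
// current:        VMs currently in the group
// observedUtil:   average utilization across the group, e.g. 0.95 for 95% CPU
// targetUtil:     utilization you want the group to run at, e.g. 0.6
// min / max:      floor for typical traffic, ceiling for cost control
// maxScaleInStep: hypothetical cap on VMs removed per decision
function desiredInstances({ current, observedUtil, targetUtil, min, max, maxScaleInStep = Infinity }) {
  // Total load in "VMs' worth" of work, divided by the load each VM should carry.
  let desired = Math.ceil((current * observedUtil) / targetUtil);

  // Avoid scaling in too aggressively: remove at most maxScaleInStep VMs at once.
  desired = Math.max(desired, current - maxScaleInStep);

  // Never go below the minimum or above the maximum.
  return Math.min(max, Math.max(min, desired));
}

console.log(desiredInstances({ current: 4, observedUtil: 0.95, targetUtil: 0.6, min: 2, max: 10 })); // 7
```

Setting `min` from your typical peaks and keeping `maxScaleInStep` small mirrors the advice above about not scaling in too aggressively. Compute Engine offers comparable knobs in its autoscaling policy (minimum and maximum instances, and scale-in controls), so in practice you configure these rather than code them.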

Well-tuned autoscaling lets your Node.js apps add computing resources as load increases, without manual intervention. This keeps them fast and responsive as your user base grows.

3. Distribute Requests with a Load Balancer

A load balancer is a key way to improve performance and resilience by spreading incoming app traffic across your backend servers. This prevents overloading any single Node.js instance.

First, create a load balancer with Cloud Load Balancing. For web apps this is typically a global external Application Load Balancer, which can front backends in multiple regions; a regional load balancer must be in the same region as its backends. External requests hit the load balancer's IP address.

Next, add the managed instance groups running your Node.js app code to the load balancer's backend service. The load balancer can then distribute traffic across these groups.

An advantage is that you can add VM groups in different zones for redundancy. If one zone goes down, traffic reroutes to another.

You can also define URL map rules to route specific types of requests to particular groups.

For example, you might send requests for the /api path to your API server group, and requests for /static to servers optimized for static asset delivery.

The key benefit of the load balancer is preventing any single Node.js instance from being overwhelmed. Health checks detect servers that crash or stop responding, and the load balancer redirects their traffic to healthy servers. This helps prevent downtime.
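To illustrate what the load balancer does on your behalf, the following sketch models prefix-based routing (similar in spirit to URL map rules) combined with round-robin selection across healthy backends. `PathRouter`, the backend objects, and the `healthy` flag are hypothetical stand-ins for Cloud Load Balancing's backend services and health checks, and real load balancers use configurable balancing modes rather than plain round robin.

```javascript
// Hypothetical in-memory model of what the load balancer does for you:
// prefix-based routing (like URL map rules) plus round-robin across the
// healthy backends of the chosen group. Not the Cloud Load Balancing API.
class PathRouter {
  constructor(routes, defaultGroup) {
    this.routes = routes;             // [{ prefix: "/api", group: [...] }, ...]
    this.defaultGroup = defaultGroup; // used when no prefix matches
    this.counters = new Map();        // group -> requests routed so far
  }

  groupFor(path) {
    // Match whole path segments, so "/apiary" does not match "/api".
    const route = this.routes.find(
      (r) => path === r.prefix || path.startsWith(r.prefix + "/")
    );
    return route ? route.group : this.defaultGroup;
  }

  pickBackend(path) {
    const group = this.groupFor(path);
    // Health checks mark failed backends unhealthy; skip them.
    const healthy = group.filter((backend) => backend.healthy);
    if (healthy.length === 0) throw new Error(`no healthy backends for ${path}`);
    const count = this.counters.get(group) ?? 0;
    this.counters.set(group, count + 1);
    return healthy[count % healthy.length].name;
  }
}

const apiGroup = [{ name: "api-1", healthy: true }, { name: "api-2", healthy: true }];
const staticGroup = [{ name: "static-1", healthy: true }];
const webGroup = [{ name: "web-1", healthy: true }, { name: "web-2", healthy: false }];

const router = new PathRouter(
  [{ prefix: "/api", group: apiGroup }, { prefix: "/static", group: staticGroup }],
  webGroup
);
console.log(router.pickBackend("/api/users"));      // api-1
console.log(router.pickBackend("/api/orders"));     // api-2
console.log(router.pickBackend("/static/app.css")); // static-1
console.log(router.pickBackend("/checkout"));       // web-1 (web-2 is unhealthy)
```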

Behind the load balancer, you can scale each VM group independently; the load balancer routes traffic to each group according to your routing rules and the group's capacity.

Overall, load balancers stop one poor-performing node from affecting the full app. They also allow you to grow your capacity easily.

4. Cache Assets Using a CDN

A content delivery network (CDN) is another useful service for optimizing Node.js app performance. It works by caching static assets globally.

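The simplest option is to serve files like images, CSS, and JS from a Cloud Storage bucket. For edge caching with more control, enable Cloud CDN, either on a backend bucket that points at that Cloud Storage bucket or on the load-balanced backend service in front of your Node.js servers.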

The CDN has edge locations around the world that cache copies of your static assets closer to end users. So when users request those files, they are served from a nearby CDN cache, not your faraway app servers. This results in much faster access.

Set Cache-Control headers (for example, max-age) on your static files to control how long CDN caches keep them before refreshing from the backend. Test different expiration times to balance freshness against cache hit rate.
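One way to apply this is a small policy function that picks a `Cache-Control` value per path. Depending on the cache mode you configure, Cloud CDN can use origin headers such as `public` and `max-age` to decide what to cache and for how long. The file-name fingerprint convention, the extension list, and the one-hour default below are assumptions to adjust for your own build output.

```javascript
// Hypothetical Cache-Control policy for static assets served behind a CDN.
const ONE_YEAR_SECONDS = 365 * 24 * 60 * 60;

function cacheControlFor(path) {
  // Fingerprinted files (hash in the name, e.g. app.3f9a1c2b.js) never change,
  // so edges can keep them for a year; a new build produces a new file name.
  if (/\.[0-9a-f]{8,}\.(js|css|png|jpg|svg|woff2)$/.test(path)) {
    return `public, max-age=${ONE_YEAR_SECONDS}, immutable`;
  }
  // Other static files: cache for an hour; tune this value through testing.
  if (/\.(js|css|png|jpg|svg|woff2|ico)$/.test(path)) {
    return "public, max-age=3600";
  }
  // HTML and dynamic responses: require revalidation so users see fresh content.
  return "no-cache";
}

console.log(cacheControlFor("/static/app.3f9a1c2b.js")); // public, max-age=31536000, immutable
console.log(cacheControlFor("/index.html"));             // no-cache
```

In a plain Node.js `http` handler you would apply it with `res.setHeader("Cache-Control", cacheControlFor(pathname))`, where `pathname` is the request path without its query string, before sending the file.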

The key benefit of a CDN is that it reduces load and traffic on your Node.js app servers. With static content handled separately, your servers can focus on dynamic requests.

CDN caching also improves the performance users perceive, because assets are delivered from a location near them.

Finally, a CDN avoids the congestion that can build up when your own servers must deliver every asset.

For Node.js apps with significant static resources, a CDN is a valuable performance investment.

5. Choose the Optimal Database

Choosing the best managed database for your Node.js application depends on your data patterns and use cases:

For relational data requiring ACID transactions and consistency guarantees, use Cloud SQL. It offers managed MySQL, Postgres, and SQL Server.

For more flexible NoSQL document storage with real-time synchronization across devices, Firestore is ideal. Data is stored in JSON-like documents organized into collections.

If your use case involves massive volumes of analytics data, like log analysis, time series, or IoT sensor streams at terabyte+ scale, Bigtable is a high-performance NoSQL option.

For ultra-fast in-memory caching or real-time analytics, Memorystore offers managed Redis with sub-millisecond latency and high throughput.
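A common way to put an in-memory cache in front of a primary database is the cache-aside pattern: check the cache first, fall back to the database on a miss, then store the result with a time-to-live (TTL). In this sketch a `Map` stands in for Memorystore (Redis) and `loadFromDb` for a Cloud SQL or Firestore query; both are hypothetical placeholders so the example runs without any Google Cloud client library.

```javascript
// Cache-aside sketch. A Map stands in for Memorystore (Redis); loadFromDb is a
// hypothetical async function standing in for a Cloud SQL or Firestore query.
// Unlike Redis, this Map never evicts entries; it only illustrates the read path.
function createCacheAside(loadFromDb, ttlMs, now = () => Date.now()) {
  const cache = new Map(); // key -> { value, expiresAt }

  return async function get(key) {
    const entry = cache.get(key);
    if (entry && entry.expiresAt > now()) {
      return entry.value; // fresh hit: no database round trip
    }
    const value = await loadFromDb(key); // miss or expired entry
    cache.set(key, { value, expiresAt: now() + ttlMs });
    return value;
  };
}

// Example: cache user profiles for 30 seconds.
const getUser = createCacheAside(async (id) => ({ id, name: `user-${id}` }), 30_000);
getUser("42").then((user) => console.log(user.name)); // user-42
```

The `now` parameter exists only so the expiry logic can be tested with a fake clock.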

Evaluating access patterns, transaction needs, and scalability requirements helps determine the optimal Google database for your app's backend, leading to great performance.

6. Use Pub/Sub for Real-Time Messaging

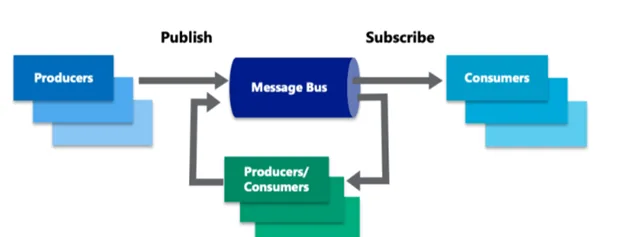

Pub/Sub is Google Cloud's scalable messaging service that lets separate apps and microservices communicate asynchronously in real time:

Applications publish messages to a Pub/Sub topic, and any number of subscribers can receive those updates through subscriptions attached to the topic.

Publishers do not need to know anything about their subscribers. Pub/Sub's decoupling enhances scalability and modularity.

Subscribers receive messages from the topics they care about through their configured subscriptions, either pushed to an HTTPS endpoint or pulled by the subscriber. Delivery is at least once, with retries to handle temporary problems, so the same message can occasionally arrive more than once and handlers should be idempotent.

Pub/Sub buffers and reliably delivers huge volumes of messages and event streams, even during traffic surges and growth, and its global infrastructure scales automatically.

Pub/Sub enables efficient, decoupled communication between discrete services in Node.js microservice architectures.

Compared to direct API calls between Node services, this lightweight messaging approach is more scalable and dependable.

For example, a user management service publishes events when a user is created or edited. The email service subscribes to these events and responds by sending confirmation emails.

The services are decoupled, so the user service does not need to call the email service directly. Pub/Sub allows for straightforward broadcast communication.

Pub/Sub also retains unacknowledged messages (for 7 days by default), so subscribers that are temporarily unavailable can process them when they recover. Even if services fail, this helps prevent data loss.
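Because delivery is at least once, a subscriber such as the email service in the example above should be idempotent. The sketch below models the fan-out and retry behavior in memory so it runs without Google Cloud; `InMemoryTopic` and `createEmailHandler` are hypothetical, and the retry loop is a simplification of Pub/Sub's ack-deadline-based redelivery.

```javascript
// In-memory model of Pub/Sub delivery semantics, NOT the @google-cloud/pubsub
// client: each subscription receives its own copy of every message, and a
// handler that throws is retried, so delivery is at-least-once.
class InMemoryTopic {
  constructor(maxAttempts = 3) {
    this.maxAttempts = maxAttempts;
    this.subscriptions = new Map(); // subscription name -> handler
    this.nextId = 0;
  }

  subscribe(name, handler) {
    this.subscriptions.set(name, handler);
  }

  publish(data) {
    // The publisher knows nothing about who is subscribed.
    const message = { id: String(++this.nextId), data };
    for (const [name, handler] of this.subscriptions) {
      for (let attempt = 1; attempt <= this.maxAttempts; attempt++) {
        try {
          handler(message); // returning normally plays the role of an ack
          break;
        } catch (err) {
          // Throwing plays the role of a nack: the message is redelivered.
          if (attempt === this.maxAttempts) {
            console.error(`${name}: giving up on message ${message.id}: ${err.message}`);
          }
        }
      }
    }
    return message.id;
  }
}

// Hypothetical email-service subscriber. Because a message can arrive more
// than once, it remembers processed IDs so duplicates do not send extra email.
function createEmailHandler(sendEmail) {
  const processed = new Set();
  return (message) => {
    if (processed.has(message.id)) return; // duplicate: acknowledge and skip
    sendEmail(message.data.email);
    processed.add(message.id);
  };
}

const topic = new InMemoryTopic();
topic.subscribe("email-service", createEmailHandler((to) => console.log(`confirmation sent to ${to}`)));
topic.publish({ type: "user.created", email: "ada@example.com" });
```

With the real `@google-cloud/pubsub` client, the publisher would call `topic.publishMessage()` and the subscriber would listen for a subscription's `message` event and call `message.ack()` when done. In production, keep the processed-ID record in shared storage such as a database rather than an in-process `Set`, so deduplication survives restarts and works across replicas.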

Other applications include streaming analytics, IoT device messaging and task distribution.

7. Build Microservices on Kubernetes

Kubernetes, available on Google Cloud as Google Kubernetes Engine (GKE), provides a powerful platform for building Node.js applications as distributed microservices:

Large monolithic Node apps can be broken down into smaller independent services by business domain. For example, a user management service can run separately from an accounts service.

Microservices are individually developed, scaled, and updated. This increases agility and the ability to iterate quickly.

Services call each other over the cluster network, using Kubernetes Services and cluster DNS to find one another. Pub/Sub messaging further decouples cross-service communication.

This microservice architecture improves modularity, flexibility, and resilience. If one service has issues, the rest of the app can keep functioning.

Kubernetes handles deploying containers for each service, networking them together, managing scaling, and orchestrating availability. This automates significant complexity.
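Kubernetes uses liveness and readiness probes to decide when to restart a container and when to send it traffic. The sketch below shows one way a Node.js service might expose both; the `/healthz` and `/readyz` paths, `createProbeHandler`, and the commented-out `myAppHandler` and `connectToDatabase` are hypothetical names, and the probe paths must match whatever you configure in your Deployment.

```javascript
// Hypothetical request handler for a Node.js microservice running on Kubernetes.
// /healthz can back a liveness probe (is the process responsive?) and /readyz a
// readiness probe (can it take traffic yet?). Compatible with http.createServer.
function createProbeHandler(appHandler) {
  let ready = false; // flip to true once startup work (DB connections, etc.) is done

  function handler(req, res) {
    if (req.url === "/healthz") {
      res.writeHead(200);
      res.end("ok");
    } else if (req.url === "/readyz") {
      res.writeHead(ready ? 200 : 503);
      res.end(ready ? "ready" : "starting");
    } else {
      appHandler(req, res);
    }
  }

  return { handler, setReady: (value) => { ready = value; } };
}

// Wiring it up (commented out so the sketch does not start a server):
// const http = require("node:http");
// const probe = createProbeHandler(myAppHandler);
// const server = http.createServer(probe.handler).listen(8080);
// connectToDatabase().then(() => probe.setReady(true));
// // Kubernetes sends SIGTERM before stopping a pod: stop accepting new
// // connections and let in-flight requests finish.
// process.on("SIGTERM", () => server.close());
```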

For large Node apps, microservices running on Kubernetes aid scalability, velocity of change, and uptime.

8. Run Automated Node CI/CD Pipelines

Automating deployments through continuous integration / continuous delivery (CI/CD) improves application reliability and speed of iteration:

Each committed code change triggers an automated build, and the CI pipeline runs test suites to validate it.

On success, CD deploys the latest verified code changes to staging and production environments automatically.

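If tests fail, the pipeline stops before anything is deployed. If a release misbehaves after deployment (for example, error rates spike), an automated rollback reverts to the last known good version.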
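The rollback step can be reduced to a simple gate that compares a new release's early metrics against limits. In this sketch, `postDeployDecision`, the metric names, and the 1% error-rate and 500 ms p95 latency limits are illustrative assumptions; a real pipeline would read the metrics from your monitoring system and trigger the redeploy through its CI/CD tool.

```javascript
// Hypothetical post-deploy gate for a CD pipeline. After a release goes out,
// compare its early metrics with limits and decide whether to keep it or roll
// back to the last known good version. Thresholds are illustrative defaults.
const DEFAULT_LIMITS = { maxErrorRate: 0.01, maxP95LatencyMs: 500 };

function postDeployDecision(release, lastGood, metrics, limits = DEFAULT_LIMITS) {
  const reasons = [];
  if (metrics.errorRate > limits.maxErrorRate) {
    reasons.push(`error rate ${metrics.errorRate} exceeds ${limits.maxErrorRate}`);
  }
  if (metrics.p95LatencyMs > limits.maxP95LatencyMs) {
    reasons.push(`p95 latency ${metrics.p95LatencyMs}ms exceeds ${limits.maxP95LatencyMs}ms`);
  }
  return reasons.length === 0
    ? { action: "keep", version: release, reasons }
    : { action: "rollback", version: lastGood, reasons };
}

const decision = postDeployDecision("v1.4.2", "v1.4.1", { errorRate: 0.05, p95LatencyMs: 320 });
console.log(decision.action, decision.version); // rollback v1.4.1
```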

For Node, pipelines can use tools like Cloud Build, Jenkins, CircleCI, Travis CI, GitHub Actions, and more to automate the workflow.

CI/CD results in much faster and lower-risk application updates. Automation enables continuously integrating improvements.

Developers can focus on coding rather than slowly pushing changes manually. Automation speeds everything up.

Pipelines check every change for regressions quickly, and extensive testing helps keep code production-ready.

Investing in CI/CD discipline catches bugs earlier and accelerates Node app improvement through reliable automation.

9. Monitor App Health

Robust monitoring and alerting provide crucial visibility into production application health to quickly diagnose issues:

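Google Cloud's observability tools (formerly Stackdriver), including Cloud Monitoring, Cloud Logging, and Cloud Trace, bring metrics, events, traces, and logs together in unified dashboards. Enable them for all Node instances; on Compute Engine VMs, install the Ops Agent to collect system metrics and logs.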

Configure alerts on key metrics like error rates, latency, traffic changes, and saturation to notify proactively of potential problems.

Analyze trends in usage, performance, infrastructure saturation, and inter-service communications to identify bottlenecks for optimization.

When incidents do occur, aggregated logs in Cloud Logging help pinpoint the root cause quickly for rapid remediation, which streamlines debugging.
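Structured logs make that root-cause search much easier. The sketch below writes one JSON object per line; on platforms such as GKE, Cloud Run, and Cloud Functions, Cloud Logging parses such lines, using `severity` for the entry's severity and `message` for its text while keeping the other fields queryable. The `log` and `logRequest` helpers and their field names are assumptions, not part of a Google Cloud library.

```javascript
// Minimal structured logger: one JSON object per line on stdout/stderr.
// Cloud Logging reads `severity` and `message` specially when it parses JSON
// lines; the remaining fields stay in the structured payload.
function log(severity, message, fields = {}) {
  const line = JSON.stringify({ ...fields, severity, message });
  if (severity === "ERROR" || severity === "CRITICAL") {
    console.error(line);
  } else {
    console.log(line);
  }
  return line; // returned so callers (and tests) can inspect it
}

// Hypothetical per-request log call: status codes and latency become
// searchable fields that can back log-based metrics and alerts.
function logRequest(route, statusCode, latencyMs) {
  const severity = statusCode >= 500 ? "ERROR" : "INFO";
  return log(severity, `${route} responded ${statusCode}`, { route, statusCode, latencyMs });
}

logRequest("/api/users", 200, 42);
logRequest("/api/orders", 503, 1870);
```

Fields such as `statusCode` and `latencyMs` can then feed log-based metrics and the alerts on error rates and latency described above.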

Without monitoring, application issues easily go unseen until users complain. Monitoring allows for identifying and fixing subtle reliability and performance problems early.

Don't wait for users to report failures. Monitoring helps maintain continuous Node app health and customer experience - a must for production services.

10. Choose Serverless Where Appropriate

For lightweight, event-driven processes, serverless platforms like Cloud Functions avoid server-management overhead:

Code runs in response to event triggers like HTTP requests or Pub/Sub messages. There are no underlying servers to provision and manage.

Instances scale automatically with demand, without complex capacity planning or over-provisioning.

You pay for invocations and the compute time they actually consume rather than for servers running 24/7, so costs track usage.

Integrates natively with other Google Cloud services like Cloud Storage, databases, and analytics, reducing integration complexity.

Efficiently run parallel background processes and workflows that are bursty or sporadic. Serverless simplifies event-driven computing.

For Node, serverless functions work well for background tasks, data processing, lightweight APIs, and more, without the overhead of managing servers.
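As a sketch of an event-driven Node.js function, here is a handler for a Pub/Sub trigger written in the CloudEvents style used by the Functions Framework. The function name, the job payload shape, and the returned summary object are assumptions for illustration; the registration call appears only in a comment so the example runs without installing `@google-cloud/functions-framework`.

```javascript
// Hypothetical Pub/Sub-triggered function written in the CloudEvents style.
// With the Functions Framework you would register it with
//   functions.cloudEvent("processJob", handlePubSubEvent);
// here it stays a plain function so the sketch runs without that package.
function handlePubSubEvent(cloudEvent) {
  // Pub/Sub delivers the message payload base64-encoded.
  const encoded = cloudEvent.data?.message?.data;
  if (!encoded) {
    console.log("Empty message, nothing to do");
    return { processed: false };
  }
  const job = JSON.parse(Buffer.from(encoded, "base64").toString("utf8"));
  // ...the real work would go here (resize an image, write a record, etc.).
  console.log(`Processing job ${job.id}`);
  return { processed: true, jobId: job.id }; // returned only to ease local testing
}

// Local usage: simulate the event the platform would deliver.
const payload = Buffer.from(JSON.stringify({ id: "job-42" })).toString("base64");
handlePubSubEvent({ data: { message: { data: payload } } }); // logs "Processing job job-42"
```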

By leveraging these 10 proven architecture strategies on Google Cloud, you can build Node.js apps that provide elite performance, availability, and scalability.

Optimizing your platform for Node's strengths takes apps to the next level.

Have you used any other Google Cloud tips for Node? Share your architectural wins below!