Your analytics are only as good as the data feeding them.

Today, businesses pull information from dozens, sometimes hundreds, of systems. Raw data rarely arrives in a neat, analysis-ready format.

It’s messy. Inconsistent. Spread across SaaS apps, transactional databases, IoT sensors, and cloud platforms. Left unchecked, that chaos trickles into your dashboards and decision-making.

This is why ETL in data warehousing exists. It’s the behind-the-scenes process that takes scattered, unstructured inputs and turns them into clean, structured, high-quality datasets your teams can actually trust.

In technical terms, ETL (Extract, Transform, Load) is the structured pipeline that:

- Extracts data from multiple, heterogeneous sources.

- Transforms it into a unified format that meets your business rules.

- Loads it into a data warehouse where analytics, BI tools, and AI models can consume it.

Think of it as the plumbing and filtration system for enterprise analytics—without it, insights are either delayed, distorted, or downright dangerous.

In this post, we break down the core ETL concepts in modern data warehousing. We also cover how ETL fits into today’s data lifecycle and hybrid architectures, how ETL differs from ELT, and when each approach makes sense.

Key Takeaways

- ETL (Extract, Transform, Load) converts raw, scattered data into clean, analytics-ready datasets.

- It ensures data quality, consistency, and reliability across diverse systems.

- Modern ETL enables scalability, automation, and adaptability for BI, AI, and compliance.

- ETL fits traditional, high-compliance setups; ELT suits cloud-native warehouses like Snowflake and BigQuery.

- Tools like Databricks, Fivetran, and Airbyte are modernizing pipelines.

- Generative AI is driving self-healing, automated ETL workflows for faster, smarter data operations.

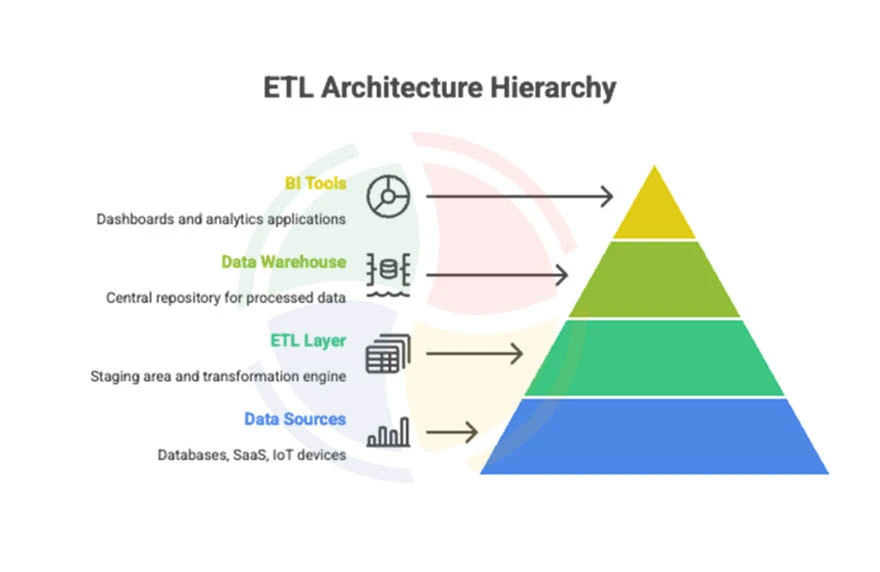

Core ETL Concepts in Data Warehousing

ETL is a quality assurance and readiness pipeline for analytics. In the context of data warehousing, ETL ensures that the information feeding your BI dashboards, machine learning models, and operational systems is consistent, accurate, and aligned with business needs.

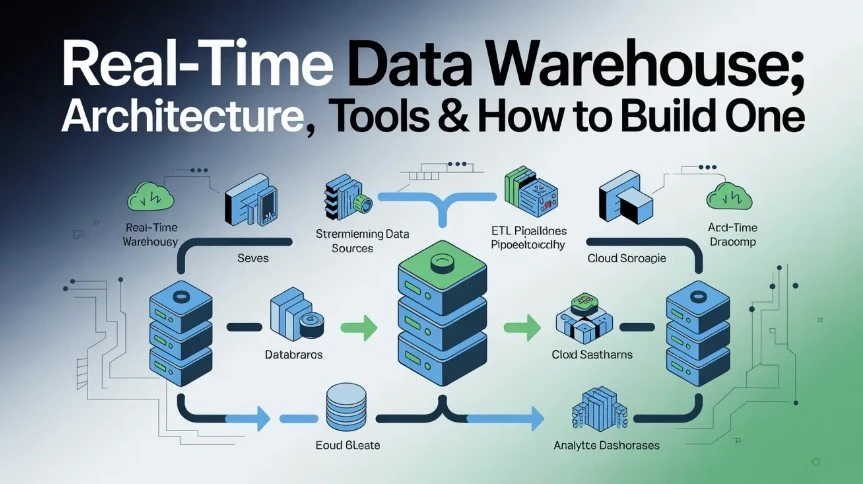

ETL in the Data Lifecycle

Data doesn’t exist in isolation; it flows through stages.

A typical enterprise data lifecycle looks like this:

Ingestion → Processing → Warehousing → Analytics → Action

ETL sits squarely between ingestion and warehousing, acting as the translator, cleaner, and organizer of everything that enters your central repository. Without ETL, a data warehouse is just a storage unit; with ETL, it becomes a trusted source of truth.

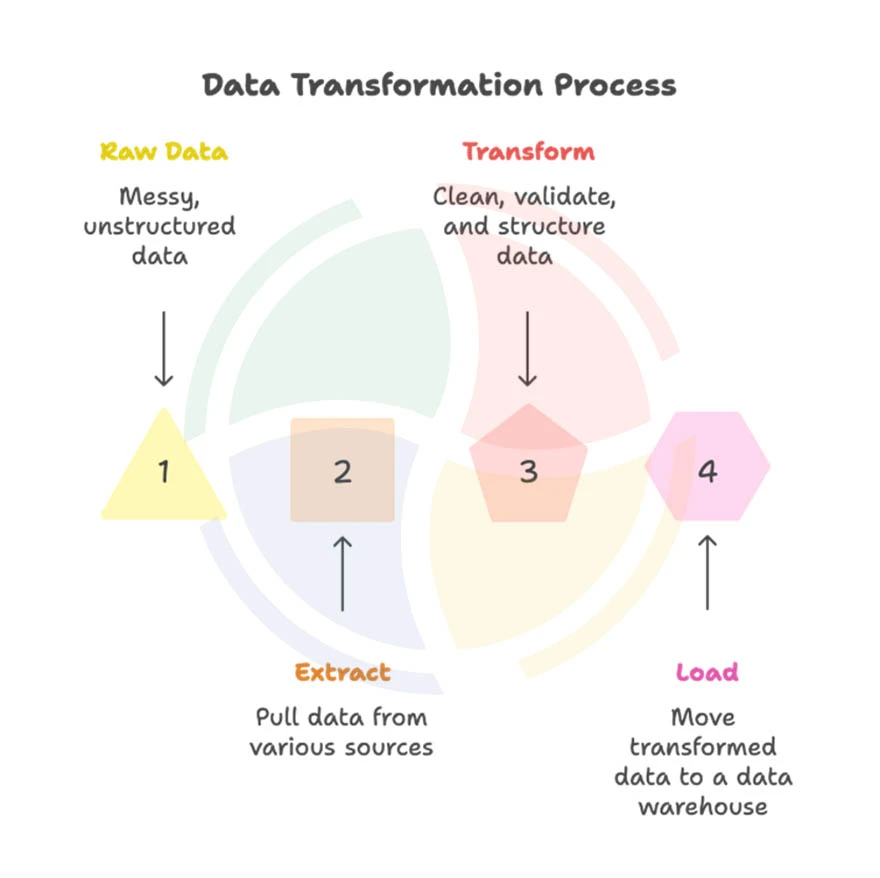

What is the ETL Process in Data Warehousing?

While the acronym is short, the process is anything but simple. Each stage (Extract, Transform, Load) plays a distinct, non-negotiable role.

1. Extraction: Pulling Data from Multiple Sources

- Purpose: Gather data from various systems—ERP platforms, CRM tools, web applications, IoT devices, or cloud services.

- Key Operations: Connecting to APIs, reading from transactional databases, parsing log files, or ingesting streaming data.

- Example: A retail chain pulling sales data from POS systems, customer data from a CRM, and inventory data from an ERP for consolidated reporting.
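The extraction step above can be sketched in a few lines of Python. This is a minimal illustration, not a production connector: the CSV export, the JSON payload, the `inventory` table, and all function names are hypothetical stand-ins for a POS system, a CRM API, and an ERP database.

```python
import csv
import io
import json
import sqlite3

# Hypothetical sample payloads standing in for real source systems.
POS_CSV = "order_id,store,amount\n1001,NYC,25.50\n1002,LA,13.00\n"
CRM_JSON = '[{"customer_id": 7, "name": "Ada Lopez"}, {"customer_id": 8, "name": "Raj Patel"}]'


def extract_pos(csv_text):
    """Parse a POS CSV export into a list of dicts (values stay as strings)."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def extract_crm(json_text):
    """Parse a CRM API response body (a JSON array) into a list of dicts."""
    return json.loads(json_text)


def extract_erp(conn):
    """Read inventory rows from an ERP database table."""
    conn.row_factory = sqlite3.Row
    rows = conn.execute("SELECT sku, on_hand FROM inventory ORDER BY sku").fetchall()
    return [dict(row) for row in rows]


def make_demo_erp():
    """Build an in-memory SQLite database that plays the role of the ERP."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE inventory (sku TEXT, on_hand INTEGER)")
    conn.executemany("INSERT INTO inventory VALUES (?, ?)", [("A-1", 40), ("B-2", 0)])
    return conn


# Each source keeps its own shape at this stage; unifying them is the job of Transform.
raw = {
    "pos": extract_pos(POS_CSV),
    "crm": extract_crm(CRM_JSON),
    "erp": extract_erp(make_demo_erp()),
}
```

Note that extraction deliberately leaves values untouched (the POS amounts are still strings), so any cleanup stays in one place later in the pipeline.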

2. Transformation: Converting Data into Suitable Formats

- Purpose: Clean, standardise, and reshape raw data to match the warehouse schema and business rules.

- Key Operations:

  - Data cleaning: Removing duplicates, correcting errors, and filling in missing values.

  - Schema mapping: Aligning data fields to match warehouse tables.

  - Aggregations: Summarising transactional records for faster queries.

  - Business rule application: e.g., currency conversion or tax calculations.

- Example: Transforming multi-currency sales records into a single reporting currency for global performance analysis.
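The transformation operations listed above might look like this in plain Python. It is a sketch under stated assumptions: the exchange rates are fixed, made-up values, and the field names in `FIELD_MAP` and the sample records are hypothetical.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical fixed exchange rates to USD; a real pipeline would look these up.
RATES_TO_USD = {"USD": Decimal("1.00"), "EUR": Decimal("1.10"), "GBP": Decimal("1.25")}

# Schema mapping: source field name -> warehouse column name.
FIELD_MAP = {"id": "order_id", "amt": "amount", "cur": "currency", "region": "region"}

raw_sales = [
    {"id": "1", "amt": "100.00", "cur": "EUR", "region": "EMEA"},
    {"id": "1", "amt": "100.00", "cur": "EUR", "region": "EMEA"},  # duplicate
    {"id": "2", "amt": "80.00", "cur": "GBP", "region": None},     # missing region
    {"id": "3", "amt": "50.00", "cur": "USD", "region": "AMER"},
]


def transform(records):
    """Clean, map, and convert raw sales records to USD."""
    seen = set()
    cleaned = []
    for rec in records:
        if rec["id"] in seen:  # data cleaning: drop duplicate order ids
            continue
        seen.add(rec["id"])
        row = {FIELD_MAP[k]: v for k, v in rec.items()}  # schema mapping
        row["region"] = row["region"] or "UNKNOWN"       # fill missing values
        rate = RATES_TO_USD[row["currency"]]             # business rule: currency conversion
        row["amount_usd"] = (Decimal(row["amount"]) * rate).quantize(Decimal("0.01"))
        cleaned.append(row)
    return cleaned


def aggregate_by_region(rows):
    """Aggregation: total USD sales per region."""
    totals = defaultdict(Decimal)
    for row in rows:
        totals[row["region"]] += row["amount_usd"]
    return dict(totals)


sales = transform(raw_sales)
totals = aggregate_by_region(sales)
```

`Decimal` is used instead of `float` so that monetary amounts are not subject to binary rounding error.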

3. Loading: Ingesting Processed Data into the Warehouse

- Purpose: Move the transformed data into the warehouse environment so it’s ready for query and analysis.

- Modes:

  - Batch loading: Periodic updates, ideal for end-of-day reporting.

  - Real-time streaming: Continuous updates, essential for live dashboards and operational decision-making.

- Example: Loading cleaned sales, inventory, and customer datasets into Snowflake to be used by Tableau dashboards.
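A batch load can be sketched as follows. SQLite is used here only as a local stand-in for the warehouse; a platform such as Snowflake has its own connector and bulk-loading mechanisms. The `fact_sales` table and its columns are hypothetical.

```python
import sqlite3


def load_batch(conn, rows):
    """Batch-load rows into the target table; re-running the same batch is safe."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS fact_sales ("
        "order_id TEXT PRIMARY KEY, region TEXT, amount_usd REAL)"
    )
    with conn:  # one transaction: the whole batch commits or rolls back
        conn.executemany(
            "INSERT OR REPLACE INTO fact_sales (order_id, region, amount_usd) "
            "VALUES (:order_id, :region, :amount_usd)",
            rows,
        )
    return conn.execute("SELECT COUNT(*) FROM fact_sales").fetchone()[0]


warehouse = sqlite3.connect(":memory:")
batch = [
    {"order_id": "1", "region": "EMEA", "amount_usd": 110.0},
    {"order_id": "2", "region": "AMER", "amount_usd": 50.0},
]
first_count = load_batch(warehouse, batch)
second_count = load_batch(warehouse, batch)  # replaying the batch adds no rows
```

Keying the load on `order_id` makes it idempotent, so a failed nightly job can simply be rerun without creating duplicates.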

Schema Mapping, Staging Areas, and Processing Modes

A strong ETL design typically includes a staging area: a temporary workspace where data lands before transformation. This allows for quality checks, reprocessing, and error handling without touching the source or warehouse directly.
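One way to picture a staging area is as a landing table that sits in front of the real target. The sketch below assumes three hypothetical SQLite tables (`stg_orders`, `orders`, `rejected_orders`) and two illustrative quality checks; real pipelines will have their own rules.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE stg_orders (order_id TEXT, amount TEXT);      -- raw landing zone
    CREATE TABLE orders (order_id TEXT PRIMARY KEY, amount REAL);
    CREATE TABLE rejected_orders (order_id TEXT, amount TEXT, reason TEXT);
    """
)

incoming = [("A1", "19.99"), ("A2", "not-a-number"), (None, "5.00")]
conn.executemany("INSERT INTO stg_orders VALUES (?, ?)", incoming)


def check(order_id, amount):
    """Return a rejection reason, or None when the row passes all checks."""
    if not order_id:
        return "missing order_id"
    try:
        float(amount)
    except (TypeError, ValueError):
        return "amount is not numeric"
    return None


def promote(conn):
    """Move valid staged rows to the target table and quarantine the rest."""
    staged = conn.execute("SELECT order_id, amount FROM stg_orders").fetchall()
    with conn:
        for order_id, amount in staged:
            reason = check(order_id, amount)
            if reason is None:
                conn.execute("INSERT INTO orders VALUES (?, ?)", (order_id, float(amount)))
            else:
                conn.execute(
                    "INSERT INTO rejected_orders VALUES (?, ?, ?)", (order_id, amount, reason)
                )
        conn.execute("DELETE FROM stg_orders")  # staging is temporary


promote(conn)
loaded = conn.execute("SELECT * FROM orders").fetchall()
rejected = conn.execute("SELECT reason FROM rejected_orders ORDER BY reason").fetchall()
```

Bad rows end up in `rejected_orders` with a reason attached, so they can be fixed and reprocessed without rereading the source system.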

You’ll also encounter batch vs real-time ETL:

- Batch: High-volume, scheduled updates (nightly, hourly).

- Real-time: Event-driven pipelines using technologies like Kafka or AWS Kinesis.

Choosing the right mix depends on your business’s latency tolerance, compliance requirements, and infrastructure.
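The difference between the two modes is mostly about when the transformation runs, not what it does. In the sketch below, an in-memory `queue.Queue` stands in for a Kafka topic or Kinesis stream, and the `enrich` function and sample events are hypothetical.

```python
import queue


def enrich(event):
    """Shared transformation used by both processing modes."""
    return {**event, "total": event["qty"] * event["unit_price"]}


def run_batch(events):
    """Batch mode: process an accumulated set of records in one scheduled run."""
    return [enrich(e) for e in events]


def run_streaming(source, sink):
    """Event-driven mode: handle each record as soon as it arrives."""
    while True:
        event = source.get()
        if event is None:  # sentinel marking the end of this demo stream
            break
        sink.append(enrich(event))


events = [
    {"sku": "A-1", "qty": 2, "unit_price": 5},
    {"sku": "B-2", "qty": 1, "unit_price": 12},
]

batch_result = run_batch(events)

stream = queue.Queue()  # in-memory stand-in for a Kafka topic or Kinesis stream
for e in events:
    stream.put(e)
stream.put(None)
stream_result = []
run_streaming(stream, stream_result)
```

Keeping the transformation in one shared function means both modes produce identical results, which makes it easier to mix them within the same architecture.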

Importance of ETL in Data Warehousing

Without ETL, a data warehouse is little more than an empty shell. The warehouse’s true value emerges only when its data is complete, accurate, and ready for analysis, and ETL is the process that makes that happen.

1. Ensuring Data Consistency and Quality

Data from different sources rarely matches in structure or standards. One of your sales databases might store dates as MM/DD/YYYY, another as YYYY-MM-DD. Customer names could have inconsistent casing or trailing spaces.

ETL resolves these issues by applying standardization rules during the transformation stage, ensuring that what lands in the warehouse is uniform and reliable.
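As a rough sketch, standardization rules for the date and name issues described above could be written like this. The two accepted date formats and the sample records are assumptions for illustration.

```python
from datetime import datetime

# Date formats this sketch accepts; extend the tuple for other sources.
KNOWN_DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def normalize_date(value):
    """Return the date as an ISO 8601 string (YYYY-MM-DD)."""
    for fmt in KNOWN_DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {value!r}")


def normalize_name(value):
    """Trim and collapse whitespace, then apply title casing."""
    return " ".join(value.split()).title()


records = [
    {"customer": "  jane DOE ", "order_date": "03/15/2024"},
    {"customer": "Jane Doe", "order_date": "2024-03-15"},
]
standardized = [
    {"customer": normalize_name(r["customer"]), "order_date": normalize_date(r["order_date"])}
    for r in records
]
```

After standardization both records are identical, which is what lets later steps deduplicate and join them reliably. Simple title casing will mishandle some names (for example "McDonald"), so real rules usually need exceptions.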

2. Enabling a Single Source of Truth

Businesses often operate with data silos: marketing, finance, operations, and customer support each maintain their own datasets. ETL merges these disparate streams into one integrated repository. This results in consistent metrics across departments, reduced reporting conflicts, and improved decision-making.

3. Supporting Business Intelligence & Advanced Analytics

Analytics platforms, machine learning models, and dashboard tools all rely on structured, cleaned data. By feeding high-quality inputs into the warehouse, ETL lays the groundwork for faster queries, more accurate models, and deeper insights.

4. Streamlining Compliance and Audit Readiness

With regulations like GDPR, HIPAA, and SOX, businesses must prove data accuracy, completeness, and security. ETL pipelines can embed data validation, masking, and lineage tracking, ensuring audit trails are intact and compliance risks are minimised.
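Validation, masking, and lineage tracking can be embedded as ordinary pipeline steps. The sketch below is illustrative only: the salt, the lineage column names, and the sample record are hypothetical, and whether salted hashing is sufficient for a given regulation is a question for your compliance team.

```python
import hashlib
from datetime import datetime, timezone


def mask_email(email, salt="demo-salt"):
    """Replace an email with a salted SHA-256 digest so it can still be joined on."""
    digest = hashlib.sha256((salt + email.lower()).encode("utf-8")).hexdigest()
    return digest[:16]


def with_lineage(row, source, pipeline_run):
    """Attach lineage columns recording where and when the row was processed."""
    return {
        **row,
        "_source": source,
        "_pipeline_run": pipeline_run,
        "_processed_at": datetime.now(timezone.utc).isoformat(),
    }


def process(row, source, pipeline_run):
    """Validate, mask, and tag one record."""
    if "@" not in row["email"]:
        raise ValueError(f"invalid email for patient {row['patient_id']}")
    masked = {**row, "email": mask_email(row["email"])}
    return with_lineage(masked, source, pipeline_run)


out = process(
    {"patient_id": 42, "email": "Pat@Example.com"},
    source="crm.contacts",
    pipeline_run="run-001",
)
```

Because the lineage columns travel with every row, an auditor can trace any warehouse record back to its source system and the pipeline run that produced it.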

5. Improving Performance and Scalability

Instead of overburdening operational systems with reporting queries, ETL moves the heavy lifting to a warehouse optimized for analytics. This separation enhances application performance while allowing the warehouse to scale as data volumes grow. When you partner with a professional data warehouse consulting company, the experts take care of all these things for your ETL ecosystem.

Benefits & Challenges of ETL

When viewed through the lens of data warehousing, ETL isn’t just a technical process—it’s the backbone of a business’s intelligence ecosystem. It ensures the right data is available, in the right format, at the right time.

Here are a few benefits and challenges of an ETL pipeline/system:

| Benefits | Description |
| --- | --- |
| Scalability | Cloud-native ETL solutions scale automatically to meet workload demands. |
| Repeatability | Automated workflows ensure consistent, reliable data processing. |
| Adaptability | Flexible transformation rules help ETL evolve with business and regulatory changes. |

| Challenges | How ETL Handles Them |
| --- | --- |
| Managing Heterogeneous Data Sources | ETL unifies data from ERP systems, IoT devices, and cloud apps into a common, analysable structure. |
| Handling High Data Volumes & Complex Pipelines | ETL workflows process large-scale data efficiently while maintaining performance and reliability. |

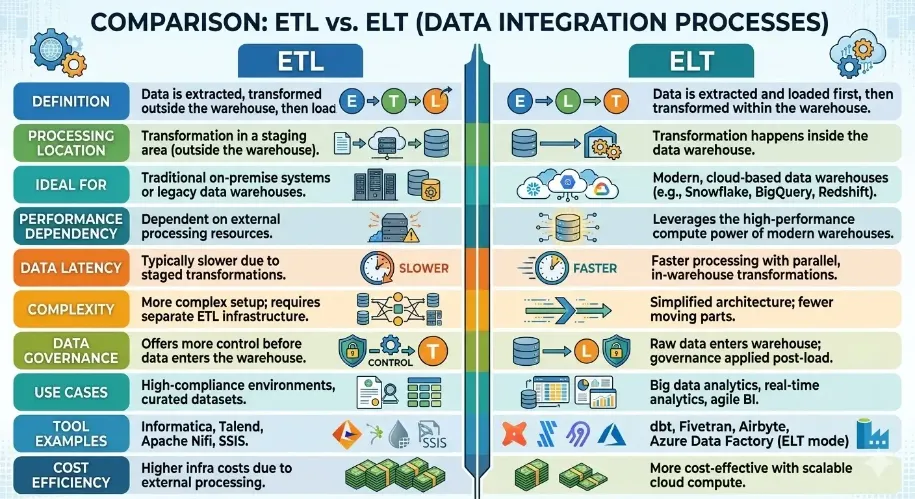

Difference Between ETL and ELT in Data Warehousing

While ETL has been the backbone of traditional data warehousing for decades, the rise of cloud-native platforms such as Snowflake, BigQuery, and Redshift has accelerated the adoption of ELT (Extract, Load, Transform).

Before you choose an approach for your architecture, you need to understand the distinction between the two.

ELT flips the classic ETL sequence: instead of transforming data before loading, it loads raw data directly into the warehouse and applies transformations inside it.

This shift is particularly useful in modern, cloud-based warehouses that offer scalable compute power for parallel processing.
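The ELT ordering can be sketched with SQLite standing in for a cloud warehouse. The raw records are loaded as-is, and the cleanup and aggregation then run as SQL inside the database. Table names and sample rows are hypothetical.

```python
import sqlite3

warehouse = sqlite3.connect(":memory:")  # stand-in for a cloud warehouse

# Load: raw records land untouched, including messy casing and text amounts.
warehouse.execute("CREATE TABLE raw_orders (order_id TEXT, country TEXT, amount TEXT)")
warehouse.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", " us ", "10.50"), ("2", "US", "4.50"), ("3", "de", "7.00")],
)

# Transform: runs inside the warehouse, using its SQL engine.
warehouse.execute(
    """
    CREATE TABLE orders_by_country AS
    SELECT UPPER(TRIM(country)) AS country,
           SUM(CAST(amount AS REAL)) AS total_amount
    FROM raw_orders
    GROUP BY UPPER(TRIM(country))
    """
)

result = warehouse.execute(
    "SELECT country, total_amount FROM orders_by_country ORDER BY country"
).fetchall()
raw_count = warehouse.execute("SELECT COUNT(*) FROM raw_orders").fetchone()[0]
```

The raw table is still available after the transformation, so new models can be built from the same data later without re-extracting it from the source.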

When to Choose ETL vs. ELT:

- ETL is better suited for traditional on-premises systems, high-compliance environments, or where curated datasets must be validated before entering the warehouse.

- ELT thrives in cloud-native, Big Data, and agile Power BI use cases where speed and scalability are key.

Key Tools Powering the ETL Process

The ETL ecosystem has evolved from script-heavy, manual workflows to highly automated, cloud-native platforms.

Modern tools not only handle the mechanics of extract, transform, and load but also embed intelligence. They’re often powered by generative AI to reduce engineering overhead, improve data accuracy, and accelerate time-to-insight.

1. Snowflake – Cloud Data Warehouse with Generative AI Built-in

Snowflake’s Data Cloud integrates Snowpark and Cortex AI, enabling developers to embed transformations and AI-powered insights directly in SQL or Python pipelines.

Generative AI capabilities help in automatically generating SQL queries, summarising datasets, and even suggesting transformations based on schema detection—cutting down manual data preparation effort.

Impact:

- Eliminates complex ETL orchestration for analytics-ready data.

- Speeds up BI and AI model deployment with in-warehouse transformation.

2. Databricks – Delta Live Tables for Streaming & Batch

Built on the lakehouse architecture, Databricks’ Delta Live Tables automate pipeline creation for both real-time and batch data. It supports declarative ETL—developers simply define what transformations to perform, and the platform optimises how they are executed.
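To make the declarative idea concrete, here is a toy illustration in plain Python. It is not the Delta Live Tables API: the `table` decorator, the `TABLES` registry, and `run_pipeline` are hypothetical names invented for this sketch. Each function declares what a table contains and what it depends on, and a small runner works out the execution order.

```python
from graphlib import TopologicalSorter

TABLES = {}  # table name -> (dependencies, function); a hypothetical registry


def table(name, depends_on=()):
    """Register a function as the definition of a table."""
    def register(func):
        TABLES[name] = (tuple(depends_on), func)
        return func
    return register


@table("raw_orders")
def raw_orders():
    return [{"id": 1, "amount": 20}, {"id": 2, "amount": -5}, {"id": 3, "amount": 30}]


# Declared out of order on purpose: the runner, not the author, decides the sequence.
@table("order_totals", depends_on=["valid_orders"])
def order_totals(valid_orders):
    return {"count": len(valid_orders), "revenue": sum(o["amount"] for o in valid_orders)}


@table("valid_orders", depends_on=["raw_orders"])
def valid_orders(raw_orders):
    return [o for o in raw_orders if o["amount"] > 0]


def run_pipeline():
    """Work out execution order from declared dependencies, then build each table."""
    graph = {name: set(deps) for name, (deps, _) in TABLES.items()}
    order = list(TopologicalSorter(graph).static_order())
    results = {}
    for name in order:
        deps, func = TABLES[name]
        results[name] = func(*(results[d] for d in deps))
    return order, results


order, results = run_pipeline()
```

A real platform adds much more on top of this (incremental processing, scaling, data quality enforcement), but the division of labour is the same: developers state dependencies and logic, and the engine plans execution.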

Impact:

- Unified platform for big data, AI/ML, and ETL.

- Strong support for schema evolution, data quality checks, and auto-scaling.

3. Fivetran – Fully Managed Pipelines

Fivetran automates data ingestion from 400+ sources, handling schema changes seamlessly without developer intervention. It focuses on ELT for cloud data warehouses, but paired with transformation tools like dbt, it covers full ETL needs.

Impact:

- Zero-maintenance ingestion with rapid connector deployment.

- Ensures data freshness for near real-time analytics.

4. Airbyte – Open Source & Modular

Airbyte offers both managed cloud and open-source ETL, giving teams flexibility to customise pipelines while still benefiting from pre-built connectors. It’s growing in AI integration, including generative mapping recommendations for transformation logic.

Impact:

- Lower cost for teams with engineering capacity.

- Flexible deployment in hybrid or private environments.

5. Tools with Built-in Generative Capabilities

Several ETL platforms are adding natural language interfaces to simplify pipeline creation.

Imagine describing your data pipeline in plain English and having the platform generate and schedule it automatically:

“Ingest Salesforce leads, standardise country codes, join with CRM orders, and push to BigQuery every hour.”

Tools like Hevo Data, Matillion, and Informatica CLAIRE are already experimenting with this approach.

Impact:

- Reduces technical barriers for non-engineering teams.

- Boosts productivity by auto-generating transformation scripts and quality checks.

Turn Your Data Warehouse into a Growth Engine with Next-Gen ETL

ETL has evolved far beyond its traditional role as a backend utility. Today, it’s the foundation of reliable analytics, timely insights, and scalable decision-making. Modern businesses cannot afford brittle pipelines or delayed transformations, especially when real-time agility can be the difference between leading a market and lagging behind.

Generative AI is now pushing ETL’s boundaries, enabling self-healing data pipelines, automated anomaly detection, and adaptive transformation logic that learns from historical trends.

This means fewer issues, faster onboarding of new data sources, and higher-quality outputs with less manual intervention. For organizations aiming to future-proof their data ecosystem, now is the moment to modernize.

Whether you’re building from scratch or optimizing an existing setup, pair your ETL strategy with AI-driven capabilities. Doing so can improve both productivity and data integrity.

Aegis Softtech helps enterprises design, deploy, and scale ETL workflows that meet today’s demands and stay resilient to tomorrow’s challenges.

Our experts combine deep technical skill with industry insight to deliver data warehousing solutions that are functional and strategically built to give your business a competitive edge.

FAQs

What are the 5 steps of ETL?

While ETL traditionally stands for Extract, Transform, Load, the process often expands into five steps:

- Extract: Pulling raw data from multiple sources.

- Cleanse: Removing errors, duplicates, and inconsistencies.

- Transform: Structuring, aggregating, or enriching data for analytics.

- Load: Storing processed data into a target warehouse or data lake.

- Validate: Ensuring data accuracy, completeness, and readiness for use.
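The final Validate step is the one most often skipped, so here is a minimal sketch of what post-load checks might look like. The `validate_load` function, the `sales` table, and the two checks (row count reconciliation and null detection) are illustrative assumptions.

```python
import sqlite3


def validate_load(conn, table, expected_rows, required_columns):
    """Run post-load checks and return a list of human-readable failures."""
    # Identifiers cannot be bound as SQL parameters, so table and column
    # names must come from trusted pipeline configuration, never user input.
    failures = []
    actual = conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
    if actual != expected_rows:
        failures.append(f"row count {actual} != expected {expected_rows}")
    for column in required_columns:
        nulls = conn.execute(
            f"SELECT COUNT(*) FROM {table} WHERE {column} IS NULL"
        ).fetchone()[0]
        if nulls:
            failures.append(f"{nulls} null value(s) in {column}")
    return failures


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (order_id INTEGER, region TEXT)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", [(1, "EMEA"), (2, None)])

ok_counts = validate_load(conn, "sales", expected_rows=2, required_columns=["order_id"])
problems = validate_load(conn, "sales", expected_rows=3, required_columns=["order_id", "region"])
```

An empty list means the load passed; anything else can be logged or used to fail the pipeline run before downstream dashboards pick up the data.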

What is an ETL example?

A typical ETL example is pulling sales data from a CRM (e.g., Salesforce), cleansing and enriching it with marketing data, then loading it into a warehouse like Snowflake for analysis and dashboard reporting.

Is ETL a coding language?

No, ETL is not a programming language. It’s a data integration process. However, ETL workflows can be created using code (e.g., Python, SQL, Java) or no-code/low-code ETL tools like Fivetran, Talend, or Informatica.

What is the best ETL tool?

The “best” tool depends on your data environment, budget, and scalability needs. Popular choices include:

- Fivetran and Airbyte for managed, plug-and-play pipelines.

- Databricks for advanced transformation with Delta Live Tables.

- Snowflake for cloud-native ELT and integrated generative AI capabilities.

- Informatica and Talend for enterprise-grade ETL orchestration.