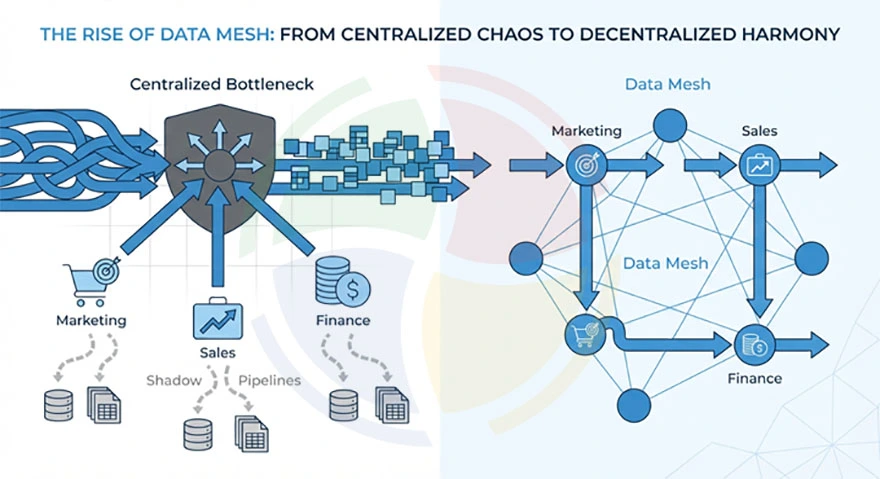

Somewhere in your organization right now, a marketing analyst is waiting three months for a dataset that should take three days. A data engineer who has never seen a customer is building a customer segmentation pipeline. A domain expert who actually understands the business logic has no say in how their data gets modeled or stored.

This is how central data platforms die.

Data mesh came from companies that got tired of watching their data teams collapse under their own centralization. So they did something radically simple: they gave data ownership back to the people who know what the data means. Marketing now owns marketing data, and supply chain owns logistics data.

In this blog post, we’ll break down why data mesh emerged, how it differs from data lakes and data fabric, where it fits, and how to implement it. Let’s get started!

Key Takeaways

- The problem: Centralized data lakes and warehouses created bottlenecks, slow delivery, shadow pipelines, and governance gaps that worsened as organizations scaled.

- The solution: Data mesh decentralizes ownership to domain teams while providing shared infrastructure and federated governance to maintain interoperability.

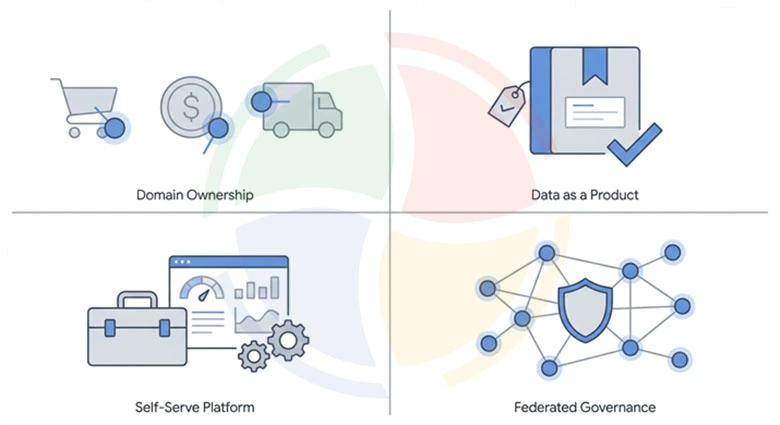

- The four pillars: Domain-oriented ownership, data as a product, self-serve platform, and federated governance work together to make decentralization sustainable.

- The right fit: Large, domain-rich enterprises with mature engineering cultures benefit most, while startups and small teams with limited data complexity should avoid the overhead.

- The implementation path: Start by defining domain boundaries, and move on to establishing product guidelines, building a self-serve platform layer, and implementing automated governance that scales without bottlenecks.

What Led to the Rise of Data Mesh?

Centralized data architectures worked well when data volumes were manageable and organizational structures were simpler. But as enterprises scaled, these models began to buckle under their own weight.

Several patterns emerged that signaled the breaking point of centralized approaches:

- Backlogs and bottlenecks in central data teams: Every data request flowed through the same team, causing queues to grow longer and prioritization to become political.

- Slow analytics delivery: The lag between business questions and data-driven answers widened significantly. Central teams lacked domain context, which led to miscommunication, rework, and widespread frustration across departments.

- Shadow data pipelines inside departments: Business units grew frustrated by wait times and started building their own pipelines. Marketing had its own data warehouse, sales maintained separate spreadsheets, and finance used a different set of numbers entirely.

- Governance gaps and duplication of effort: Without coordination, organizations ended up with multiple versions of the same metrics. Customer counts varied across reports, revenue definitions diverged, and trust in data eroded.

These big data integration challenges became the norm for organizations operating at scale. The central team model, designed to create order, produced the opposite outcome. Data mesh emerged as a direct response to this organizational dysfunction.

What is Data Mesh?

Data mesh is a sociotechnical approach to enterprise data strategy that decentralizes data ownership to domain teams while establishing a shared infrastructure and governance framework.

Introduced by Zhamak Dehghani in 2019, data mesh treats data as a product and assigns accountability to the teams that generate and understand it best. The architecture moves away from centralized data lakes controlled by a single team, and toward a distributed model where domains publish, maintain, and govern their own data products.

Core Principles of Data Mesh

Data mesh architecture rests on four foundational principles that work together to enable decentralized, scalable data management.

1. Domain-Oriented Data Ownership

In traditional architectures, a central IT or data engineering team owns all data assets. In a data mesh, domain-oriented data ownership shifts accountability to the teams that produce the data and understand its business context.

The sales domain owns sales data. The logistics domain owns shipment data. The finance domain owns transaction data. Each domain team becomes responsible for the quality, availability, and discoverability of the data they generate.

2. Data as a Product

The data as a product principle treats datasets with the same rigor applied to customer-facing software products. Data products have clear ownership, defined consumers, service level agreements, documentation, and quality metrics.

This means that every dataset must meet standards for discoverability, addressability, trustworthiness, and interoperability. Data owners think about their consumers and design data products that are easy to find, understand, and use.

3. Self-Serve Data Platform

A self-serve data platform provides domain teams with the infrastructure and tooling they need to build, deploy, and manage data products without deep platform expertise.

This platform abstracts away the complexity of storage, compute, security, and governance. Domain teams focus on their data logic and business rules while the platform handles provisioning, scaling, monitoring, and compliance enforcement.

The goal is to reduce the cognitive load on domain teams so they can deliver value faster. Without a self-serve platform, decentralization creates chaos. With it, decentralization creates agility.

4. Federated Governance

Federated data governance balances autonomy with interoperability. Domains operate independently, but they follow shared standards that ensure data can be discovered, combined, and trusted across organizational boundaries.

This includes standardized metadata schemas, common data quality metrics, consistent security policies, and unified access controls. Governance becomes a collaborative function where domains contribute to and comply with shared policies.

Data Mesh vs. Data Lake vs. Data Fabric

Understanding the differences between these architectures helps clarify when each approach makes sense. The following comparison highlights the key distinctions:

| Aspect | Data Lake | Data Fabric | Data Mesh |
| --- | --- | --- | --- |
| Architecture Style | Centralized repository | Unified integration layer | Distributed, domain-oriented |
| Data Ownership | Central IT team | Central IT team | Domain teams |
| Governance Model | Centralized | Centralized with automation | Federated |
| Primary Focus | Storage and cost efficiency | Integration and connectivity | Organizational scalability |
| Technology Emphasis | Storage infrastructure | Metadata and AI-driven integration | Platform and product thinking |
| Scalability Approach | Scale storage capacity | Scale integration points | Scale through domain autonomy |

Key Drivers for the Rise of Data Mesh

The rise of data mesh did not happen in a vacuum. Specific organizational and technological pressures pushed enterprises toward this paradigm.

- Scalability issues: Central data teams cannot scale linearly with organizational growth

- Need for agility: Waiting weeks for new datasets is no longer acceptable in fast-moving markets

- Democratization of data: Centralized gatekeeping creates friction that slows decision-making

- Product thinking: Data requires the same accountability and consumer focus applied to software products

- Breaking down silos: Central teams become single points of failure and leave the rest of the organization disengaged from its own data

Practical Use Cases of Data Mesh

Organizations across industries have adopted data mesh to address their specific challenges.

Retail

Large retailers operate complex supply chains with distinct domains for product catalog, pricing, inventory, customer experience, and logistics.

Each domain generates and consumes data at different cadences. A data mesh allows the pricing team to own and publish pricing data products while the inventory team manages stock levels independently. Cross-domain analytics happen through well-defined interfaces.

Banking and Financial Services

Financial institutions manage risk, fraud, customer, and transaction data across multiple business lines. Regulatory requirements demand clear data lineage and accountability.

A data mesh structure assigns risk data ownership to risk teams, fraud data to fraud teams, and customer data to customer analytics teams. Federated data governance ensures compliance without centralizing control.

Healthcare

Patient data, clinical data, operational data, and research data each have distinct privacy requirements and domain expertise.

A data mesh enables clinical teams to own patient data products with embedded privacy controls while research teams access de-identified datasets through governed interfaces. This structure supports both compliance and innovation.

Manufacturing

Manufacturing environments generate massive volumes of IoT telemetry, sensor data, and operational metrics. Plant-level teams understand their equipment and processes best.

A data mesh allows each plant to own its operational data products, while corporate teams consume aggregated metrics for enterprise-wide visibility.

How Data Mesh is Implemented in Organizations

Implementing data mesh requires deliberate organizational and technical changes. The following steps outline a practical approach.

Step #1: Identify and Define Data Domains

Most organizations already have natural domain boundaries; they exist in how teams communicate, how software is structured, and how business units operate. The goal is to make these boundaries explicit and assign data ownership accordingly.

Start by mapping existing team structures and asking who generates each critical dataset:

- Sales teams own pipeline and revenue data because they understand deal stages and conversion logic

- Product teams own usage and feature data because they define what gets tracked

- Finance teams own transaction and billing data because they set the accounting rules

- Operations teams own fulfillment and logistics data because they manage the underlying processes
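
The mapping exercise above can be sketched in a few lines of Python. This is only an illustration: the dataset names, the `DATASET_PRODUCERS` inventory, and the `assign_owners` helper are hypothetical, and a real inventory would come from your own systems and interviews rather than a hard-coded dictionary.

```python
from collections import defaultdict

# Hypothetical inventory: dataset name -> team that generates it.
# None marks a dataset whose producing team has not been identified yet.
DATASET_PRODUCERS = {
    "sales_pipeline": "sales",
    "revenue": "sales",
    "feature_usage": "product",
    "billing": "finance",
    "shipments": "operations",
    "web_sessions": None,
}


def assign_owners(producers):
    """Group datasets by owning domain and collect datasets with no clear owner."""
    by_domain = defaultdict(list)
    unowned = []
    for dataset in sorted(producers):
        team = producers[dataset]
        if team is None:
            unowned.append(dataset)
        else:
            by_domain[team].append(dataset)
    return dict(by_domain), unowned


owned, unowned = assign_owners(DATASET_PRODUCERS)
print(owned)
print("Needs an owner:", unowned)
```

Datasets that end up in the unowned list are usually the ones worth discussing first, because nobody is currently accountable for them.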

Step #2: Establish Data as a Product Guidelines

Treating data as a product means giving domain teams clear expectations for what they must deliver. Without these guidelines, decentralization leads to inconsistent quality and unusable outputs.

Every data product should ship with a standard set of artifacts that consumers can rely on:

- A schema definition with documentation explaining what each field means and how it should be used

- Quality checks that run automatically and alert owners when data falls outside expected ranges

- Freshness commitments that tell consumers how current the data will be and when it refreshes

- An owner contact who can answer questions and resolve issues within a defined response window
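
One way to make these artifacts concrete is to capture them in a small contract object that travels with the data product. The sketch below is an assumption about what such a contract might look like, not a standard: the `DataProductContract` class, its field names, and the `sales.orders` example are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class DataProductContract:
    """Hypothetical contract; field names are illustrative, not a standard."""
    name: str
    owner_contact: str        # who answers questions and resolves issues
    schema: dict              # field name -> documentation string
    max_staleness: timedelta  # freshness commitment
    value_ranges: dict        # field name -> (min, max) expected range

    def quality_issues(self, rows):
        """Return human-readable problems: missing fields or out-of-range values."""
        issues = []
        for i, row in enumerate(rows):
            for field in self.schema:
                if field not in row:
                    issues.append(f"row {i}: missing field '{field}'")
            for field, (low, high) in self.value_ranges.items():
                value = row.get(field)
                if value is not None and not (low <= value <= high):
                    issues.append(f"row {i}: {field}={value} outside [{low}, {high}]")
        return issues

    def is_fresh(self, last_refreshed, now=None):
        """True if the last refresh falls within the freshness commitment."""
        now = now or datetime.now(timezone.utc)
        return now - last_refreshed <= self.max_staleness


orders = DataProductContract(
    name="sales.orders",
    owner_contact="sales-data@example.com",
    schema={"order_id": "Unique order identifier", "amount": "Order total in USD"},
    max_staleness=timedelta(hours=24),
    value_ranges={"amount": (0, 1_000_000)},
)

rows = [{"order_id": 1, "amount": 120.0}, {"order_id": 2, "amount": -5.0}]
issues = orders.quality_issues(rows)
print(issues)  # in practice, a non-empty list would trigger an alert to the owner
```

Keeping the schema documentation, ranges, freshness window, and owner in one place means consumers and automated checks read from the same definition.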

Step #3: Build the Self-Serve Data Platform Layer

Domain teams should not need to become infrastructure experts to publish data products. The self-serve data platform removes this burden by providing pre-built capabilities that teams can consume through simple interfaces.

Platform teams should prioritize the capabilities that domain teams request most frequently:

- Storage and compute environments that teams can provision without filing tickets

- Pipeline templates that handle common ingestion patterns with minimal configuration

- A data catalog where teams register products and consumers discover what exists

- Built-in governance controls that enforce policies automatically during deployment
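
To illustrate the catalog capability, here is a minimal in-memory sketch of registration and discovery. The `DataCatalog` class and its `register` and `search` methods are hypothetical and do not reflect the API of any particular catalog product; a real platform would back this with a catalog service.

```python
class DataCatalog:
    """In-memory stand-in for a catalog service (hypothetical API)."""

    def __init__(self):
        self._products = {}

    def register(self, name, domain, description, tags=()):
        """Called by a domain team when it publishes a data product."""
        if name in self._products:
            raise ValueError(f"data product '{name}' is already registered")
        self._products[name] = {
            "domain": domain,
            "description": description,
            "tags": {t.lower() for t in tags},
        }

    def search(self, keyword=None, domain=None):
        """Called by consumers: filter by owning domain and/or a keyword."""
        needle = keyword.lower() if keyword else None
        matches = []
        for name in sorted(self._products):
            meta = self._products[name]
            if domain and meta["domain"] != domain:
                continue
            haystack = f"{name} {meta['description']}".lower()
            if needle and needle not in haystack and needle not in meta["tags"]:
                continue
            matches.append(name)
        return matches


catalog = DataCatalog()
catalog.register("pricing.price_list", "pricing", "Current list prices per SKU", tags=["retail"])
catalog.register("inventory.stock_levels", "inventory", "Stock on hand per warehouse", tags=["retail"])
catalog.register("finance.invoices", "finance", "Issued invoices with billing status")

print(catalog.search(keyword="retail"))
print(catalog.search(domain="finance"))
```

The point of the sketch is the interface: publishing is one call for the domain team, and discovery needs no knowledge of where the data physically lives.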

Step #4: Implement Federated Governance

Federated data governance prevents decentralization from becoming chaos. Domains operate independently, but they follow shared rules that ensure interoperability. The key is automating enforcement so that governance scales without creating new bottlenecks:

- Metadata standards that require every product to include lineage, ownership, and classification tags

- Automated policy checks that block deployment if security or quality requirements are not met

- Cross-domain working groups that meet regularly to evolve standards based on real-world friction

- A central registry that tracks all active data products and their compliance status
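
The automated checks and registry described above might look roughly like the following. This is a plain-Python sketch under stated assumptions: the required tags, the allowed classification values, and the `deploy` function are hypothetical, and in practice these rules would typically be expressed in a dedicated policy engine rather than application code.

```python
# Hypothetical shared standards agreed on by the cross-domain working group.
REQUIRED_TAGS = ("owner", "lineage", "classification")
ALLOWED_CLASSIFICATIONS = {"public", "internal", "confidential"}


class PolicyViolation(Exception):
    """Raised when a data product fails a governance check."""


def find_violations(metadata):
    """Return a list of policy violations for one product's metadata."""
    violations = [
        f"missing required tag: {tag}" for tag in REQUIRED_TAGS if not metadata.get(tag)
    ]
    classification = metadata.get("classification")
    if classification and classification not in ALLOWED_CLASSIFICATIONS:
        violations.append(f"unknown classification: {classification}")
    return violations


def deploy(name, metadata, registry):
    """Record compliance status in the registry and block non-compliant products."""
    violations = find_violations(metadata)
    registry[name] = "blocked" if violations else "compliant"
    if violations:
        raise PolicyViolation(f"{name}: " + "; ".join(violations))
    return f"{name} deployed"


registry = {}
print(deploy(
    "sales.orders",
    {"owner": "sales", "lineage": ["crm.deals"], "classification": "internal"},
    registry,
))
try:
    deploy("marketing.leads", {"owner": "marketing", "classification": "secret"}, registry)
except PolicyViolation as err:
    print("Blocked:", err)
print(registry)
```

Because the check runs at deployment time, domain teams get immediate feedback and no central reviewer has to approve each release by hand.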

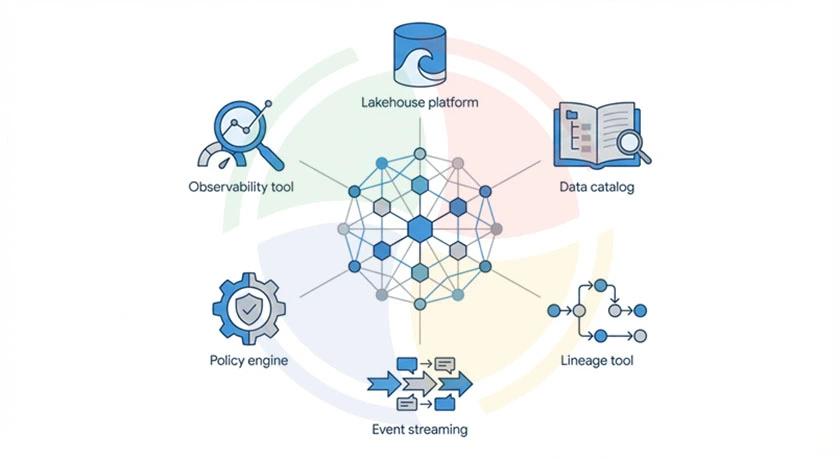

Technology Stack That Enables Data Mesh

Building a data mesh requires a technology foundation that supports decentralized ownership with shared infrastructure. Most implementations rely on the following components:

- Lakehouse and warehouse platforms like Databricks or Snowflake, often paired with open table formats such as Delta Lake, for unified storage

- Data catalogs like Atlan, Alation, or DataHub for discovery and documentation

- Lineage tools like OpenLineage or Marquez for tracking data flow

- Event streaming platforms like Kafka or Kinesis for real-time data exchange

- Policy engines like Open Policy Agent for automated governance enforcement

- Observability tools like Monte Carlo or Datadog for monitoring data health

Organizations looking to modernize their data infrastructure benefit from expert guidance in selecting and implementing these technologies. Aegis Softtech helps enterprises build foundational capabilities through data warehousing services that support data mesh adoption at scale.

When Data Mesh is the Right Choice (And When It is Not)

You can prevent costly misalignment by understanding when data mesh fits and when it does not make sense. Here’s a breakdown:

| Data Mesh is Right For | Data Mesh is Not Ideal For |
| --- | --- |
| Large enterprises with multiple distinct business domains | Startups with small teams and limited data complexity |
| Organizations with autonomous business units that need independent velocity | Companies with highly centralized decision-making structures |
| Enterprises struggling with central data team bottlenecks | Organizations lacking clear domain ownership or accountability |
| Companies with mature engineering cultures and product thinking | Teams without the skills or resources to own data products |
| Industries requiring clear data lineage and distributed accountability | Companies with low data volumes that do not justify the overhead |

Initiate Data Mesh Adoption With Aegis Softtech

Transitioning to a data mesh architecture requires strategic planning, technical expertise, and organizational change management. At Aegis Softtech, we partner with enterprises to navigate this transformation successfully.

Our team helps organizations assess readiness, define domain boundaries, establish governance frameworks, and design platform architectures that support decentralized ownership.

Through our data warehouse consulting services, we bring experience from implementing modern data architecture across industries and organizational contexts.

Moreover, if you wish to augment your team during the transition, we offer access to skilled data warehouse developers and architects who can accelerate implementation.

Your team also receives essential post-development training and knowledge transfer for sustainable business growth.

Contact Aegis Softtech today to discuss how we can help you implement a strategy tailored to your business needs.

FAQs

1. What is the history of data mesh?

Zhamak Dehghani introduced data mesh in 2019 while working at ThoughtWorks. The concept emerged from her observations of how large enterprises struggled with centralized data architectures that could not scale organizationally.

2. What skills are needed for data mesh?

Teams need data engineering skills to build and maintain data products, DevOps expertise to manage platform infrastructure, governance knowledge to implement policy automation, and product management capabilities to apply product thinking to data assets. Leadership must also understand how to create accountability structures that support distributed ownership.

3. How is data stored in a data mesh?

In many implementations, data lives in shared infrastructure, typically a lakehouse platform, while domain teams maintain ownership and access control for their specific products. In that setup, physical storage stays centralized for efficiency, while logical ownership is distributed to the domains that understand the data best.

4. What problems does data mesh solve?

Data mesh relieves the central team bottlenecks that slow down analytics delivery. It also improves data quality by assigning accountability to domain experts, reduces governance gaps, and breaks down organizational silos that lead to duplicated effort and inconsistent metrics.