At 9:02 AM, your dashboard says inventory is healthy.

At 9:17 AM, TikTok makes your product go viral.

At 9:43 AM, you’re oversold.

Your data? Still calm. Still confident. Still wrong.

That’s batch analytics working (rather failing) in a real-time world.

A real-time data warehouse (RTDW) flips the model. Instead of refreshing data overnight, it ingests events continuously and delivers sub-second to sub-minute query freshness. The moment something happens, be it a click, purchase, sensor spike, it’s queryable.

In this blog, we’ll break down RTDW architecture, implementation strategy, leading solutions, and industry use cases that make real-time data warehouses a competitive necessity.

A real-time data warehouse continuously ingests, processes, and makes data queryable within milliseconds to minutes using CDC and streaming pipelines

Core Components- Change Data Capture (CDC)

- Streaming ingestion (Kafka, Kinesis)

- Columnar storage

- Real-time query engines

- ClickHouse

- Snowflake (Snowpipe)

- BigQuery (streaming inserts)

- Amazon Redshift (streaming ingestion)

- Databricks

- Apache Pinot

Organizations needing operational analytics, fraud detection, live dashboards, or customer-facing analytics

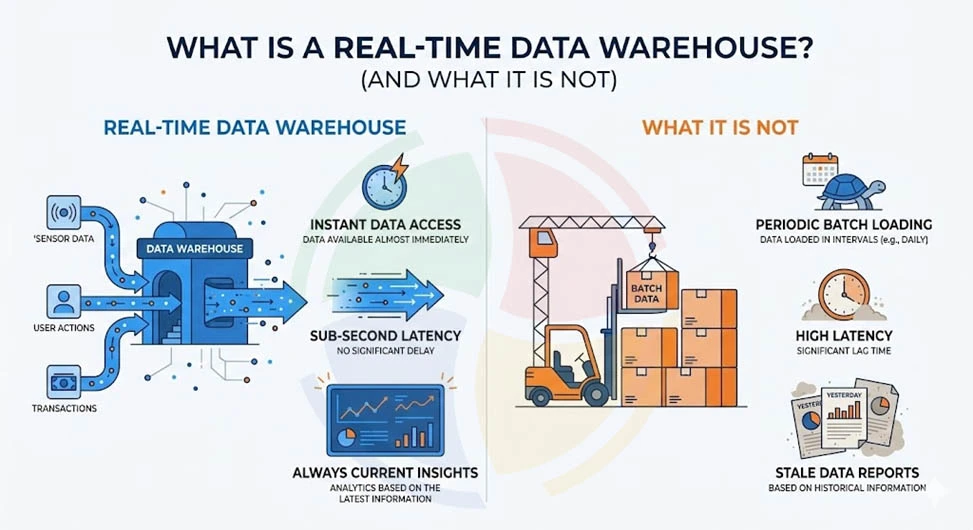

What is Real Time Data Warehouse? (And What It is Not)

A real-time data warehouse (RTDW) is a data warehouse that supports analytics on both streaming (“hot”) data and historical (“cold”) data at the same time.

Hot data is live, continuously generated information processed in near real time. For example, a credit card transaction is being analyzed instantly for fraud before approval.

Cold data is historical, stored data used for long-term analysis and trend evaluation. That might mean analyzing three years of past transactions to detect spending patterns or risk behavior.

Essentially, a Real-Time Data Warehouse (RTDW) is an analytical system built to ingest live data, such as events, transactions, and IoT signals. This system merges live data with years of historical records and allows both hot and cold queries within a unified engine.

What a Real-Time Data Warehouse is NOT:

- Not a message queue (e.g., Kafka-style event buffering)

- Not a data lake storing raw files without structured analytics

- Not a streaming-only system that can’t join with historical data

- Not a standard OLTP database built for transactions, not analytics

In short, a real-time data warehouse is built for analytics at speed and at scale, where streaming intelligence meets deep historical context. That’s what separates true RTDW architecture from everything else pretending to be “real-time.”

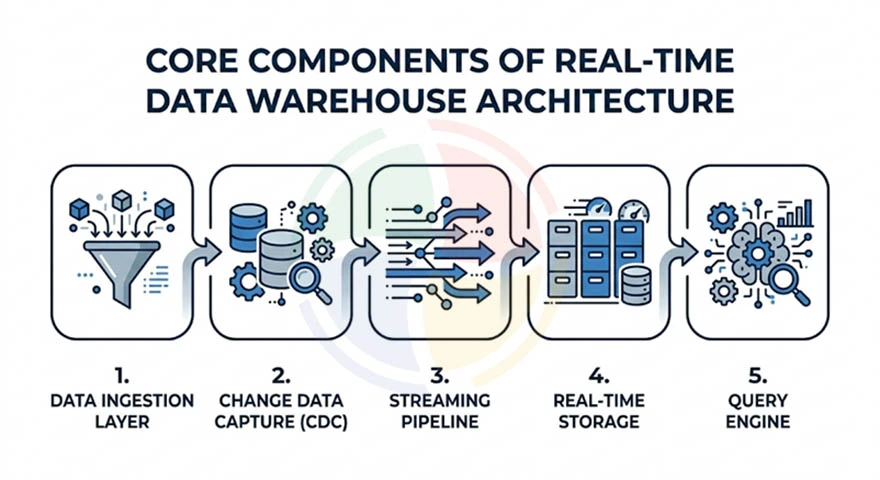

Core Components of Real-Time Data Warehouse Architecture

If you strip it down, a real-time data warehouse is about one thing: moving data from event to insight in seconds, not hours.

To make that happen reliably at scale, you need a tightly integrated architecture that consists of the following components:

Data Ingestion Layer

This is your front door. A real-time data warehouse starts by capturing data as it’s generated.

You’ll typically see:

- Direct connections from IoT devices or operational systems

- APIs pulling transactions from payment gateways or SaaS platforms

- A message bus like Apache Kafka, AWS Kinesis, or Redpanda to stream high-volume events

The goal is low-latency ingestion with horizontal scalability. If ingestion lags, your “real-time” promise collapses.

Change Data Capture (CDC)

CDC keeps your warehouse synchronized with operational databases without heavy batch loads.

There are two approaches:

- Log-based CDC: captures changes directly from database logs.

- Query-based CDC: polls tables for updates.

Streaming Pipeline

This is where data gets shaped mid-flight. A strong streaming layer handles:

- Serialization (Avro, Protobuf, JSON)

- Reliable transport across distributed systems

- Exactly-once delivery guarantees

- Schema evolution without breaking pipelines

Without this layer, you risk duplicates, data loss, or corrupted analytics.

Real-Time Storage

This is where streaming data is stored in columnar databases and optimized for fast aggregations and time-series analytics. It enables high-speed querying across massive datasets.

Query Engine

The query engine powers sub-second OLAP queries with high concurrency, supporting thousands to hundreds of thousands of queries per second. It delivers instant dashboards, alerts, and operational insights.

Most teams underestimate schema evolution in streaming pipelines. When your source database adds a column mid-stream, your CDC pipeline either handles it gracefully or breaks production. — Lead Data Architect, Aegis Softtech

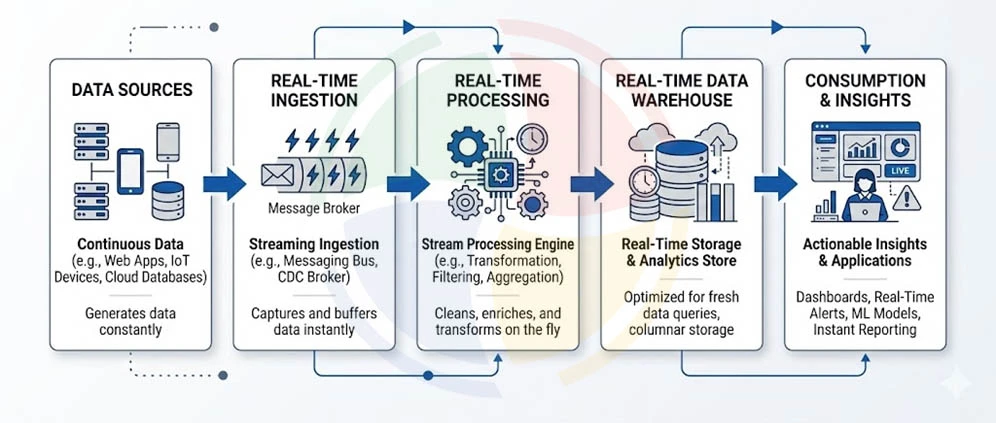

How Does a Real-Time Data Warehouse Architecture Work?

A real-time data warehouse doesn’t wait for nightly batch jobs. It processes events as they happen, so insights reflect what’s happening right now, not what happened yesterday.

Think of a real-time data warehouse as a relay race where every baton pass happens in milliseconds. Data doesn’t sit in staging tables. It doesn’t queue for ETL windows. It flows continuously from source systems to analytics dashboards.

Here’s how it works in real-time:

Layer 1: Something Changes at the Source

It all starts with an event. Maybe a customer places an order. Or payment clears. An IoT sensor records a temperature spike. That event lives inside your operational database, such as PostgreSQL, MySQL, or MongoDB; whatever runs your transactions.

In traditional architectures, the data warehouse would only “notice” this change during a scheduled batch job, maybe every 6 hours. That delay creates blind spots in fraud detection, inventory tracking, and customer analytics.

In a real-time data warehouse architecture, the system is always listening. The moment a row is inserted, updated, or deleted, it’s captured.

Layer 2: Change Data Capture (CDC) Detects the Event

Change Data Capture monitors the database transaction log. It’s the same log used internally for durability and crash recovery. Instead of scanning entire tables asking, “What changed since last night?” it reads the log stream directly.

It extracts only what matters: inserts, updates, and deletes, in real time.

Each event is enriched with metadata:

- Timestamp

- Operation type

- Before-and-after values.

All of it makes downstream processing accurate and traceable. This efficiency is what separates modern real-time data warehouse systems from legacy batch ETL pipelines.

If you’re evaluating data warehouse consulting partners, this CDC layer is often where architectural quality makes or breaks performance.

Layer 3: Streaming Pipeline Transports the Event

Once captured, the event flows into a streaming platform like Kafka, Kinesis, or Redpanda.

Consider this as a durable, ordered buffer. It decouples producers (databases) from consumers (warehouse, alerts, caches). The pipeline handles serialization, guarantees delivery (exactly-once or at-least-once), and manages schema evolution when source tables change.

Multiple systems can subscribe simultaneously. One consumer feeds the warehouse. Another triggers real-time alerts. A third updates a recommendation engine.

This is where modern data warehouse solutions gain elasticity and fault tolerance.

Layer 4: Real-Time Storage Ingests Incrementally

Now the warehouse, be it Snowflake, ClickHouse, or BigQuery, ingests events incrementally.

Columnar storage formats optimize analytical performance, and background processes update indexes efficiently. Within seconds (or a few minutes), that original event is fully queryable.

This incremental ingestion model is what enables scalable, always-fresh analytics.

Layer 5: Query Engine Serves Sub-Second Analytics

Finally, the query engine does its job.

Dashboards, applications, or analysts send queries. OLAP engines compute aggregations in sub-second to single-digit seconds. High-concurrency support, often thousands to hundreds of thousands of queries per second, enables customer-facing analytics, not just internal BI.

That’s the endgame: operational intelligence powered by a real-time data warehouse.

The magic of RTDW is the seamless handoff between layers. Break one link, and you're back to batch latency with streaming complexity. — Lead Data Architect, Aegis Softtech

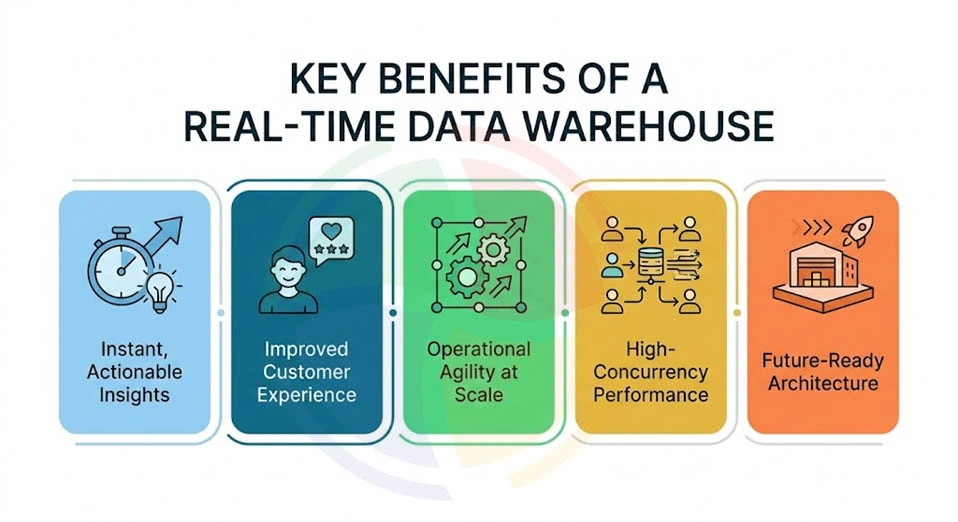

Key Benefits of a Real-Time Data Warehouse

A real-time data warehouse is a competitive advantage engine. Here are its key benefits:

- Instant, Actionable Insights

Analytics reflect live operational data, not last night’s batch. That means fraud detection, inventory optimization, and customer personalization happen in the moment where impact actually lives.

- Improved Customer Experience

With fresh data powering dashboards and applications, businesses can deliver dynamic pricing, real-time recommendations, and responsive support.

- Operational Agility at Scale

Real-time ingestion and incremental processing eliminate heavy rebuilds. Organizations can adapt to market shifts without overhauling pipelines; that’s something often optimized through expert big data consulting.

- High-Concurrency Performance

Modern OLAP engines handle thousands of simultaneous queries, supporting both internal BI and customer-facing analytics without latency spikes.

- Future-Ready Architecture

Streaming-native systems integrate seamlessly with AI, ML, and event-driven applications, positioning your enterprise for continuous innovation.

Real-Time Data Warehouse vs. Traditional Data Warehouse: Key Differences

If traditional data warehouses are built for hindsight, real-time data warehouses are built for foresight. Here are the key differences between the two:

| Dimension | Traditional Data Warehouse | Real-Time Data Warehouse |

| Data Freshness | 6-24 hours (batch ETL) | Seconds to minutes (CDC + streaming) |

| Query Latency | Minutes to hours | Sub-second to seconds |

| Concurrency | 100s of QPS (internal analysts) | 1,000-100,000+ QPS (user-facing apps) |

| Architecture | ETL → Staging → Load | CDC → Stream → Incremental Load |

| Use Case | Historical reporting, trend analysis | Operational intelligence, fraud detection, live dashboards |

| Cost Model | Pay for scheduled compute | Pay for continuous computing |

The shift from batch to streaming isn't just about speed—it's about enabling decisions that weren't possible before. You can't catch fraud in yesterday's data. — Senior Data Engineer, Aegis Softtech

Best Real-Time Data Warehouse Solutions

Choosing the best real-time data warehouse solutions depends on scale, latency needs, and ecosystem fit.

Enterprise Cloud Platforms

Enterprise-grade platforms combine streaming, storage, and analytics under one roof. But they’re not all the same. Here’s a look at the top cloud data warehouse solutions:

| Platform | Architecture Focus | Real-Time Ingestion | Query Performance | Best Fit |

| Amazon Web Services (AWS) | Broad IaaS + PaaS ecosystem | Kinesis, MSK, DMS | High-performance with Redshift & Aurora | Large-scale digital enterprises |

| Microsoft Azure | Integrated enterprise cloud | Event Hubs, Stream Analytics | Synapse + Fabric analytics engine | Regulated industries |

| Google Cloud Platform (GCP) | Data + AI-first cloud | Pub/Sub streaming | BigQuery distributed OLAP | Data-driven tech companies |

| Snowflake Data Cloud | Cloud-agnostic data platform | Snowpipe Streaming | High-concurrency OLAP | Enterprise analytics modernization |

| Oracle Cloud Infrastructure (OCI) | Enterprise + database-optimized | Streaming service | Autonomous DB performance | Database-heavy enterprises |

Purpose-Built Real-Time Analytics Databases

These platforms are engineered specifically for streaming + low-latency analytics at scale.

If your workload demands millisecond ingestion and sub-second queries, these systems are built for that pressure.

| Tool | Architecture Focus | Real-Time Ingestion | Query Performance | Best Fit |

| ClickHouse | Columnar OLAP engine | Native streaming integrations | Sub-second analytics | Ad tech, observability |

| Apache Druid | Time-series + OLAP hybrid | Native stream ingestion | Fast aggregations on event data | Event analytics |

| Rockset | Search + analytics engine | Continuous ingestion | Low-latency indexed queries | Customer-facing apps |

| SingleStore | HTAP (OLTP + OLAP) | Real-time pipelines | High-speed mixed workloads | Fintech, SaaS |

| Apache Pinot | Real-time OLAP | Streaming-first design | Millisecond-level QPS | Product analytics |

Best Real-Time Data Warehouse for Startups

Startups need managed, flexible systems that scale without hiring a DevOps army. Early-stage teams should optimize for simplicity and elasticity. That said, the best options for a real-time data warehouse for startups include:

| Tool | Architecture Focus | Real-Time Ingestion | Query Performance | Best Fit |

| Snowflake | Cloud-native data cloud | Snowpipe + streaming support | High-performance OLAP | SaaS analytics |

| BigQuery | Serverless warehouse | Streaming inserts | Fast distributed queries | Growth startups |

| ClickHouse Cloud | Managed columnar OLAP | Real-time ingestion support | Sub-second performance | Data-heavy apps |

| SingleStore | HTAP database | Native pipelines | Real-time mixed queries | Product-led SaaS |

| Rockset | Index-on-ingest system | Continuous ingestion | Low-latency search + SQL | API-first apps |

Best Real-Time Data Warehouse for Tech

Tech enterprises need concurrency, workload isolation, and embedded analytics capabilities. The best options for a real-time data warehouse for tech companies include:

| Tool | Architecture Focus | Real-Time Ingestion | Query Performance | Best Fit |

| Snowflake | Enterprise data cloud | Native streaming + tasks | High concurrency OLAP | Enterprise SaaS |

| ClickHouse | High-speed OLAP | Kafka integrations | Ultra-fast aggregations | High-QPS apps |

| Apache Pinot | Real-time OLAP engine | Streaming-native | Millisecond response time | User analytics |

| SingleStore | Distributed SQL HTAP | Built-in pipelines | Low-latency mixed workloads | Fintech platforms |

| BigQuery | Serverless warehouse | Streaming API | Fast analytical workloads | Large-scale analytics |

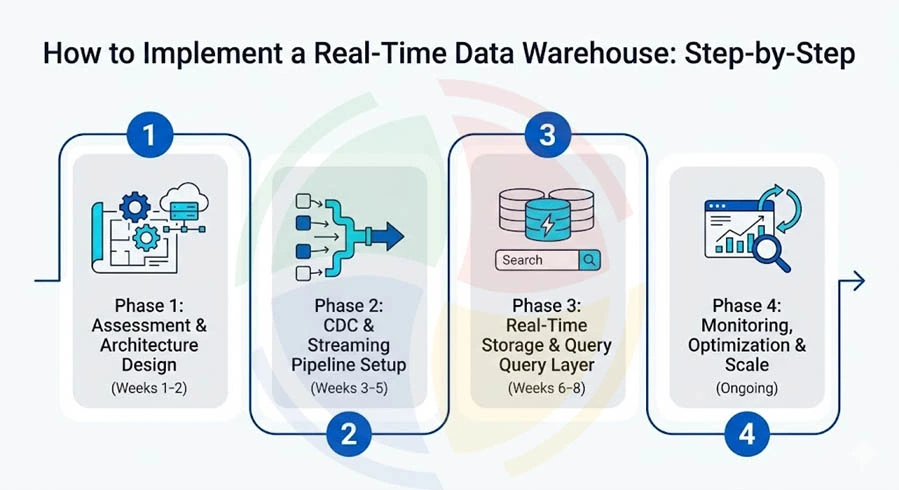

How to Implement a Real-Time Data Warehouse: Step-by-Step

So you’ve decided you need a real-time data warehouse. Good move.

Now, let’s break down how you can implement RTDW into phases you can actually execute without boiling the ocean:

Phase 1: Assessment & Architecture Design (Weeks 1-2)

Define business impact first. Technology comes second.

Before touching pipelines or tools, clarify why real-time matters. For example, if you’re in fintech, fraud detection may require sub-second alerts. In retail, live inventory visibility might need updates every 30 seconds. These distinctions define architecture.

Next, audit your source systems for CDC compatibility. If you’re running PostgreSQL with logical replication, you’re in good shape for log-based CDC. If you’re relying on query-based polling, expect higher latency.

Then define latency tiers:

- Sub-second (fraud)

- Sub-minute (ride allocation)

- Near-real-time (ops dashboards).

Only after that should you select your architecture: streaming-first for mission-critical use cases, or hybrid batch + streaming if you’re modernizing gradually.

Start with one high-impact use case; don't try to stream everything. A single successful pilot builds organizational confidence faster than a grand architecture plan. — VP of Data Engineering, Aegis Softtech

Phase 2: CDC & Streaming Pipeline Setup (Weeks 3-5)

Once your architecture is defined, enable CDC at the database level. PostgreSQL logical replication or MySQL binlog are common entry points.

From there, deploy a streaming platform like Kafka, Kinesis, or Redpanda to move events in real time.

However, streaming without governance is fragile. That’s why implementing a schema registry early matters. Imagine adding a new “discount_type” column in production without schema management; downstream jobs could fail silently.

At the same time, configure exactly-once delivery guarantees. If your internal team lacks event-driven architecture expertise, you can choose to hire data warehouse developers who’ve built production-grade streaming systems before.

💡 Pro Tip: Always test CDC pipelines with live schema evolution scenarios before production. Add, drop, and rename fields intentionally to validate resilience.

Phase 3: Real-Time Storage & Query Layer (Weeks 6–8)

Now that events are flowing, you need a warehouse that can ingest and serve data concurrently. Platforms like Snowflake, ClickHouse, or BigQuery support near real-time ingestion with scalable compute separation.

From there, implement incremental materialization. For example, instead of recomputing a daily sales table, process only new transactions every few seconds. This dramatically reduces compute load.

Then optimize indexing based on real user behavior. If 70% of queries filter by customer_id, index accordingly. Pair that with smart partitioning (e.g., by date or region) and data tiering to control storage costs.

💡 Pro Tip: Stress-test concurrency. A dashboard that runs smoothly with five users may fail when 200 sales managers log in on Monday morning.

Phase 4: Monitoring, Optimization & Scale (Ongoing)

Once live, your most critical metric is CDC lag, which is how many seconds behind the source system you are. If lag grows from 5 seconds to 2 minutes, something’s wrong.

Next, monitor query latency percentiles (p50, p95, p99). For example, if your p99 spikes during flash sales, you may need auto-scaling or query optimization.

Equally important, implement automated alerts for pipeline failures and data quality drift.

Top Use Cases for Real-Time Data Warehouses

A real-time data warehouse shifts analytics from passive reporting to active decision-making. Instead of asking, “What happened yesterday?” you’re asking, “What’s happening right now, and what should we do about it?”

Below are the highest-impact use cases across industries—each powered by streaming ingestion, low-latency queries, and continuous transformation.

Software as a Service (SaaS)

A modern real-time data warehouse for SaaS enables product, growth, and finance teams to operate from the same up-to-the-second dataset. Key use cases include:

- Product analytics: Real-time feature usage tracking to monitor adoption and identify friction instantly.

- Customer health scoring: Churn prediction using live engagement and behavioral signals.

- Usage-based billing: Accurate, real-time metering for consumption pricing models.

For early-stage companies, the right real-time data warehouse for startups creates faster feedback loops and sharper iteration cycles.

E-Commerce

In e-commerce, revenue decisions happen in milliseconds. The best real-time data warehouse solutions help with:

- Fraud detection: Millisecond transaction scoring before payment authorization.

- Dynamic pricing: Inventory-aware price optimization during demand fluctuations.

- Personalization: Real-time recommendation engines driven by live browsing behavior.

- Operational dashboards: Live order tracking and fulfillment visibility.

💡 Pro Tip: For fraud detection, target sub-200ms query latency. Any slower, and the transaction would clear before you flagged it.

Financial Services & IoT

A robust real-time data warehouse for tech companies handling financial or IoT workloads ensures high-frequency insights without compromising governance.

Key use cases include:

- Risk monitoring: Real-time exposure and position tracking across portfolios.

- Anomaly detection: Identification of irregular patterns in sensor or transaction streams.

- Compliance reporting: Continuous audit trails using CDC (Change Data Capture).

Marketing & Customer Experience

Personalization only works if insights are delivered in-session, not post-session. Here’s where RTDW delivers in the vertical:

- Campaign optimization: Real-time performance tracking and budget reallocation.

- Audience segmentation: Live behavioral grouping for triggered messaging.

- Attribution modeling: Continuously updated multi-touch conversion tracking.

Operations & Supply Chain

Key use cases in operations and supply chain include:

- Inventory reconciliation: Continuous stock level synchronization across locations.

- Shipment tracking: Predictive delay alerts based on live logistics data.

- Automated replenishment: Trigger-based restocking using streaming demand signals.

Accelerate Your Real-Time Data Strategy with Aegis Softtech

A real-time data warehouse closes the gap between business events and actionable insights. No more pricing decisions based on stale data. No more fraud alerts after the transaction clears. Just live, decision-ready intelligence.

But speed without the right architecture creates bottlenecks.

From architecture design and CDC implementation to streaming pipelines and continuous optimization, Aegis Softtech delivers end-to-end RTDW solutions built for scale and governance.

Our key capabilities include:

- Data Warehouse Services: Architecture design, implementation, and seamless migration

- Big Data Services: Streaming pipelines with Kafka and Spark

- Snowflake Services: Snowpipe, Streams & Tasks, performance optimization

- Azure Synapse Analytics: Real-time analytics and Synapse Link integration

Ready to move from batch to real-time?

FAQs

1. What is a real-time data warehouse?

A real-time data warehouse continuously ingests data using CDC and streaming pipelines, making it queryable within seconds to minutes. Unlike traditional warehouses that refresh every 6-24 hours, RTDWs deliver sub-minute data freshness for operational analytics.

2. What is an example of a real-time data warehouse?

A common example is an e-commerce platform using a real-time data warehouse architecture to power live fraud detection and dynamic pricing. As transactions stream in, the system updates dashboards, runs risk models, and triggers alerts within milliseconds, without relying on overnight batch processing.

3. How does CDC enable real-time data warehousing?

CDC (Change Data Capture) tracks inserts, updates, and deletes from source databases by reading transaction logs. This incremental approach streams only changes rather than full table extracts, reducing latency and source system load.

4. Which AI has real-time data?

AI systems connected to streaming pipelines, such as fraud detection engines, recommendation systems, and IoT monitoring platforms, operate on real-time inputs. When built on a modern real-time data warehouse architecture, these AI models continuously ingest fresh data, ensuring decisions are based on the latest events, not stale snapshots.

5. What industries benefit most from real-time data warehouses?

Financial services (fraud detection, risk monitoring), e-commerce (inventory, personalization), SaaS (product analytics, usage billing), and IoT (sensor monitoring, predictive maintenance) see the highest ROI from real-time architectures.

6. How is a real-time data warehouse different from a data lake?

A real-time data warehouse optimizes for structured, queryable analytics with sub-second latency. Data lakes store raw, unstructured data at a lower cost but require additional processing for analytics. Modern lakehouses (Databricks, Delta Lake) bridge both approaches.