Say, a team launches a new analytics platform, pipelines run, dashboards appear, and everything looks fine for the first few months. Then the problems start:

- Queries take longer

- Two departments report different numbers for the same KPI

- A new source system breaks five downstream reports

This situation almost always traces back to poor warehouse design.

Data warehouse design best practices are the architecture principles, modeling standards, pipeline patterns, and governance rules that keep analytics environments reliable and scalable.

Follow them early, and your warehouse becomes a durable analytics platform. Ignore them, and you will probably eventually rebuild everything.

In this blog, we have brought you 12 practical best practices across architecture, schema modeling, pipelines, performance, and governance. So, you can now scale your warehouse without it turning into a data swamp.

Let’s begin!

Key Takeaways

Data warehouse design best practices are the repeatable rules for structuring layers, schemas, pipelines, and governance that keep analytical systems performant and trustworthy.

Key Practices:- Implement a layered architecture (raw → staging → curated)

- Separate storage and compute for elastic scalability

- Design for multi-source integration from day one

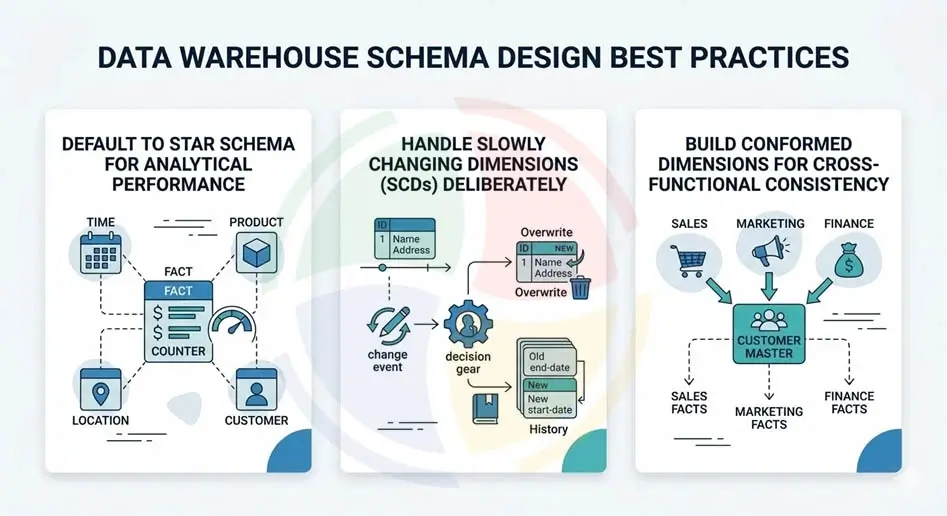

- Default to Star schema for analytical performance

- Handle slowly changing dimensions (SCDs) deliberately

- Build conformed dimensions for cross-functional consistency

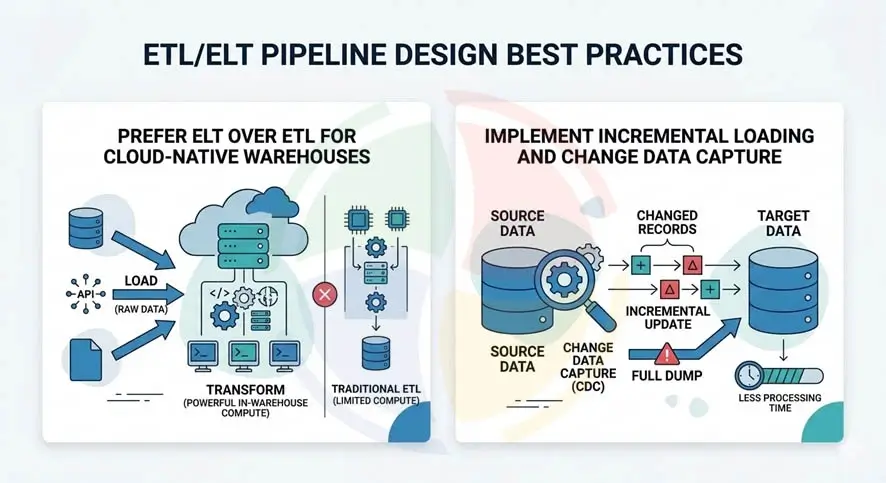

- Prefer ELT over ETL for cloud-native warehouses

- Implement incremental loading and change data capture

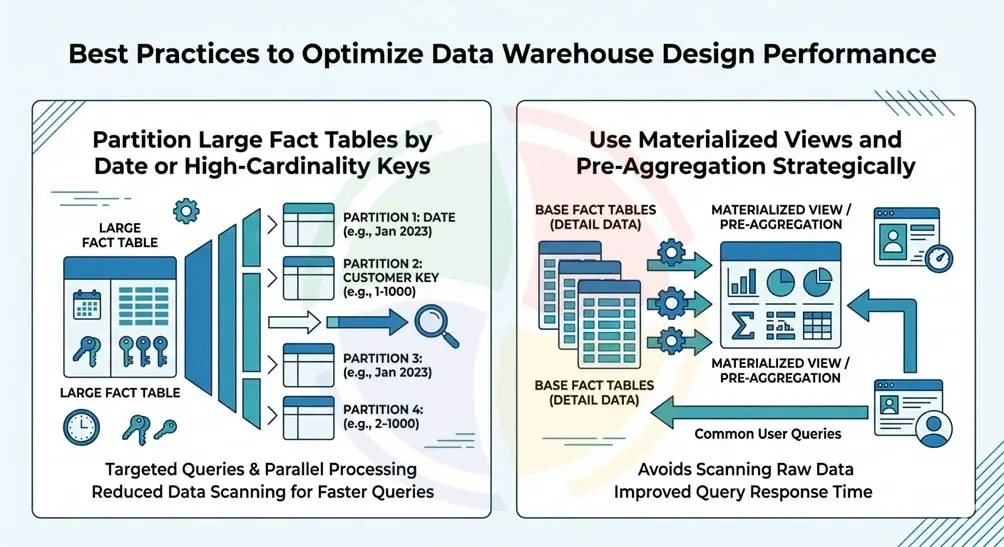

- Partition large fact tables by date or high-cardinality keys

- Use materialized views and pre-aggregation strategically

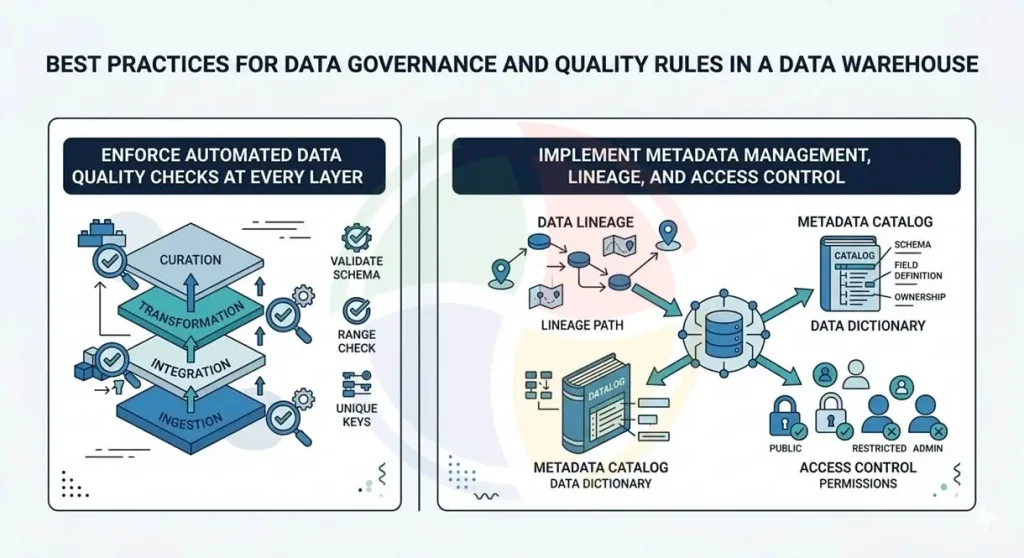

- Enforce automated data quality checks at every layer

- Implement metadata management, lineage, and access control

Why Does Data Warehouse Design Matter More Than Ever?

Your data warehouse design now determines whether your data strategy scales or collapses under its own complexity.

The global data warehousing market is valued at $39.18B in 2025 and projected to reach $103.49B by 2035, growing at a 10.2% CAGR. Investment is surging.

However, ROI depends heavily on applying the best practices for data warehouse design from the start.

Also, according to Forrester, over 25% of organizations estimate they lose more than $5 million annually due to poor data quality, with 7% reporting losses of $25 million or more. Many of those issues point to flawed modeling and weak data warehouse design during early implementation.

Another shift is accelerating the stakes: cloud adoption. With many warehouses now cloud-based, simply lifting legacy architectures into the cloud often reproduces old issues at scale.

Modern platforms demand hybrid architectures that support both batch pipelines and real-time streaming from day one.

Starting with a solid baseline for data warehouses ensures scalability, performance, and trustworthy analytics as data volumes grow.

The most expensive data warehouse is the one you build twice. Invest the first two weeks in dimensional modeling and layer architecture; it saves six months of refactoring later.

— Lead Data Architect, Aegis Softtech

Best Practices for Data Warehouse Architecture

Before designing schemas or pipelines, teams must define how data will flow through the warehouse. A strong data warehouse architecture isolates ingestion, transformation, and analytics workloads, so that failures in one stage do not cascade across the platform.

This architectural discipline becomes even more important when implementing modern cloud data warehouse solutions, where compute resources scale dynamically.

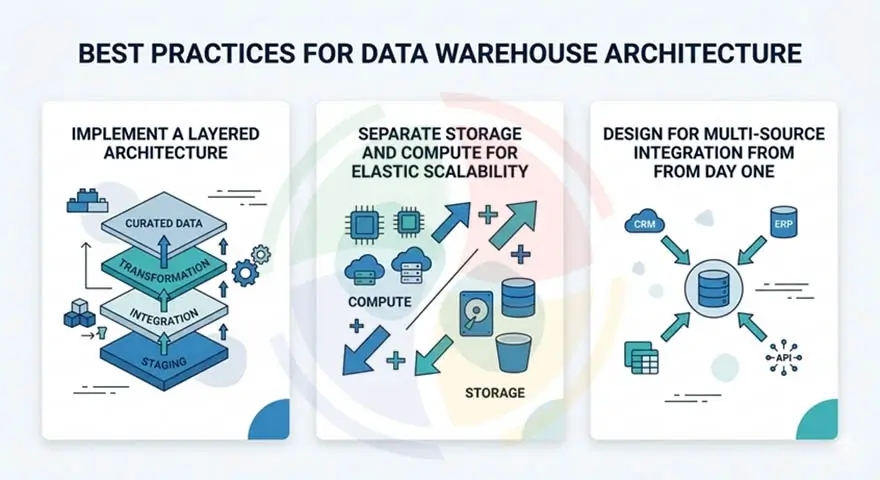

1. Implement a Layered Architecture (Raw → Staging → Curated)

One of the best practices for data warehouse implementation is a layered architecture. It separates the warehouse into ingestion, transformation, and analytics layers.

For example, if a CRM pipeline accidentally sends malformed data, the issue remains isolated in the staging layer rather than corrupting curated business tables.

Key implementation actions:

- Create a raw layer that stores source data exactly as received without transformations

- Use a staging layer to perform deduplication, type casting, and referential integrity validation

- Design a curated layer containing dimensional models optimized for BI tools

- Maintain immutable raw datasets to allow pipeline reprocessing when errors occur

- Version transformations so historical pipeline logic remains reproducible

2. Separate Storage and Compute for Elastic Scalability

Another critical principle in data warehouse best practices is separating storage and compute. Platforms like Snowflake, BigQuery, and Redshift Serverless use this decoupled architecture to scale workloads independently.

That means your nightly ETL jobs don’t compete with your executive dashboard queries at 9 a.m. Monday. Everyone stays happy—and your infrastructure bill stays predictable.

Elastic compute also enables smarter cost control through auto-suspend and auto-resume, ensuring warehouses only run when queries actually execute.

Key design actions:

- Implement a Raw → Staging → Curated pipeline structure to isolate ingestion, validation, and analytics layers.

- Keep the raw layer immutable so pipelines can be rebuilt without re-ingesting source data.

- Use decoupled compute clusters to separate ETL workloads from BI query workloads.

- Enable auto-suspend and auto-resume policies to eliminate idle compute costs.

3. Design for Multi-Source Integration from Day One

Few warehouses remain connected to only one data source. A modern analytics stack might ingest CRM data, product telemetry, payment transactions, marketing platforms, and third‑party APIs.

Designing the warehouse for multi‑source integration early avoids constant redesign when new systems appear.

Key design implementation actions:

- Decide between schema‑on‑read vs schema‑on‑write ingestion strategies depending on source variability

- Build a centralized metadata catalog (e.g., Apache Atlas, Alation, Snowflake Horizon) to track lineage across heterogeneous sources.

- Use change data capture (CDC) pipelines to ingest incremental updates from operational systems

- Maintain standardized ingestion patterns so new sources integrate without rewriting pipelines

Data Warehouse Schema Design Best Practices

Architecture defines how data flows into the warehouse. Schema design determines how efficiently analysts can query it.

Careful modeling decisions are a core component of modern data warehouse services, especially in environments with heavy BI workloads.

4. Default to Star Schema for Analytical Performance

Transactional systems often use highly normalized schemas. They minimize duplication but require many joins. That design works well for applications but performs poorly for analytical queries.

Star schemas solve this by organizing data around measurable events and descriptive dimensions.

Key schema implementation actions:

- Model fact tables to store measurable business events such as orders, clicks, or transactions

- Create dimension tables containing descriptive attributes like customer, product, region, or date

- Use denormalization to reduce join complexity for BI queries

- Reserve snowflake schemas for dimensions with very large hierarchical relationships

| Modeling Approach | Structure | Best Use Case |

| Kimball | Dimensional star schema | BI analytics |

| Inmon | Enterprise normalized warehouse | Enterprise integration |

| Data Vault | Hub‑link‑satellite | Highly scalable evolving data ecosystems |

5. Handle Slowly Changing Dimensions (SCDs) Deliberately

Customer attributes change constantly. A user changes their address. A product category is reclassified. A salesperson moves to another region.

If those changes overwrite previous values, historical reporting becomes inaccurate.

Slowly Changing Dimensions allow data warehouses to preserve history.

Key implementation actions:

- Define clear policies for SCD Type 1, Type 2, and Type 3 attribute handling

- Use SCD Type 2 for attributes where historical reporting accuracy matters

- Implement surrogate keys rather than relying on natural source keys

- Track effective start and end timestamps for versioned dimension records

Skipping SCD strategy is the fastest way to break historical reporting. We build SCD Type 2 into every dimension template by default and only override with Type 1 when the business explicitly agrees that history doesn’t matter.

— Senior Data Engineer, Aegis Softtech

6. Build Conformed Dimensions for Cross-Functional Consistency

When marketing says the company has 50,000 customers but finance reports 47,000, the problem usually isn’t the data. It has inconsistent definitions.

Conformed dimensions ensure every department uses the same definitions.

Key implementation actions:

- Create shared dimension tables reused across multiple fact tables

- Standardize key dimensions such as Customer, Product, Date, and Region

- Maintain a dimension registry documenting grain and attribute definitions

- Enforce consistent naming conventions across analytical models

ETL/ELT Pipeline Design Best Practices

Once schemas exist, pipelines move data from operational systems into the warehouse.

Reliable pipelines are what keep the warehouse continuously updated and trustworthy.

7. Prefer ELT Over ETL for Cloud-Native Warehouses

In traditional ETL pipelines, data is transformed before it enters the warehouse. That made sense when warehouse compute resources were expensive. Cloud data warehouse platforms changed that equation. Warehouses now provide massively parallel compute capable of running transformations directly.

Key implementation actions:

- Load raw data first, preserving the original dataset

- Execute transformations inside the warehouse using SQL models

- Store transformation logic in version‑controlled pipelines

- Build idempotent models so pipelines can run repeatedly without changing results

8. Implement Incremental Loading and Change Data Capture

Full refresh pipelines work for small datasets. At scale, they become slow and expensive.

Incremental loading processes only the rows that changed since the last pipeline run.

Key implementation actions:

- Implement incremental merge or upsert logic in transformation models

- Use CDC tools (Debezium, Fivetran, Streamkap) to capture inserts, updates, and deletes from source databases

- Track watermark columns such as _updated_at or _loaded_at

- Store pipeline checkpoints so ingestion jobs can resume after failures

Teams that skip incremental loading hit a wall at 50M rows. We design every pipeline with merge-on-key logic from the start, even when the initial dataset is small, because the migration cost of retrofitting later is ten times higher.

— Head of Data Engineering, Aegis Softtech

Best Practices for Data Warehouse Design to Optimize Performance

As warehouses grow, performance tuning becomes essential.

Experienced data warehouse developers apply several optimization techniques to keep analytical workloads fast.

9. Partition Large Fact Tables by Date or High-Cardinality Keys

Large fact tables often contain hundreds of millions of rows. Querying the entire dataset every time wastes compute resources.

Partitioning divides tables into smaller segments, so query engines read only the relevant portion of the data.

Key optimization actions:

- Partition large fact tables by date or timestamp columns

- Use clustering or sort keys for frequently filtered attributes

- Enable partition pruning to reduce query scan volume

- Avoid partitioning on low‑cardinality columns that create inefficient small partitions

10. Use Materialized Views and Pre-Aggregation Strategically

Some queries run hundreds of times per day. Executive dashboards often compute the same joins and aggregations repeatedly. Materialized views solve this by storing pre‑computed results.

Key implementation actions:

- Create materialized views for high‑frequency dashboard queries

- Pre‑aggregate metrics used by executive and operational reporting

- Schedule controlled refresh intervals based on data latency requirements

- Avoid using them on rapidly changing transactional tables

Data Governance and Quality Rules Best Practices

Even the best architecture fails if data quality cannot be trusted.

Data warehouse governance practices ensure datasets remain accurate, traceable, and secure.

11. Enforce Automated Data Quality Checks at Every Layer

Employees spend a lot of their time fixing bad data. The fix? Treat validation as part of the pipeline, not an afterthought.

Strong best practices for data warehouse design embed automated checks directly into transformation workflows using tools such as dbt tests, Great Expectations, or Soda.

If something breaks, the pipeline should fail before bad data reaches production.

Key quality check actions:

- Embed validation tests like not-null, unique, accepted_values, and referential integrity into every transformation layer

- Use pipeline gates so deployments fail before corrupted datasets reach the curated layer

- Run automated data quality checks during ingestion, transformation, and modeling stages

- Monitor quality metrics over time (freshness, completeness, duplication) to catch systemic issues early

12. Implement Metadata Management, Lineage, and Access Control

If someone asks, “Where did this metric come from?” and your team shrugs, that’s a governance problem.

Modern data warehouse schema design best practices emphasize strong metadata, lineage tracking, and role-based access. Without it, debugging analytics issues becomes archaeology—and regulatory audits become stressful.

Think of metadata as your warehouse’s instruction manual. It explains what data means, who owns it, and how it should be used.

Key implementation actions:

- Maintain a metadata catalog documenting table owners, refresh frequency, grain, and business definitions

- Implement end-to-end data lineage so every KPI can be traced back to its raw source tables

- Enforce RBAC policies with column-level and row-level security, especially for PII and PHI

- Apply data classification tags at ingestion to automatically flag sensitive fields and enforce compliance policies

You can’t govern what you can’t see. The first thing we build in any warehouse project isn’t a table; it’s a lineage graph that maps every source to every downstream dashboard.

— VP of Data Engineering & Governance, Aegis Softtech

What Are the Biggest Data Warehouse Design Mistakes to Avoid?

Even skilled professional teams occasionally fall into design traps that make warehouses difficult to maintain. Experienced data warehouse consultants typically watch for these issues during architecture reviews.

| Mistake | Why It Causes Problems | Recommended Fix |

| Over‑normalizing schemas | Too many joins slow analytical queries | Use star schema instead |

| Skipping staging layers | Makes debugging pipelines difficult | Always include raw and staging layers |

| No SCD strategy | Historical data becomes inaccurate | Implement SCD Type 2 by default |

| Inconsistent naming | Causes join errors and confusion | Enforce naming conventions |

| Undefined KPIs | Warehouse stores data without purpose | Design around business questions |

Build Your Data Warehouse Right the First Time

A data warehouse should scale with your business—not become the next rebuild project. The reality is that many warehouse initiatives face costly redesigns due to poor architecture, fragmented pipelines, or weak governance.

Following the right best practices for data warehouse design early prevents that.

At Aegis Softtech, our data warehouse services combine strategy and engineering, from data warehouse modernization to data warehouse migration across Snowflake, BigQuery, Redshift, and Synapse.

Whatever you need, our team brings 20+ years of experience to help you build it right the first time—and scale without friction.

H2: FAQs

1. What are data warehouse design best practices for cloud migration?

Adopt a layered architecture with raw, staging, and curated zones. Decouple storage from compute for elastic scalability and cost control.

2. Should I use a star schema or a Snowflake schema in my data warehouse?

A star schema is preferred for most analytical workloads. It minimizes joins and accelerates BI query performance.

3. What is the difference between ETL and ELT in data warehousing?

ETL transforms data before loading it into the warehouse. ELT loads raw data first, then transforms using the warehouse’s compute engine.

4. What is the Kimball vs. Inmon approach to data warehouse design?

Kimball uses bottom-up dimensional modeling with star schemas. Inmon uses top-down enterprise normalization with a central repository feeding data marts.

5. How do slowly changing dimensions affect warehouse design?

SCDs track historical changes in dimension attributes. SCD Type 2 preserves full history through versioned rows with effective dates.

6. How does partitioning improve data warehouse query performance?

Partitioning divides large tables into segments by date or key. Query engines scan only relevant partitions, reducing cost and latency.

7. What is a conformed dimension, and why does it matter?

A conformed dimension is a shared, standardized dimension table. It ensures consistent metrics across multiple fact tables and business reports.