Using Spark with Cassandra through the DataStax Spark-Cassandra APIs

This tutorial explains how to add the connector as a Maven dependency to a Spark application and then retrieve and process Cassandra data with the Spark Cassandra DataStax API.

Spark is one of the most popular parallel computing frameworks in Big Data development, and Cassandra is one of the best-known distributed NoSQL databases. Integrating the two makes perfect sense when we want to analyze Big Data stored in Cassandra. We can load Cassandra data into Spark, perform complex operations that are not possible in Cassandra itself, and then save the processed result back to Cassandra or to some other output sink.

In this blog, we will learn about reading, processing, converting and writing Cassandra rows in Spark using the Cassandra-Spark DataStax APIs. This library lets you create Spark RDDs from Cassandra tables, write Spark RDDs to Cassandra tables, and execute arbitrary CQL queries in your Spark applications.

Once we create Spark RDDs or DataFrames from Cassandra tables, we can perform any analytics operation on top of them. To use the Cassandra-Spark DataStax APIs, add the Maven dependency below to your Spark application.

Maven Dependency
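
The connector is published under the com.datastax.spark group as spark-cassandra-connector. A minimal sketch of the dependency entry follows; the Scala suffix (_2.11) and the version (2.4.3) are assumptions and should be chosen to match the Spark and Scala versions of your own build.

```xml
<!-- Assumed coordinates: adjust the Scala suffix and version to your Spark build -->
<dependency>
    <groupId>com.datastax.spark</groupId>
    <artifactId>spark-cassandra-connector_2.11</artifactId>
    <version>2.4.3</version>
</dependency>
```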

Reading data from Cassandra

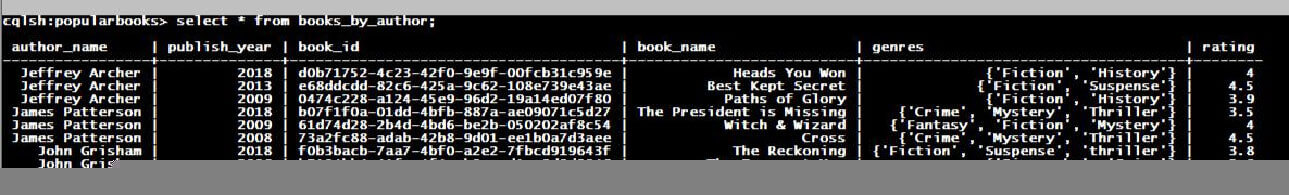

We will work with the Cassandra table “books_by_author” in the popularBooks keyspace, which holds details about books written by each author.

Cassandra Table

Problem Challenge

Retrieve the author_name, book_name and rating of the books published after 2008 by the author “James Patterson” using the Spark Cassandra DataStax API.

CQL: SELECT author_name, book_name, rating FROM books_by_author WHERE author_name = 'James Patterson' AND publish_year > 2008;

Books Published After 2008 By James Patterson
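
The query above can be sketched with the connector's RDD API roughly as follows. This is a minimal sketch, not the original listing: the local master, the connection host, and the object name ReadBooks are assumptions, and the where clause only works server-side if author_name is the partition key and publish_year is a clustering column.

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

object ReadBooks {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("ReadBooks")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    // Note: a keyspace created without quotes in CQL is stored in lower case,
    // so the name here may need to be "popularbooks".
    val books = sc.cassandraTable("popularBooks", "books_by_author")
      .select("author_name", "book_name", "rating")          // column pruning in Cassandra
      .where("author_name = ? AND publish_year > ?", "James Patterson", 2008)

    // Each element is a CassandraRow; print a small result set on the driver.
    books.collect().foreach(println)

    sc.stop()
  }
}
```

Both select and where are pushed down to Cassandra, so only the matching columns and rows travel to Spark.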

Processing Cassandra Data

Retrieve all books with genre “crime”

Output format should be Author name: Book Name (publish year) [rating]

Example: James Patterson:Cross(2018)[3.8]

Books with Genre “Crime”
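
One way to produce this output is to filter on the Spark side and format each row, as in the sketch below. It assumes that genre is a regular text column (so it cannot be used in a where clause without an index) and that rating can be read as a float; the object name CrimeBooks and the helper formatBook are hypothetical, and the spacing follows the example line above.

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

object CrimeBooks {
  // Hypothetical helper: builds "Author:Book(year)[rating]" as in the example line.
  def formatBook(author: String, book: String, year: Int, rating: Float): String =
    s"${author}:${book}(${year})[${rating}]"

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("CrimeBooks")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    val crimeBooks = sc.cassandraTable("popularBooks", "books_by_author")
      // Filter in Spark; getStringOption guards against rows with a null genre.
      .filter(row => row.getStringOption("genre").exists(_.equalsIgnoreCase("crime")))
      .map(row => formatBook(
        row.getString("author_name"),
        row.getString("book_name"),
        row.getInt("publish_year"),
        row.getFloat("rating")))

    crimeBooks.collect().foreach(println)
    sc.stop()
  }
}
```

Because the filter runs in Spark, the whole table is scanned; on a large table a secondary index or a table keyed by genre would be a better fit.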

Converting Cassandra Data

When reading data from Cassandra, we can convert each row either into an instance of a case class or into a tuple. In the first example, a case class Book holds author_name, book_name, publish_year and rating, and one instance of it is created for each Cassandra row.

Cassandra Rows to Object
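
A minimal sketch of the case class mapping, assuming the column types are text, text, int and float. The connector's default column mapping matches snake_case columns such as author_name to camelCase fields such as authorName; the object name RowsToObjects and the connection settings are assumptions.

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

// Defined at top level (not inside a method) so the connector can map rows onto it.
case class Book(authorName: String, bookName: String, publishYear: Int, rating: Float)

object RowsToObjects {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("RowsToObjects")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    // The type parameter asks the connector to build a Book per row.
    val books = sc.cassandraTable[Book]("popularBooks", "books_by_author")
      .select("author_name", "book_name", "publish_year", "rating")

    books.take(10).foreach(println)
    sc.stop()
  }
}
```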

Cassandra Rows to Tuples: Method1
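
The first tuple approach passes a tuple type to cassandraTable, so that the selected columns are mapped onto the tuple by position. The sketch below assumes the same column types as before; the object name and connection settings are assumptions.

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

object RowsToTuples {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("RowsToTuples")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    // Tuple elements follow the order of the selected columns.
    val tuples = sc.cassandraTable[(String, String, Int)]("popularBooks", "books_by_author")
      .select("author_name", "book_name", "publish_year")

    tuples.take(10).foreach { case (author, book, year) =>
      println(s"$author wrote $book in $year")
    }
    sc.stop()
  }
}
```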

Cassandra Rows to Tuples: using As
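
The second approach reads generic rows and converts them with the as method, which takes a function whose parameter types tell the connector how to convert each selected column. Again a sketch under the same assumptions about column types and connection settings:

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

object RowsToTuplesUsingAs {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("RowsToTuplesUsingAs")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    val tuples = sc.cassandraTable("popularBooks", "books_by_author")
      .select("author_name", "book_name", "publish_year")
      // Explicit parameter types are needed so the right converters are picked.
      .as((author: String, book: String, year: Int) => (author, book, year))

    tuples.take(10).foreach(println)
    sc.stop()
  }
}
```

The function passed to as can return any type, so the same call can also build a case class or a formatted string directly.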

Saving data back to Cassandra

Suppose we have a new table, “latest_books”, in which we want to save some processed output.

Saving data to Latest_Books
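
Writing an RDD back is done with saveToCassandra. The sketch below is illustrative only: it assumes latest_books already exists with the columns author_name, book_name, publish_year and rating, and the cut-off year 2015 is a hypothetical choice of "processed output", not something taken from the original listing.

```scala
import com.datastax.spark.connector._
import org.apache.spark.{SparkConf, SparkContext}

// Top-level case class, mapped to snake_case columns by the connector.
case class LatestBook(authorName: String, bookName: String, publishYear: Int, rating: Float)

object SaveLatestBooks {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("SaveLatestBooks")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)

    val latest = sc.cassandraTable[LatestBook]("popularBooks", "books_by_author")
      .select("author_name", "book_name", "publish_year", "rating")
      .filter(_.publishYear > 2015)                         // hypothetical cut-off year

    // The target table must already exist with these columns (assumed schema).
    latest.saveToCassandra("popularBooks", "latest_books",
      SomeColumns("author_name", "book_name", "publish_year", "rating"))

    sc.stop()
  }
}
```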

Latest_Books

Spark Dataframe with Cassandra

We can use the Spark sqlContext read and write methods to create DataFrames from Cassandra tables and to save DataFrames back to Cassandra.

Creating Dataframe from Cassandra

Saving Dataframe to Cassandra
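
Both DataFrame directions can be sketched together using the org.apache.spark.sql.cassandra data source. As before, the connection settings, the filter, and the schema of latest_books are assumptions rather than the original listing.

```scala
import org.apache.spark.sql.{SQLContext, SaveMode}
import org.apache.spark.{SparkConf, SparkContext}

object BooksDataFrame {
  def main(args: Array[String]): Unit = {
    val conf = new SparkConf()
      .setAppName("BooksDataFrame")
      .setMaster("local[*]")                                // assumption: local test run
      .set("spark.cassandra.connection.host", "127.0.0.1") // assumption: Cassandra on localhost
    val sc = new SparkContext(conf)
    val sqlContext = new SQLContext(sc)

    // Create a DataFrame from a Cassandra table.
    val books = sqlContext.read
      .format("org.apache.spark.sql.cassandra")
      .options(Map("keyspace" -> "popularBooks", "table" -> "books_by_author"))
      .load()

    val recent = books
      .filter(books("publish_year") > 2008)
      .select("author_name", "book_name", "publish_year", "rating")

    // Save the DataFrame; Append is needed because the target table already exists.
    recent.write
      .format("org.apache.spark.sql.cassandra")
      .options(Map("keyspace" -> "popularBooks", "table" -> "latest_books"))
      .mode(SaveMode.Append)
      .save()

    sc.stop()
  }
}
```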

Apache Cassandra is a leading open-source distributed database capable of remarkable scale, but its data model requires some planning to perform well. Spark is a distributed computation framework optimized for in-memory work and heavily influenced by ideas from functional programming languages.

Spark with Cassandra makes a powerful open-source Big Data analytics solution. It is what a Cassandra cluster needs to deliver real-time, ad hoc querying of operational data at scale. This article has shown how to fetch the author, rating and book name using the Spark Cassandra DataStax API.