Your organization will benefit from a modern data warehouse that acts as the single source of truth for advanced analytics and AI initiatives.

However, implementing one successfully is more than merely selecting a platform. It demands clear business alignment, cost governance, architectural rigor, and a reliable execution partner who understands enterprise-scale complexity.

Here’s a walkthrough of what data warehouse implementation involves, the key steps we follow at Aegis Softtech, its components, challenges, and best practices.

What is Data Warehouse Implementation?

Data warehouse (DWH) implementation is a structured process of designing, building, deploying, and operationalizing a centralized data platform. The core purpose of this platform is to synthesize data from various sources into a stable environment.

It promotes decision enablement while supporting BI, regulatory needs, advanced analytics, and operational reporting.

DWH implementation can do the following for your enterprise:

- Translate business goals into analytical requirements

- Design scalable and extensible data models

- Build reliable ETL or ELT pipelines

- Select and implement cloud or hybrid architectures

- Embed governance and quality controls

- Enable downstream analytics and AI use cases

Effective implementation often begins with steadfast data warehouse consulting, where architectural choices, platform selection, tool picking, and cost models are evaluated before development starts.

Steps for Successful Data Warehouse Implementation

Implementing a data warehouse is a multi-phase initiative. A successful implementation follows a clearly defined lifecycle, where each step builds on the previous one. Skipping foundational activities often results in cost overruns and low adoption.

Here are the key data warehouse implementation steps our DWH developers at Aegis Softtech follow for your project:

1. Business & Analytics Requirement Discovery

The discovery phase documents the foundation for everything that follows, highlighting how your business will use the data. This is where our experienced data warehouse consulting experts help translate business questions into measurable analytics requirements.

Effective requirement discovery includes identifying:

- Operational and strategic decisions that require analytics

- KPIs and metrics used by leadership

- Reporting frequency and consumption patterns

- Stakeholder ownership and accountability for data domains

Rushing this step may render the DWH technically sound but also irrelevant to your business needs. We suggest investing time here as it yields higher adoption and faster ROI.

2. Source System Assessment

Next, after clearing your business needs, we evaluate the source systems. After all, operating with uniform data landscapes is a rare occurrence.

The source system assessment helps us examine:

- Source system types (CRM, ERP, operational databases, or SaaS)

- Data volume, velocity, variety, and update frequency

- Data quality issues, such as missing values or duplication

- Ownership boundaries and integration complexity

With our thorough assessment, you benefit from reduced surprises during integration. It also offers realistic timelines and costs.

3. Data Modeling & Schema Design

Our data modeling tactics help translate your business concepts into analytical structures that impact performance and scalability.

Key things we offer insights on:

- Choosing the right mode: dimensional, normalized, or hybrid

- Designing schemas suitable for reporting and analytics

A well-designed data model simplifies analytics for routine reporting needs.

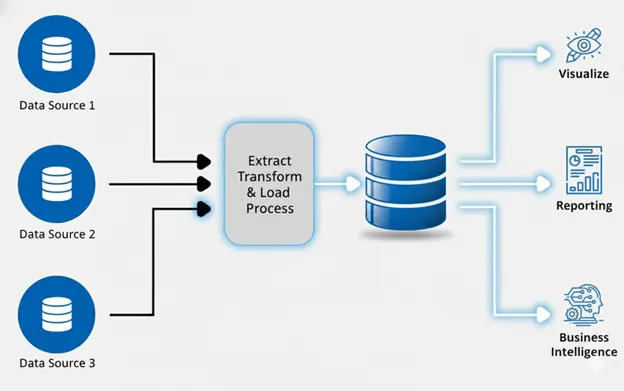

4. ETL/ELT Pipeline Design

ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) pipelines are responsible for sourcing, moving, validating, and transforming your data. Modern cloud data warehouse implementation encourages the use of ELT to push transformations into the warehouse layer.

Pipeline design focuses on:

- Reliability and fault tolerance

- Scheduling and dependency management

- Data validation and transformation logic

- Monitoring and error handling

We ensure pipelines are designed optimally to avoid operational blockages and long-term maintenance efforts.

5. Development & Integration

We turn your architectural plans into working systems during the development phase. We build ingestion pipelines, configure environments, integrate BI tools, and implement transformations.

Our experts follow iterative delivery models to deliver early dashboards or analytics. It validates assumptions and gathers feedback before full rollout.

6. Testing & Validation

Our User Acceptance Testing (UAT) ensures that your warehouse delivers accurate and performant data.

Validation typically covers:

- Data reconciliation with source systems

- Security and access control verification

- Performance testing under peak loads

- Aligning metrics and business definitions

It establishes trust among business users.

7. Deployment & Adoption

The final step, marking the transition from project to platform, is deployment. Adoption, however, does not happen automatically.

Successful adoption entails:

- Clear documentation and metric definitions

- Feedback loops for continuous improvement

You benefit greatly from our structured data warehouse services via proven accelerators and reduced delivery risk across these stages.

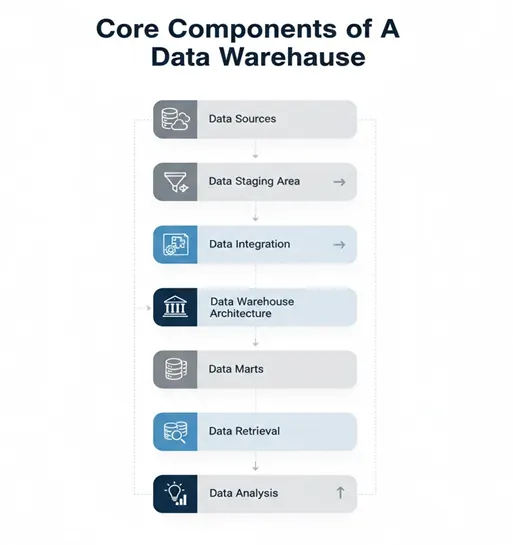

Core Components of a Data Warehouse

Let’s also walk you through the components of a data warehouse. It will help you connect the dots through the implementation process better as you learn how all the components come together to deliver the desired performance.

Data Sources

Data sources include operational systems, external datasets, streaming sources, and third-party platforms. To design reliable ingestion pipelines, understanding source behavior is imperative.

Data Staging Area

The staging area temporarily holds raw data. It supports validation, auditing, and deduplication before transforming or loading the data into analytical structures.

Data Integration

Strong integration processes ensure consistency across analytics and reports. It does so by handling transformations and standardization.

Data Warehouse Architecture

The warehouse stores historical data in a structured format, optimized for analytical queries. The choice of your data warehouse architecture impacts scalability, growth, performance, and cost.

Data Marts

Data marts offer views specific to your independent departments, such as finance or sales. These help improve usability without duplicating logic.

Data Storage

Storage refers to the technologies and methods used to store huge amounts in the warehouse. These strategies balance performance and cost.

Data Retrieval

It’s the process of accessing and querying data from engines, APIs, and semantic layers while abstracting complexity from your end users.

Data Analysis

BI tools and analytics platforms consume warehouse data to generate insights and recommendations.

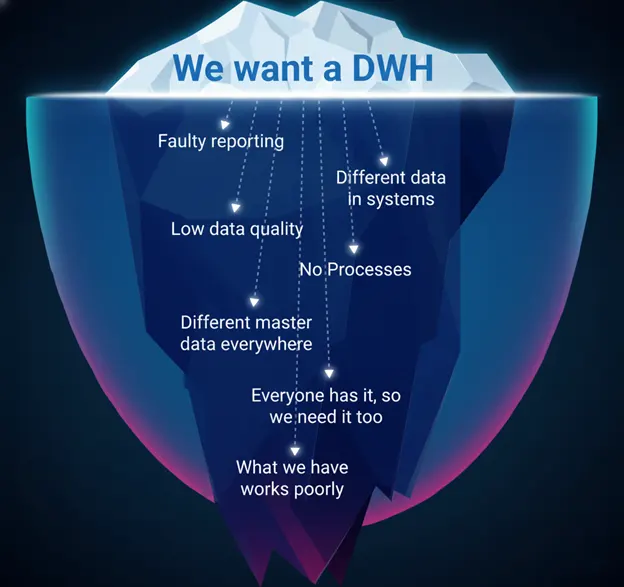

Common Challenges in Data Warehouse Implementation

When you sought data warehouse implementation services, a successful plan also poses obstacles in the process. We prepare your team and offer support in dealing with technical and organizational challenges.

The primary step to ensure your implementation is successful is knowing these challenges ahead of time. Read on.

| CHALLENGE | ROOT CAUSE | BUSINESS IMPACT | MITIGATION |

|---|---|---|---|

| Data quality and trust issues | Inconsistent source data and missing ownership. | It leads to low user adoption and loss of executive confidence. | Mitigate these challenges by implementing data quality checks and standardizing metric definitions early. |

| Unclear business requirements | Inadequate stakeholder alignment during discovery. | Results in extended timelines and limited ROI. | Mitigate them by conducting requirement discovery tied to your decisions and KPIs. |

| Performance blockages | Poor data modeling and a lack of workload optimization. | The outcome is user frustration and a negative impact on trust in analytics. | Tackle these by optimizing schemas and monitoring query performance. |

| Security and compliance gaps | Scanty access controls and data classification. | Results in regulatory risk and potential reputational damage. | Fight it by enforcing role-based access and auditing by design. |

| Data latency issues | Ill-designed ingestion and heavy pipelines. | It leads to missed opportunities and delayed responses. | Mitigate these challenges by designing pipelines according to latency requirements. |

| Lack of analytics and AI readiness | Stems from data models optimized only for reporting. | Offers on limited future value. | Ensure the models and pipelines are designed with analytics and AI use cases in focus. |

Data Warehouse Implementation Best Practices

We follow established data warehouse implementation best practices for streamlined processes and robust outcomes. These practices are a result of nearly two decades of experience in working with data architecture and technological advancements.

These practices are indispensable operating principles towards success. Here are our top recommended ones:

1. Design for Business Decisions

Decisions and outcomes form the basis for most high-performing data warehouses. In short, you must prioritize executive KPIs and operational metrics before designing schemas or pipelines.

When your data models reflect how your business measures success, the outcome often showcases an increase in analytics adoption. In turn, you witness a decrease in dependency on data engineering teams.

How You Benefit

- Better decision-making power

- Higher self-service adoption

- Lesser reporting rework

2. Incremental and MVP-Driven Delivery

Large data warehouse projects often result in delayed value and higher risks. Consequently, most successful organizations adopt an MVP-driven approach, delivering analytics for high-priority use cases early and expanding thereon.

Each phase operates on clear success criteria, such as reduced reporting time and improved data accuracy, in addition to technical completion.

How You Benefit

- Faster ROI

- Lower delivery risk

- Stronger stakeholder confidence

3. Embed Data Quality and Governance into the Architecture

The wisest decision while setting up your DWH is to embed data quality, lineage, and access controls into your models and pipelines from the outset. Redeveloping governance later is a costly endeavor. The most common of these include standardized definitions, audit trails, role-based access, and validation checks aligned with internal policies.

How You Benefit

- Trusted data

- Long-term platform stability

4. Optimize Cost and Performance Continuously

Cloud data warehouses offer elasticity, but when left unmanaged, they can lead to budget overruns. Best-performing teams actively monitor workloads and query patterns to align cost with business value. Cost optimization is an imperative operational discipline.

How You Benefit

- Predictable spend

- Sustained executive support

- Scalable growth

5. Design for Analytics and AI Evolution

Data warehousing supports modern reporting needs while aligning with tomorrow’s advanced analytics. This requires flexible models and integration readiness, rather than warehouses designed solely for quick static reporting.

How You Benefit

- Sustainable analytics foundation

- Higher long-term ROI

Tools & Technologies for Data Warehouse Implementation

Choosing suitable tools and technologies is an architectural decision that impacts how well your data warehouse performs with respect to the evolving business demands.

The easiest way to evaluate tools is their alignment with real-world use cases, operational stability, compatibility with existing cloud and data ecosystems, and governance support.

The Cloud Data Warehouse industry is projected to grow at a CAGR of 17.55% during the forecast period 2025 - 2035, going from 36.32 USD billion to 182.99 USD billion.

Read on to know about the significant set of tools and technologies we use (as required) during data warehouse implementation.

1. Data Warehouse Platforms

The first and one of the most crucial decisions is finalizing which data warehouse platform works best for your requirements. A DWH platform is where your structured and analytical data is stored.

In modern implementations, these platforms are often cloud-based and appropriate for high data volumes and multiple concurrent users.

Leading data warehouse platforms are:

Amazon Redshift

Amazon Redshift is a managed cloud data warehouse (CDWH) service, suitable for organizations that operate extensively within the AWS ecosystem. It extensively supports structured data analytics.

Google BigQuery

A fully serverless DWH that allows you to run analytical queries without managing infrastructure. BigQuery is often used for ad hoc analytics and large-scale data analysis.

Snowflake

Snowflake is a cloud-native data warehouse. Its separate storage and compute layers independently scale workloads and run multiple analytics use cases without resource contention.

Microsoft Azure Synapse Analytics

Azure Synapse combines data warehousing and big data analytics capabilities to support SQL-based analytics and Apache Spark workloads.

2. Data Orchestration and Workflow Management

Orchestration tools help manage dependencies, scheduling, retries, and monitoring of data pipelines growing in number and complexity. These tools ensure reliable data flow from source systems to the warehouse, helping enterprises maintain operational stability in data pipeline execution.

Commonly used orchestration tools include:

Apache Airflow

It is an open-source workflow orchestration platform suitable for managing complex data pipelines and dependencies.

Prefect

Designed for modern data stacks, Prefect is an orchestration tool that prioritizes observability and flexibility in complex workflows.

Azure Data Factory

Azure Data Factory offers built-in orchestration capabilities within the Azure ecosystem for data transformation and movement workflows.

AWS Step Functions

This tool helps coordinate workflows across leading AWS services, including data processing and analytics tasks.

3. ETL/ELT Tools

ELT and ETL tools support data extraction from various source systems, apply transformations, and load it into the DWH. ELT patterns are now predominant in modern implementations, enabling transformations to run within the warehouse for improved scalability.

Tool selection typically depends on multiple factors, such as source diversity and operational requirements.

Widely used ETL/ELT tools include:

Fivetran

It is a managed ELT platform that simplifies data ingestion from a myriad of SaaS and database sources.

Matillion

An ELT tool for visual pipeline development for companies that prefer CDWHs.

Talend

A comprehensive data integration platform sustaining hybrid environments and complex transformation logic.

Informatica

Informatica is an enterprise-grade data integration platform, preferred by large organizations.

4. Data Modeling Tools

Data modeling and semantic layer tools prove useful for consistently defining analytical structures and relationships. By doing so, they decrease ambiguity in reporting while supporting self-service analytics across your business teams.

These tools play a huge role in ensuring that analytics outputs remain consistent even as data usage scales.

Common data modeling tools include:

dbt (data build tool)

dbt is widely used for transforming data within CDWHs using modular, version-controlled models.

SAP PowerDesigner

SAP PowerDesigner supports conceptual and physical data modeling across all leading platforms.

LookML (Looker)

LookML provides a semantic layer that standardizes business metrics for reporting and analytics.

5. BI and Visualization Tools

Business intelligence and visualization tools enable you to explore data, create dashboards, generate reports, and study data. While their effectiveness depends on usability and alignment with workflows, standardizing one or two platforms reduces complexity.

Widely adopted BI tools include:

Tableau

Tableau is most popular among organizations for its interactive visual analytics and broad enterprise adoption.

Microsoft Power BI

Power BI is deeply integrated with the Microsoft ecosystem, making it the best choice for those already invested in it. The tool is heavily used for enterprise reporting.

Looker

Looker is a leading visualization tool that combines BI with a semantic modeling layer, offering you consistent analytics.

SAP Analytics Cloud

It integrates analytics with key predictive and planning capabilities within SAP environments.

6. Data Quality and Governance Tools

Data quality and governance tools will offer you visibility into data ownership and usage within the organization. These help maintain confidence in your data and build trust while supporting regulatory and internal controls.

Commonly used governance and quality tools include:

Collibra

It is a data governance platform that works best for policy management and lineage.

Microsoft Purview

Purview by Microsoft offers data governance and cataloging for Azure-based environments.

AWS Lake Formation

Lake Formation helps manage access control and governance for data lakes on AWS.

From Implementation to Insight: Partner with Aegis Softtech

The success of a data warehouse implementation is measured by how effectively it supports your decision-making prowess and scales with your business. The real win lies in aligning data warehouse architecture with your evolving analytical business needs without introducing unnecessary complexity or cost.

And this is exactly why you should hire data warehouse developers from Aegis Softtech.

At Aegis Softtech, we take a pragmatic approach to implementing DWHs. Our entire focus lies on understanding how your data will be used. It helps us design architectures that can grow with your demand and embed governance and quality into the foundation.

We offer unparalleled data warehousing services, ensuring your DWH remains reliable, even as reporting, analytics, and AI use cases expand over time.

Our data warehousing experts assess your existing data landscape and suggest the right implementation approach, delivering measurable business value.

Latest Insights

Frequently Asked Questions

The four key components of a data warehouse are:

1. A Central Database for storage

2. ETL tools for data integration

3. A Metadata Repository for data context

4. Data Analysis Tools for users to query and visualize information

The main steps to implementing a DWH in SQL Server are:

1. Define business requirements and objectives

2. Design the data warehouse architecture and model

3. Implement data integration (ETL/ELT pipelines)

4. Optimize performance

5. Test, deploy, and maintain

The main types of DWHs are Enterprise Data Warehouse (EDW), Data Marts, Operational Data Stores (ODS), and Cloud Data Warehouse (CDWH).

ETL, or Extract, Transform, Load, is an important process in data warehousing for moving raw data from various sources, cleaning and transforming it, and then loading it into a central repository for reporting and BI. It consolidates disparate data into a usable format for better decision-making.

While there is no single best database for a DWH, there are a few generally chosen for scalability and performance. The top databases include Snowflake, Google BigQuery, Azure Synapse Analytics, and Amazon Redshift.