Ever thought about what truly makes a company data-savvy? It’s not the ability to collect data but how that data is used. The numbers are clear. According to a report by The Business Research Company, the global data warehousing market is expected to reach approximately $69.64 billion by 2029. In this fast-expanding space, Snowflake has emerged as a leader, capturing a 21.08% market share among data warehousing solutions.

But what makes Snowflake a favorite among forward-thinking businesses? It’s all in the Snowflake architecture.

Unlike the rigid, complex systems of its predecessors, Snowflake has a unique, cloud-native design. Businesses can thus scale effortlessly, move faster, and derive deeper insights. Are you intrigued by this platform and what it can do for you?

Join us to understand the crucial layers of the Snowflake data warehouse architecture, along with the right steps to adopt and implement it.

Snowflake Architecture: Storage, Compute, and Cloud Services

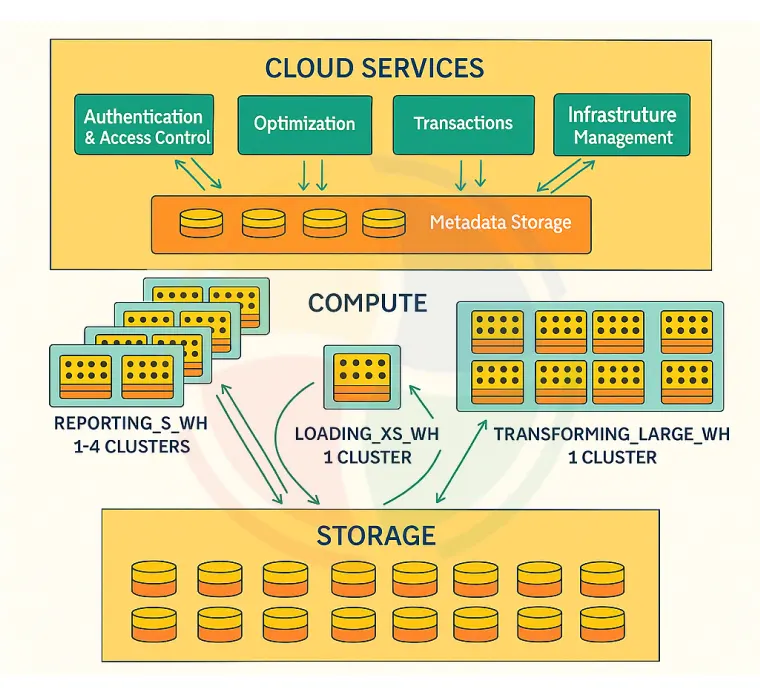

Snowflake’s rise is rooted in its cloud-native architecture. Its design separates storage from compute, with a cloud services layer coordinating the two. This decoupled design offers more elasticity, ease of use, and better performance.

As per a report by Markets and Markets, the global Cloud Data Warehouse market is expected to reach USD 12.9 billion by 2026, growing at a CAGR of 22.3% between 2021 and 2026.

Here is a detailed explanation of the three distinctive layers of this platform:

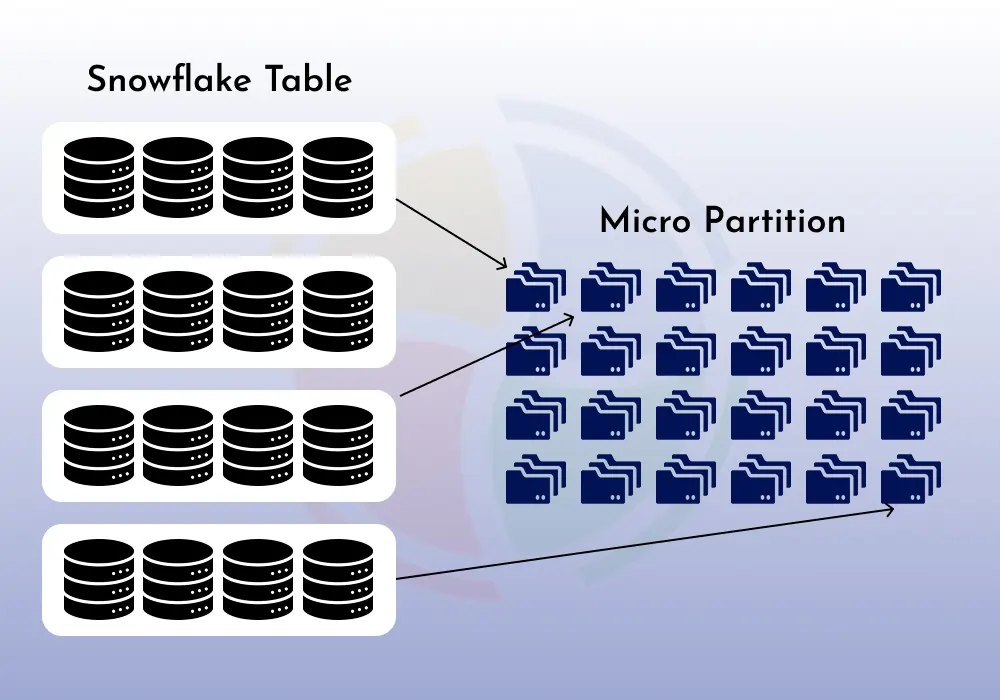

1. Database Storage Layer

Snowflake keeps all data in a centralized repository built on scalable cloud object storage. The storage comes from the cloud provider that hosts your account: AWS (S3), Google Cloud (Cloud Storage), or Microsoft Azure (Blob Storage).

All data loaded into Snowflake is automatically reorganized into an internal, compressed, columnar format and divided into micro-partitions. Micro-partitions are the core storage unit. Each one holds roughly 50 MB to 500 MB of uncompressed data and is stored in compressed form.

Snowflake manages every aspect of how this data is stored, including physical organization, structure, file sizing, compression, metadata, and statistics. The stored data objects are not directly visible or accessible to customers; they can be reached only through SQL queries run in Snowflake.

Two of the key features of this layer are near-unlimited scalability and high availability.

Snowflake’s storage capacity expands with your organization’s growing data, without manual intervention or downtime.

Additionally, it automatically replicates and distributes your data for better fault tolerance and durability. Since it is an independent layer, you only pay for what you use.

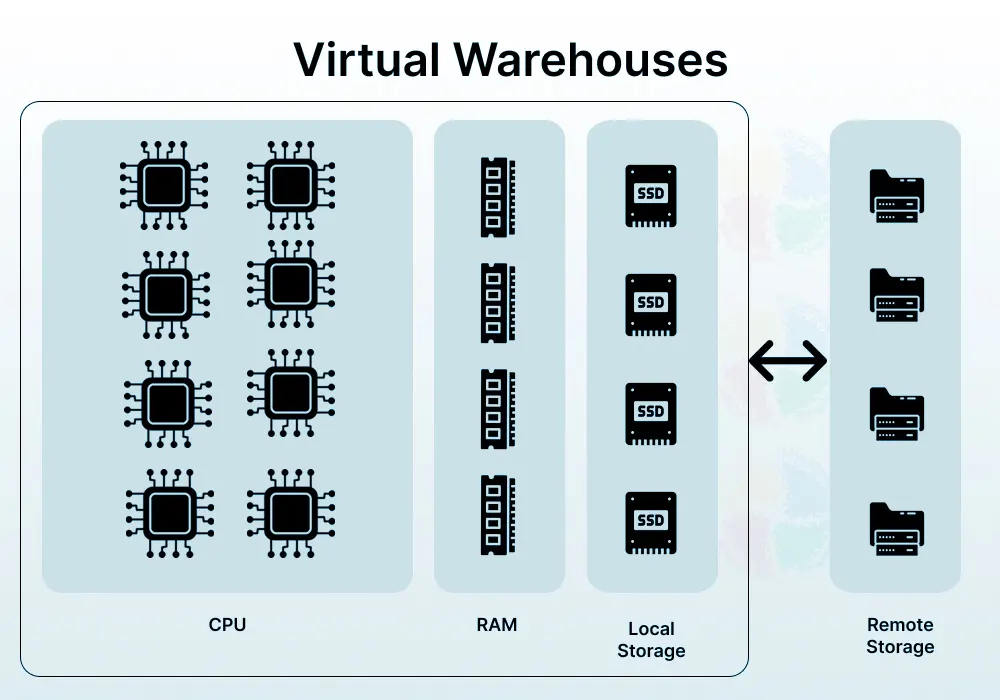

2. Compute/Query Processing Layer

The compute layer is where query processing happens, through virtual warehouses. A virtual warehouse (VWH) is a scalable, independent Massively Parallel Processing (MPP) compute cluster. Every VWH comprises one or more compute nodes that Snowflake provisions from the underlying infrastructure of the cloud provider; larger warehouse sizes get more compute.

These virtual warehouses are isolated and operate independently. They do not share their compute resources with another VWH, despite sharing a Snowflake account. Isolation is a big asset because it ensures predictable and consistent performance for varying workloads and concurrent users. It eliminates the common problem of resource contention.

Compute resources are highly flexible: VWHs can be started, resized, suspended, and resumed as necessary. None of these actions affects the underlying data storage or the queries running on other warehouses.

This dynamic scalability lets you match compute capacity to workload demand and balance performance against cost.

Again, you pay only for the compute resources you actively consume. The price depends on actual virtual warehouse usage, which is measured in Snowflake credits and billed per second, with a 60-second minimum each time a warehouse starts or resumes.
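To make the billing model concrete, here is a minimal Python sketch of how credits accrue for a single warehouse run. It assumes standard warehouses, where credits per hour double with each size step, and per-second billing with a 60-second minimum per start or resume. The `price_per_credit` argument is a hypothetical input, since the actual price depends on your edition, region, and contract.

```python
# Credits per hour for standard warehouses; each size step doubles the rate.
CREDITS_PER_HOUR = {
    "XSMALL": 1,
    "SMALL": 2,
    "MEDIUM": 4,
    "LARGE": 8,
    "XLARGE": 16,
}

# Minimum billed time each time a warehouse starts or resumes.
MIN_BILLED_SECONDS = 60


def credits_used(size: str, running_seconds: float) -> float:
    """Credits consumed by one continuous run of a warehouse."""
    billed_seconds = max(running_seconds, MIN_BILLED_SECONDS)
    return CREDITS_PER_HOUR[size] * billed_seconds / 3600


def cost_usd(size: str, running_seconds: float, price_per_credit: float) -> float:
    """Cost of one run; price_per_credit is an assumed, account-specific value."""
    return credits_used(size, running_seconds) * price_per_credit
```

For example, `credits_used("MEDIUM", 900)` covers a 15-minute run on a Medium warehouse, while a 10-second run on any size is still billed as 60 seconds.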

Leverage Snowflake’s full potential to optimize your data architecture. Explore our Snowflake consulting services to design a robust and scalable solution tailored to your business.

3. Cloud Services Layer

The cloud services layer is the brain of the architecture and handles central orchestration. It comprises stateless services that manage and coordinate all activities across the platform, and it provides several functions essential to an efficient, smooth-running data warehouse.

All virtual warehouses and users within a Snowflake account share this layer. Sharing does not reduce its resilience or its ability to scale to large numbers of concurrent requests. It is also inexpensive for typical workloads: cloud services usage is billed only when daily cloud services consumption exceeds 10% of that day’s virtual warehouse consumption.
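The 10% rule is easier to see as arithmetic. The sketch below is an illustrative calculation only, not Snowflake’s billing code; the function name and inputs are assumptions. It treats cloud services credits as billable only for the portion above 10% of the same day’s warehouse credits.

```python
def billed_cloud_services(warehouse_credits: float, cloud_services_credits: float) -> float:
    """Cloud services credits billed for one day.

    Only the portion of cloud services usage above 10% of that day's
    warehouse usage is charged; anything at or below the threshold is free.
    """
    threshold = 0.10 * warehouse_credits
    return max(0.0, cloud_services_credits - threshold)
```

With 100 warehouse credits in a day, 8 cloud services credits would cost nothing extra, while 14 would be billed for the 4 credits above the threshold.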

The layer manages key services, like:

Infrastructure Management

It oversees the fundamental cloud infrastructure and resource allocation.

Query Parsing & Optimization

It analyzes incoming SQL queries, uses metadata for query pruning, and generates execution plans.

Authentication & Security

It manages authentication by handling user logins, enforcing access control, and managing security policies.

Result Caching

Query results are cached here (for 24 hours by default), so an identical query can be answered from the cache without re-running on a warehouse, provided the underlying data has not changed.

Metadata Management

It maintains in-depth information related to users, system configurations, and data objects (tables, views).

Access Control

Data security and related permissions are checked and enforced at different levels.

Transaction Management

It ensures ACID (Atomicity, Consistency, Isolation, Durability) compliance for data modifications.

Decoupled Snowflake architectural layers result in high performance, independent scaling, and cost optimization.

Snowflake’s Hybrid Architecture: Best of Both Worlds

The Snowflake data architecture is often described as a hybrid of traditional shared-disk and shared-nothing database architecture. Its hybrid nature enables your organization to manage data easily (with the shared-disk model) while remaining scalable and performance-oriented (with the shared-nothing architecture).

Snowflake ranks among the top five cloud data warehouse vendors, a position that owes much to its hybrid architecture.

It is among the most versatile and powerful cloud data warehousing solutions for modern analytics today.

Let’s understand its hybrid nature in depth.

An Overview of Shared-Disk Architecture

The shared-disk architecture is where all compute nodes within a cluster access a single, centralized storage layer. Compute nodes are servers with memory and CPUs. Data management becomes easy since all nodes can write and read the same information.

One of its limitations is performance bottlenecks, which occur when multiple nodes access the shared storage simultaneously, resulting in slower processing and contention. The limited shared disk capacity can also be a hurdle when you try to scale compute resources.

An Overview of Shared-Nothing Architecture

A shared-nothing architecture distributes both compute resources and data across independent nodes, where each node has its own memory, local disk storage, and CPU.

Nodes communicate over a network to process data residing on other nodes. The model scales well, since adding nodes expands both compute power and storage capacity.

Query processing and data management, however, can become more complex, because data distribution and processing must be coordinated across multiple independent units.

Snowflake’s Hybrid Approach: Combining Both Models

After understanding both these approaches individually, let’s get to Snowflake’s hybrid approach and what that means for your organization.

Shared-Disk Aspect (Centralized Storage)

For data storage, Snowflake uses a central data repository in the cloud. All compute nodes can access this single source of truth, which simplifies data management. Snowflake manages the compression, metadata, and structuring of this central storage.

Shared-Nothing Aspect (Independent Compute)

Snowflake uses a shared-nothing approach via its VWHs for query processing. Each VWH is an independent MPP compute cluster with its own dedicated memory, temporary storage, and CPU. Warehouses operate independently and do not share compute resources with one another.

Upon query execution, Snowflake distributes the related data portions from the central storage to the nodes for parallel processing.

Steps to Implement and Adopt Snowflake

A successful data migration to Snowflake calls for a methodical, strategic approach. Done well, it also empowers your users, integrates your existing systems, and ultimately transforms your data landscape.

In the first quarter of its fiscal year 2026 (FY26), Snowflake reported 754 customers from the Forbes Global 2000 list and a net revenue retention rate of 124%.

The following steps help ensure a smooth transition and maximum benefit for your organization.

1. Define Goals

First, identify and state the specific business problems you aim to solve by adopting Snowflake. Start by asking which current needs are met and unmet, and which outcomes you expect. Clear objectives set the direction for your implementation efforts.

2. Assess Data

Study your current data sources and their condition carefully. Identify all important data sources within the organization, which may include databases, spreadsheets, cloud services, and applications. Then analyze the volume, variety, and velocity of your data.

3. Evaluate Fit

Understand how Snowflake’s working process, capabilities, and features align with your specific requirements according to the defined goals and data assessment. Consider factors like potential ROI, cost savings from reduced infrastructure, and improved analytical efficiency.

4. Plan Security

The smooth running of your Snowflake data architecture depends on the security policies and access controls you adopt. Ensure appropriate data governance and protection by defining user roles and permissions.
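As an illustration of defining roles and permissions, the Python sketch below generates the SQL statements for a read-only role. The `CREATE ROLE` and `GRANT` statements follow standard Snowflake syntax, but the helper function and the role, warehouse, database, and schema names are hypothetical. Adapt them to your own security plan before running the statements through a client of your choice.

```python
import re

# Accept only simple unquoted identifiers so names cannot smuggle in extra SQL.
_IDENTIFIER = re.compile(r"^[A-Za-z_][A-Za-z0-9_$]*$")


def _check(name: str) -> str:
    if not _IDENTIFIER.match(name):
        raise ValueError(f"unsafe identifier: {name!r}")
    return name


def read_only_role_sql(role: str, warehouse: str, database: str, schema: str) -> list[str]:
    """Return the statements that set up a role with read-only access to one schema."""
    role, warehouse = _check(role), _check(warehouse)
    fq_schema = f"{_check(database)}.{_check(schema)}"
    return [
        f"CREATE ROLE IF NOT EXISTS {role};",
        f"GRANT USAGE ON WAREHOUSE {warehouse} TO ROLE {role};",
        f"GRANT USAGE ON DATABASE {database} TO ROLE {role};",
        f"GRANT USAGE ON SCHEMA {fq_schema} TO ROLE {role};",
        f"GRANT SELECT ON ALL TABLES IN SCHEMA {fq_schema} TO ROLE {role};",
    ]
```

Calling `read_only_role_sql("analyst", "reporting_wh", "sales", "public")` yields five statements that can be reviewed as part of the security plan before anyone executes them.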

5. Choose the Right Snowflake Edition

Snowflake comes in four editions (Standard, Enterprise, Business Critical, and Virtual Private Snowflake), so pick the one that best suits your organizational needs and budget. You also choose the cloud provider that hosts your account (AWS, Google Cloud, or Azure), based on your performance and compliance requirements.

6. Create Account

Set up a Snowflake account, then configure security settings and network policies as per the security plan.

7. Connect Data

Securely connect your identified data sources with your Snowflake environment via the right data ingestion methods. These could be native connectors, custom integrations, or third-party tools.

8. Load Data

Decide how data will be loaded into the platform. Choose the method that fits your volume and latency needs, such as dedicated data loading tools or the COPY INTO command for bulk loads from staged files.
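For a sense of what a bulk load looks like, this Python sketch assembles a `COPY INTO` statement for CSV files in a named stage. The `FILE_FORMAT` and `ON_ERROR` options are real `COPY INTO` options, while the helper function and the table and stage names are hypothetical. Executing the statement would require a Snowflake client, which is outside this sketch.

```python
# Error-handling modes supported by COPY INTO (SKIP_FILE_<n> variants omitted).
ON_ERROR_MODES = {"CONTINUE", "SKIP_FILE", "ABORT_STATEMENT"}


def copy_into_sql(table: str, stage: str, skip_header: int = 1,
                  on_error: str = "ABORT_STATEMENT") -> str:
    """Build a COPY INTO statement that loads CSV files from a named stage."""
    if on_error not in ON_ERROR_MODES:
        raise ValueError(f"unsupported ON_ERROR mode: {on_error!r}")
    if skip_header < 0:
        raise ValueError("skip_header must be zero or positive")
    return (
        f"COPY INTO {table}\n"
        f"FROM @{stage}\n"
        f"FILE_FORMAT = (TYPE = 'CSV' SKIP_HEADER = {skip_header})\n"
        f"ON_ERROR = '{on_error}';"
    )
```

A call such as `copy_into_sql("orders", "orders_stage", on_error="SKIP_FILE")` produces a statement that skips any file containing bad rows instead of aborting the whole load.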

9. Transform Data

Snowflake’s SQL engine can be used for data cleaning, integration, and shaping within the platform. Tools like dbt are well suited to managing and versioning these transformations.

10. Build Reports

Connect Snowflake with all your existing BI tools and reporting platforms. Rebuilding or migrating existing dashboards and reports can also benefit from Snowflake’s performance and capabilities.

11. Monitor & Optimize

Keep monitoring query performance and system utilization to identify areas for optimization.

Optimizing Snowflake Architecture for Performance

You can optimize Snowflake architecture for performance by adopting key best practices.

Here are five of these you should know:

1. Optimize Query Design

Write efficient SQL: use LIMIT for exploration, select only the columns you need, filter data early in the query, and join on selective, well-clustered columns. Standard Snowflake tables have no traditional indexes, so performance depends on how much data can be pruned rather than on index lookups. Query profile analysis helps identify bottlenecks.

2. Right Virtual Warehouse Size

Size each warehouse for its workload. Monitor query performance, scale up for demanding tasks, and scale down or let the warehouse auto-suspend during idle periods. Running separate warehouses for separate workloads also avoids resource contention and makes costs easier to control.
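One way to apply this is to define a warehouse per workload with auto-suspend enabled. The Python sketch below builds `CREATE WAREHOUSE` statements using real Snowflake parameters (`WAREHOUSE_SIZE`, `AUTO_SUSPEND`, `AUTO_RESUME`, `INITIALLY_SUSPENDED`). The helper function, the warehouse names, and the chosen sizes and timeouts are assumptions for illustration, not recommendations.

```python
VALID_SIZES = {"XSMALL", "SMALL", "MEDIUM", "LARGE", "XLARGE"}


def create_warehouse_sql(name: str, size: str, auto_suspend_seconds: int = 60) -> str:
    """Build a CREATE WAREHOUSE statement that suspends itself when idle."""
    if size not in VALID_SIZES:
        raise ValueError(f"unknown warehouse size: {size!r}")
    return (
        f"CREATE WAREHOUSE IF NOT EXISTS {name} WITH "
        f"WAREHOUSE_SIZE = '{size}' "
        f"AUTO_SUSPEND = {auto_suspend_seconds} "  # seconds of inactivity before suspending
        "AUTO_RESUME = TRUE "                      # wake up when a query arrives
        "INITIALLY_SUSPENDED = TRUE;"              # do not consume credits until first use
    )


# Hypothetical split: a larger warehouse for ETL, a smaller one for BI dashboards.
workloads = {"etl_wh": ("LARGE", 120), "bi_wh": ("SMALL", 60)}
statements = [create_warehouse_sql(n, s, t) for n, (s, t) in workloads.items()]
```

Keeping ETL and BI on separate warehouses means a heavy load job cannot slow down dashboard queries, and each warehouse stops consuming credits shortly after it goes idle.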

3. Create Materialized Views

You can create materialized views for complex, frequently run aggregations or queries. Snowflake pre-computes and stores the results, so subsequent queries return faster. Materialized views require Enterprise Edition or higher.

4. Use Clustering Keys

Define clustering keys on large tables for the columns you filter or group by most often. Clustering keeps rows with similar values in the same micro-partitions, so Snowflake can prune more of them and scan less data, which can significantly improve query performance.
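The effect of clustering on pruning can be illustrated with a small simulation. This is a conceptual Python sketch, not Snowflake’s implementation: it assumes each micro-partition records the minimum and maximum value of a date column, and that a query can skip any partition whose range does not overlap the filter. The class, function, and sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MicroPartition:
    name: str
    min_date: str  # ISO dates compare correctly as plain strings
    max_date: str


def prune(partitions: list[MicroPartition], lo: str, hi: str) -> list[MicroPartition]:
    """Keep only partitions whose [min, max] range overlaps the filter [lo, hi]."""
    return [p for p in partitions if p.max_date >= lo and p.min_date <= hi]


# Well clustered on the date column: each partition covers a narrow range.
clustered = [
    MicroPartition("p1", "2024-01-01", "2024-01-31"),
    MicroPartition("p2", "2024-02-01", "2024-02-29"),
    MicroPartition("p3", "2024-03-01", "2024-03-31"),
]

# Poorly clustered: every partition spans almost the whole quarter.
unclustered = [
    MicroPartition("p1", "2024-01-03", "2024-03-28"),
    MicroPartition("p2", "2024-01-01", "2024-03-31"),
    MicroPartition("p3", "2024-01-10", "2024-03-15"),
]

scanned_clustered = prune(clustered, "2024-02-10", "2024-02-20")
scanned_unclustered = prune(unclustered, "2024-02-10", "2024-02-20")
```

For the same February filter, the clustered layout leaves one partition to scan, while the unclustered layout leaves all three, because every range overlaps the filter.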

5. Monitor & Profile Queries

Use Snowflake’s Query History and Query Profile features to find slow-running queries and inspect their execution plans. They reveal where to optimize, such as full table scans or inefficient joins.

Leveraging Snowflake’s Architecture for Data Success

The Snowflake architecture draws its power from decoupled layers for storage, compute, and cloud services. It is highly scalable and easy to use, removing many limitations of traditional data warehouses.

The goal is not just to store data, but to make it your strategic asset in business growth.

Now that you understand its architecture and how it supports your business, look for Snowflake implementation experts who can build the platform: a partner with the proficiency to design, implement, and optimize the Snowflake architecture around your distinctive business needs.

At Aegis Softtech, we have SnowPro-certified experts with a deep knowledge of its architectural nuances, allowing us to build scalable and cost-efficient data solutions.

Let’s make it happen for you!

Connect with us to leverage reliable Snowflake development services and move toward data-driven excellence!

FAQs

Q1. Is Snowflake better than Databricks?

Both are impressive data platforms that excel in different areas. Snowflake is strong at data warehousing, BI, and SQL analytics. Databricks is well suited to ML workloads, data science, and advanced analytics.

Q2. Is Snowflake an OLAP or OLTP?

It is primarily an OLAP (Online Analytical Processing) platform that also supports a few OLTP (Online Transaction Processing) use cases.

Q3. Is Snowflake an ETL tool?

Snowflake is not itself an ETL (Extract, Transform, Load) tool but a cloud-based data warehouse platform. It does, however, integrate with many ETL tools for data transformation and integration.