Introduction: Scaling Data Workflows with CDE

In the digital age, businesses rely on data more than ever before, and the demand for efficient data workflows is growing along with the volume and complexity of that data. Expertise in data management, processing, and analysis is vital for organizations of every size.

A data workflow is the series of operations that begins with extracting data and continues through transforming and analyzing it. Typical steps include ingestion, cleansing, transformation, and visualization. Each step must be carefully designed and executed to keep the data accurate and up to date.
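
To make these stages concrete, here is a minimal sketch of a data workflow in plain Python, assuming a tiny in-memory list of records in place of a real data source. The function names (`ingest`, `cleanse`, `transform`, `summarize`) are hypothetical and are not part of CDE; in a real deployment each stage would more likely be a Spark job.

```python
from collections import defaultdict

def ingest():
    # Hypothetical source: a real workflow would read files, tables, or streams.
    return [
        {"region": "east", "amount": "120.5"},
        {"region": "west", "amount": "80"},
        {"region": "east", "amount": None},    # incomplete record
        {"region": " West ", "amount": "20"},  # inconsistent formatting
    ]

def cleanse(records):
    # Drop records with missing values and normalize the region field.
    return [
        {"region": r["region"].strip().lower(), "amount": r["amount"]}
        for r in records
        if r["amount"] is not None
    ]

def transform(records):
    # Convert amounts to numbers so downstream analysis can do arithmetic.
    return [{"region": r["region"], "amount": float(r["amount"])} for r in records]

def summarize(records):
    # Aggregate totals per region; a visualization step would consume this.
    totals = defaultdict(float)
    for r in records:
        totals[r["region"]] += r["amount"]
    return dict(totals)

result = summarize(transform(cleanse(ingest())))
print(result)  # {'east': 120.5, 'west': 100.0}
```

Keeping each stage as a separate function mirrors the idea that every step is designed and tested on its own before being chained into the full workflow.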

Efficient data processing is essential. It lets organizations process and analyze data faster, reduce costs, and increase overall productivity. Inefficient data workflows, by contrast, lead to delays, errors, and missed opportunities, which is why organizations continually look for ways to improve their workflows and operate more efficiently.

What Is Cloudera Data Engineering?

Cloudera Data Engineering (CDE) is a service for enterprises that want to scale their data processes, and it is built around virtual clusters. It also offers a range of features for streamlining and accelerating data engineering work.

CDE is built on Apache Spark and Apache Hadoop, two widely used open-source frameworks for distributed data processing. By building on these technologies, CDE lets organizations process large volumes of data in parallel, which makes their data workflows faster and more efficient.
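
Spark's core idea, splitting data into partitions, processing the partitions in parallel, and then combining the partial results, can be illustrated without a cluster. This sketch uses Python's `concurrent.futures` thread pool as a stand-in for Spark executors; it shows the map-and-reduce pattern only and is not how CDE or Spark is actually invoked.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from functools import reduce

def partition(items, n):
    # Split the input into n roughly equal partitions, similar to Spark partitions.
    return [items[i::n] for i in range(n)]

def count_words(lines):
    # "Map" step: each worker counts words in its own partition independently.
    counts = Counter()
    for line in lines:
        counts.update(line.split())
    return counts

def parallel_word_count(lines, workers=4):
    parts = partition(lines, workers)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(count_words, parts))
    # "Reduce" step: merge the partial counts into one result.
    return reduce(lambda a, b: a + b, partials, Counter())

lines = ["spark scales out", "hadoop stores data", "spark processes data"] * 1000
totals = parallel_word_count(lines)
print(totals["spark"], totals["data"])  # 2000 2000
```

Threads keep the example portable, but because of Python's global interpreter lock they do not speed up CPU-bound work; real gains come from a process pool or, at scale, from a distributed engine such as Spark.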

Understanding Virtual Clusters in Scaling Data Workflows

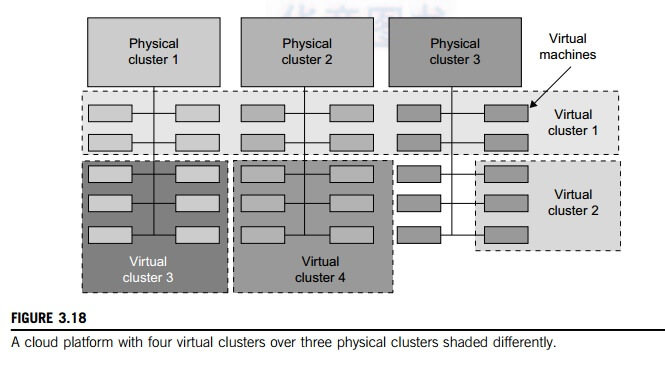

To understand how CDE helps data workflows scale, you first need to understand virtual clusters. Historically, data operations ran on physical clusters: dedicated machines or groups of computers reserved for data processing. However, physical clusters offer limited flexibility and scalability.

Virtual clusters, by contrast, add an abstraction layer that lets multiple logical clusters share a common pool of resources. Because virtual clusters run on the same underlying infrastructure, organizations do not need dedicated hardware for each cluster. Resources are easy to allocate and release, so virtual clusters scale readily, and organizations can adjust their data processing capacity without buying additional equipment.
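
The following sketch models several logical clusters drawing from one shared pool. It is a toy model under simplifying assumptions (capacity measured only in CPU cores, a hypothetical `SharedPool` class, and made-up cluster names) and does not reflect CDE's actual resource manager.

```python
class SharedPool:
    """A single pool of CPU cores shared by several virtual clusters (toy model)."""

    def __init__(self, total_cores):
        self.total_cores = total_cores
        self.allocations = {}  # virtual cluster name -> cores currently held

    @property
    def free_cores(self):
        return self.total_cores - sum(self.allocations.values())

    def allocate(self, cluster, cores):
        # Grant cores only if the shared pool has enough spare capacity.
        if cores > self.free_cores:
            return False
        self.allocations[cluster] = self.allocations.get(cluster, 0) + cores
        return True

    def release(self, cluster, cores):
        # Return cores to the pool so other virtual clusters can reuse them.
        held = self.allocations.get(cluster, 0)
        self.allocations[cluster] = max(0, held - cores)

pool = SharedPool(total_cores=64)
pool.allocate("etl-cluster", 40)
pool.allocate("reporting-cluster", 16)
print(pool.free_cores)                   # 8
print(pool.allocate("ml-cluster", 16))   # False: not enough spare capacity
pool.release("etl-cluster", 24)
print(pool.allocate("ml-cluster", 16))   # True: released cores are reused
```

No hardware is added at any point; capacity moves between logical clusters simply by allocating and releasing from the same pool.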

What is the Distinction between Virtual and Physical Clusters?

There are several significant distinctions between physical and virtual clusters:

Scalability

Because physical clusters have a fixed set of resources, they limit how far data workflows can scale. Virtual clusters, by contrast, let organizations scale up or down on demand, which makes data processing more efficient.

Adaptability

Physical clusters are typically built for specific workloads or tasks, so their resources often sit underused. Virtual clusters, in contrast, can be assigned to different tasks or workloads dynamically, which improves resource utilization and overall efficiency.

Cost

Installing a physical cluster requires substantial investment in infrastructure, facility space, and maintenance. Virtual clusters remove the need for dedicated infrastructure, which lowers costs for organizations.

Isolation

Physical clusters keep separate workloads fully isolated, so one workload has no noticeable effect on another. Virtual clusters share common infrastructure, so if resources are not managed carefully, one workload can degrade the performance of others.
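
One common way to contain the shared-infrastructure risk described above is to cap how much capacity any single virtual cluster can claim. The sketch below is a hypothetical admission check with per-cluster quotas; the `admit` function, quota values, and cluster names are illustrative assumptions, not CDE settings.

```python
def admit(request_cores, cluster, usage, quotas, pool_free):
    """Decide whether a virtual cluster may take more cores from a shared pool.

    usage:  cores each cluster is currently using
    quotas: maximum cores each cluster may use (its isolation boundary)
    """
    within_quota = usage.get(cluster, 0) + request_cores <= quotas[cluster]
    pool_has_room = request_cores <= pool_free
    return within_quota and pool_has_room

quotas = {"etl": 32, "reporting": 16}
usage = {"etl": 30, "reporting": 4}

# A burst from "etl" is refused even though the pool has room,
# so it cannot starve the "reporting" cluster.
print(admit(8, "etl", usage, quotas, pool_free=30))        # False
print(admit(8, "reporting", usage, quotas, pool_free=30))  # True
```

The quota acts as a soft substitute for physical separation: each virtual cluster still shares hardware, but its worst-case impact on its neighbors is bounded.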

Benefits of Virtual Clusters in Cloud Computing

In cloud computing, a virtual cluster is a collection of machines or instances, hosted on a cloud provider's infrastructure, that work together on distributed tasks. These virtual machines can be provisioned and managed through a platform such as Cloudera Data Engineering.

Virtual clusters offer several advantages for scaling data workflows. They are particularly effective when paired with cloud-based data warehousing solutions, which can allocate compute resources dynamically to prevent bottlenecks during heavy reporting cycles.

Maximizing Resource Utilization

Because virtual clusters can scale with demand, organizations can allocate resources more precisely. Better utilization reduces costs and improves overall efficiency.

Elasticity

Virtual clusters can also grow or shrink easily to match workload requirements. This elasticity lets organizations absorb usage spikes without investing in new hardware.

Fault Tolerance

Virtual nodes can be equipped with fault-tolerance mechanisms such as automatic failover and data replication, so data workflows can keep running when hardware or software fails.
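
Automatic failover can be thought of as retrying a task on another healthy node or replica when one fails. The sketch below shows that pattern with hypothetical node names and a simulated outage; real platforms implement failover at the infrastructure and framework level (Spark, for instance, re-runs failed tasks), so this only illustrates the idea.

```python
class NodeFailure(Exception):
    """Raised when a (simulated) node cannot complete a task."""

def run_on(node, task, down_nodes):
    # Simulate a node outage; a healthy node runs the task normally.
    if node in down_nodes:
        raise NodeFailure(f"{node} is unavailable")
    return task(node)

def run_with_failover(task, replicas, down_nodes):
    """Try each replica in turn and return the first successful result."""
    errors = []
    for node in replicas:
        try:
            return run_on(node, task, down_nodes)
        except NodeFailure as exc:
            errors.append(str(exc))  # record the failure, then fail over
    raise RuntimeError("all replicas failed: " + "; ".join(errors))

task = lambda node: f"partition 7 processed on {node}"
print(run_with_failover(task, ["node-a", "node-b", "node-c"], down_nodes={"node-a"}))
# partition 7 processed on node-b
```

Failover only helps if the data the task needs is available on more than one node, which is why replication and failover are usually deployed together.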

Security and Isolation

Virtual clusters provide logical isolation between applications, which helps protect sensitive data. Security measures such as access controls and encryption can further safeguard data in virtual clusters.

Advantages of CDE’s Virtual Cluster Tool

The virtual cluster tool in the Cloudera Data Engineering service offers several advantages for businesses looking to scale their data workflows.

Streamlined Implementation

CDE provides an intuitive interface for deploying and managing virtual clusters, so provisioning and configuring them does not require complex, time-consuming manual steps.

Automated Scaling Mechanisms

CDE’s tool offers automatic scaling, adjusting virtual clusters dynamically as workloads fluctuate. This keeps resource usage efficient and prevents heavy demand from disrupting data workflows.
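
Autoscalers generally compare current demand with current capacity and adjust the number of workers within configured bounds. The function below is a simplified, hypothetical policy (pending tasks per executor, clamped between a minimum and a maximum); it is not CDE's actual autoscaling algorithm, and all parameter names are assumptions.

```python
import math

def desired_executors(pending_tasks, tasks_per_executor, min_executors, max_executors):
    """Return how many executors a virtual cluster should run (toy policy).

    Scale so each executor handles about `tasks_per_executor` pending tasks,
    clamped to the configured minimum and maximum.
    """
    needed = math.ceil(pending_tasks / tasks_per_executor)
    return max(min_executors, min(max_executors, needed))

# Quiet period: scale down to the floor.
print(desired_executors(0, 4, min_executors=2, max_executors=50))    # 2
# Normal load: 37 tasks at 4 per executor -> 10 executors.
print(desired_executors(37, 4, min_executors=2, max_executors=50))   # 10
# Demand spike: capped at the ceiling to protect the shared pool.
print(desired_executors(900, 4, min_executors=2, max_executors=50))  # 50
```

The floor keeps some capacity warm for quick response, while the ceiling stops a single workload from consuming the whole shared infrastructure during a spike.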

Increased Efficiency

CDE’s tool uses Apache Hadoop and Apache Spark for fast data processing. By distributing tasks across virtual clusters, organizations can process large volumes of data in parallel, so data moves through the organization faster and more efficiently.

Integration with Existing Workflows and Tools

CDE’s tool integrates with an organization’s existing data workflows and tools, so prior investments remain useful. This avoids the need for costly retraining or migration.

Key Features of the CDE Tool for Scaling Data Workflows

CDE’s tool includes a range of features designed to make data workflows more efficient and scalable.

Virtual Cluster Administration

The CDE tool provides a centralized dashboard for managing virtual clusters. Organizations can easily provision, configure, and monitor virtual clusters, which helps them maintain good resource utilization and performance.

Automated Allocation of Resources

CDE’s automated resource allocation assigns resources to virtual clusters dynamically based on workload demand. This helps keep resources well utilized and prevents resource shortages from disrupting data workflows.
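
Demand-based allocation can be as simple as dividing the available capacity in proportion to what each cluster requests. This sketch assumes hypothetical cluster names and integer core counts and uses the largest-remainder method; it is one fair-share approach among many, not the method CDE uses.

```python
def proportional_allocation(total_cores, demand):
    """Split total_cores across clusters in proportion to their demand.

    Uses the largest-remainder method so integer shares add up exactly to
    total_cores when demand exceeds capacity. No cluster gets more than it asked for.
    """
    total_demand = sum(demand.values())
    if total_demand <= total_cores:
        return dict(demand)  # enough capacity: every cluster gets its full request
    exact = {c: total_cores * d / total_demand for c, d in demand.items()}
    shares = {c: int(x) for c, x in exact.items()}
    leftover = total_cores - sum(shares.values())
    # Give the remaining cores to clusters with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda c: exact[c] - shares[c], reverse=True)
    for c in by_remainder[:leftover]:
        shares[c] += 1
    return shares

# Oversubscribed: 15 cores requested, only 10 available.
print(proportional_allocation(10, {"etl": 6, "reporting": 4, "ml": 5}))
# {'etl': 4, 'reporting': 3, 'ml': 3}
# Enough capacity: requests are granted as-is.
print(proportional_allocation(20, {"etl": 6, "reporting": 4}))
# {'etl': 6, 'reporting': 4}
```

Proportional sharing degrades every workload gracefully under contention instead of letting the first requester take everything.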

Scheduling and Monitoring Tasks

The CDE tool includes advanced features for scheduling and monitoring tasks. By scheduling data processing jobs and tracking their progress, organizations can make sure those jobs run on time and complete successfully.
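
Scheduling a workflow usually means running tasks in an order that respects their dependencies and recording each task's outcome for monitoring. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to order a hypothetical set of jobs; the job names and the `run_workflow` helper are illustrative and are not CDE's scheduling API.

```python
from graphlib import TopologicalSorter

# Hypothetical jobs mapped to the jobs they depend on.
dependencies = {
    "ingest": set(),
    "cleanse": {"ingest"},
    "transform": {"cleanse"},
    "report": {"transform"},
    "train_model": {"transform"},
}

def run_workflow(dependencies, run_job):
    """Run jobs in dependency order and record a status for each (for monitoring)."""
    status = {}
    for job in TopologicalSorter(dependencies).static_order():
        # Skip a job if any upstream job did not succeed, instead of using bad data.
        if any(status[dep] != "succeeded" for dep in dependencies[job]):
            status[job] = "skipped"
            continue
        try:
            run_job(job)
            status[job] = "succeeded"
        except Exception:
            status[job] = "failed"
    return status

def run_job(job):
    # Stand-in for submitting real work; simulate a failure in one job.
    if job == "transform":
        raise RuntimeError("simulated failure")

print(run_workflow(dependencies, run_job))
```

Because `transform` fails, both downstream jobs are marked as skipped, which is the kind of status a monitoring view would surface for follow-up.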

Data Governance and Security

CDE offers comprehensive security and data governance features, including auditing, encryption, and access controls. These features help protect sensitive data and meet compliance obligations.
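
Access control typically maps users to roles and roles to permitted actions, while auditing records each decision. The sketch below is a minimal, hypothetical role-based check with an in-memory audit log; the roles, actions, and function names are illustrative assumptions, not CDE's security API.

```python
from datetime import datetime, timezone

# Hypothetical role definitions: which actions each role may perform.
ROLE_PERMISSIONS = {
    "viewer": {"read"},
    "engineer": {"read", "run_job"},
    "admin": {"read", "run_job", "manage_cluster"},
}

audit_log = []

def is_allowed(user_roles, action):
    """Return True if any of the user's roles grants the action."""
    return any(action in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)

def authorize(user, user_roles, action):
    allowed = is_allowed(user_roles, action)
    # Record every decision, allowed or denied, for later auditing.
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "action": action,
        "allowed": allowed,
    })
    return allowed

print(authorize("alice", ["engineer"], "run_job"))     # True
print(authorize("bob", ["viewer"], "manage_cluster"))  # False
print(len(audit_log))                                  # 2
```

Logging denied requests as well as granted ones is what makes the audit trail useful for compliance reviews.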

How CDE’s Tool Compares with Competing Solutions

Although many products on the market aim to scale data workflows, Cloudera Data Engineering’s tool offers several advantages:

Simple Integration

Because CDE’s tool integrates with existing data workflows and tools, it avoids expensive retraining or migration projects.

Superior Performance

Built on Apache Spark and Apache Hadoop, the CDE tool delivers high-performance data processing. Distributing tasks across virtual clusters lets organizations process large volumes of data in parallel, making data workflows faster and more effective.

Strong Data Governance and Security

The CDE tool’s data governance and security features, including encryption, access control, and auditing, help organizations meet compliance requirements and safeguard sensitive data.

Convenient User Interface

The CDE tool offers an intuitive interface for deploying, managing, and monitoring virtual clusters, which shortens the learning curve and simplifies adoption for organizations.

Compatibility and Integration with Existing Data Workflows and Tools

The Cloudera Data Engineering tool is designed to integrate easily with existing data workflows and tools. It supports a wide variety of data sources, both structured and unstructured, and offers connectors for popular databases, data stores, and streaming platforms.

The CDE tool also supports integration with other Cloudera and Hortonworks products, including Cloudera Data Warehouse and Cloudera Machine Learning. This lets organizations build end-to-end data pipelines and make full use of Cloudera’s analytics and data management platform.

Conclusion

Efficiency is critical to successful data workflows. As organizations face growing volumes and complexity of data, scalable and efficient solutions become essential. Cloudera Data Engineering’s virtual cluster tool includes a range of features that streamline and simplify data engineering. By taking advantage of virtual clusters and the flexibility of cloud computing, businesses can make their data workflows more efficient and broaden their scope.